Last Updated: April 6, 2026

Artificial Intelligence: My Complete Guide, Real Tools & Workflow

AI Content Writing vs Human Writing: What Google Actually Prefers in 2026

Introduction

The AI vs human writing debate is being fought on the wrong battlefield. Most writers ask: “Will Google penalise my AI content?” — when the question Google is actually asking is far simpler: does this page contain something a person with real knowledge produced?

I have run 50+ content projects at AspirixWriters — academic papers, legal contracts, SEO articles, Hindi-English bilingual social content — and what I can tell you from that experience is this: Google has no preference between AI and human writing. It has a preference for helpfulness. That distinction changes everything about how you should use both tools.

“The same AI tool (Claude, ChatGPT, Jasper) can produce content that ranks on page one or content that gets filtered into irrelevance. The tool is not the variable. What the human does with it afterward is.”

My complete AI writing workflow → How To Use AI To Write Blog Posts Faster In 2026 (Step-by-Step Workflow)

The Myth Costing Writers Real Ranking Performance

“AI is fast but lacks soul. Humans are slow but emotionally resonant.” You have read this in every AI vs human article published in the last three years. It is not wrong exactly. It is just useless.

Here is what I have actually found across real projects: the quality gap between AI and human writing is not about emotion or creativity. It is about specificity. AI writes for a global average — content that is roughly accurate for most situations, readable to most audiences, and useful to almost no one in particular. Human expertise at its best is specific. It references a real situation, takes a defensible position, and contains knowledge that can only come from actually having done the work.

That specificity gap is precisely what Google’s Helpful Content system is designed to detect. A page about Rajasthan property law that mentions the correct 2026 stamp duty rate tends to outrank a page describing “Indian property law” in generic terms. It does not win because it is longer or better structured. It wins because it contains knowledge that required someone to actually know that specific thing.

Key insight: Google does not rank pages by running an AI detector. It evaluates helpfulness. The question it asks of every page is this: does it contain information produced by a person with genuine experience, or was it assembled by pattern-matching existing web content?

3 Real Cases: Where AI Won, Where It Failed, and What Fixed It

Case 1 — SEO Blog: AI Draft That Ranked After One Editing Pass

[Comparison screenshots: unedited AI draft vs. after human editing]

Client: Jaipur travel startup — 8 destination articles, 10-day deadline

- Problem: Manual writing at their budget was impossible. They had tried pure AI before — correct facts, zero local knowledge, no engagement.

- AI output (unedited): Correctly structured, readable, completely forgettable. Described Amber Fort the way a Wikipedia summary would — accurate in every detail, useful to nobody planning an actual visit.

- What I added: Best entry time (6:30 a.m., before tour groups arrive), the light quality in Sheesh Mahal at noon, two local guides by name, and one practical warning about the steep summer climb. None of this appeared in the AI draft. On-the-ground detail at that level of specificity is rarely present in AI training data.

- Result: All 8 articles were ranking within 6 weeks. Average time-on-page was 4.2 minutes, 3x that of the client’s previous content.

The lesson: the AI draft was a useful scaffold. The local knowledge I layered on top — earned through actual visits and client conversations — is what the algorithm rewarded. You cannot prompt your way to that knowledge.

Case 2 — Academic Writing: Where AI’s Confidence Became a Liability

[Comparison screenshots: AI-written version before editing vs. human-edited version]

Client: Delhi University PhD — methodology chapter rejected at supervisor review

- What happened: The PhD candidate had used ChatGPT independently before coming to me. The supervisor returned the chapter with the comment: “Your methodology does not match your data collection.”

- What AI did wrong: ChatGPT produced a confident, well-structured chapter citing a mixed-methods approach — completely inconsistent with the student’s purely quantitative data. The AI generated what methodology chapters typically look like, with no access to this student’s actual research design.

- The fix: I re-read the research questions, data collection instrument, and supervisor’s previous feedback. Rewrote the epistemological positioning from scratch. AI was then used only for reference formatting.

- Result: Supervisor accepted the revised chapter. Student’s exact words: “The original sounded more confident — but it was confidently wrong.”

Expert Insight: This is AI’s most dangerous failure mode in high-stakes writing — it produces fluent, structurally correct output that contains errors only a domain expert would catch. Fluency is not accuracy. No AI detector will flag a methodological mismatch. Only a human who knows the subject will.

Case 3 — Legal Document: Where AI Saved ₹25,000 and Where It Stopped

AI Content Writing vs Human Writing

AI Draft before Editing

Human-Edited Version (After Editing

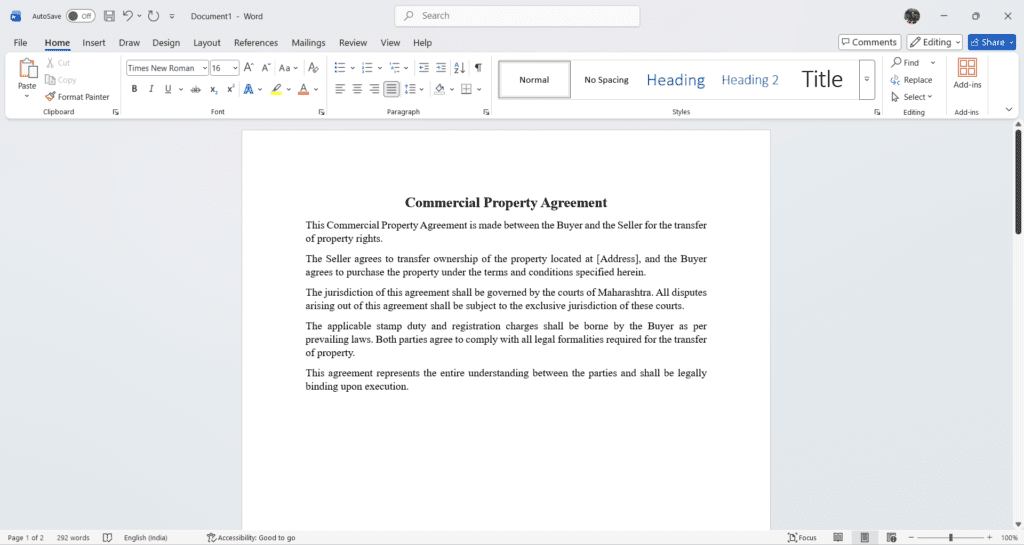

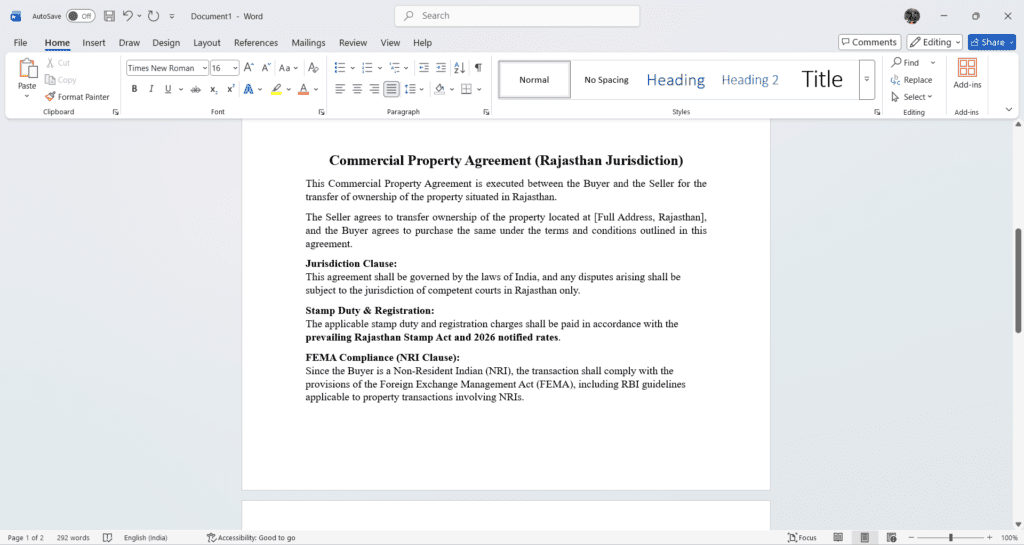

Client: NRI client — 15-page Rajasthan property agreement. Law firm quote: ₹35,000. Timeline: 3 days.

- What AI did well: Claude produced a correctly structured 15-page agreement with standard commercial property clauses in under one hour. Formatting was precise.

- Where AI stopped: It made three errors that are invisible unless you already know the correct answer. It generated a jurisdiction clause referencing Maharashtra courts, although the property was in Rajasthan. It missed the 2026 Rajasthan stamp duty rate. It also left out the FEMA compliance clause the NRI client needed.

- Result: ₹25,000 saved against the ₹35,000 law firm quote, after human corrections and a mandatory final review by a CA. Completed in 45 minutes of billable time.

Expert Insight: AI drafted a complete agreement in one hour but missed three legally critical elements that could invalidate or complicate the transaction. In legal work, errors stay invisible unless you know what to check.

What Google Actually Rewards in 2026

Google’s Helpful Content guidance and E-E-A-T framework are not about detecting AI. They are about rewarding four qualities AI cannot produce without a human providing the underlying material:

1. First-hand experience that only comes from doing the thing. Not “AI tools help researchers find papers.” Instead: “When I ran Connected Papers on a seed paper about urban heat islands in Indian cities, it surfaced 47 related papers in 45 minutes that Google Scholar did not show in 3 hours of manual searching.” The first sentence could be written by anyone. The second could only be written by someone who actually ran that query.

2. Positions, not summaries. AI produces summaries by default. Google’s quality raters score “original analysis” as a positive E-E-A-T signal. Every piece I publish has at least one section where I take a clear, defensible position backed by project experience, not a hedged overview of what various sources say.

3. Honest acknowledgement of what does not work. In my experience, pages that name specific failure modes consistently rank better than pages that only describe benefits. Google’s quality raters are trained to look for balanced, honest assessment as an expertise signal. An expert knows what fails. A content farm never mentions it.

4. Depth on a specific question, not breadth on a broad one. A 1,200-word post that completely answers “which AI tool is best for Rajasthan legal documents in 2026?” will usually outrank a 3,500-word post that only partially answers “which AI tool is best?” Specificity (geographic, contextual, experiential) is the ranking signal that is hardest to fake at scale.

The real Google standard in plain language: “Did a person with genuine knowledge of this specific topic contribute something here that could not have been assembled by pattern-matching existing web content?” If yes, the page can rank. If no, it gets filtered.

The Hybrid Model: Not a Compromise, a System

In my workflow, AI and human contribution are not two writers doing the same job in different styles. They handle structurally different problems.

| AI handles the mechanical burden | Human handles the intellectual contribution |
|---|---|
| First-draft structure and scaffolding | The argument and position the piece takes |
| Reference formatting and citation organisation | Specific examples from real experience |
| Consistency checks across long documents | Domain-specific error detection (jurisdiction, methodology) |
| Alternative phrasings for editing | The sentences that make a reader trust the writer |
| Research aggregation across sources | Editorial judgment about what to cut |
| Social and meta description variations | Local, regional, or contextual knowledge |

In my last 20 projects, AI generated about 60% of the word count in the first pass, and I rewrote about 40% before delivery. That rewritten 40% is always where AI generalised instead of being specific, hedged instead of taking a position, or sounded plausible while being wrong for this context.

The writers who struggle with AI use it at the wrong stage: they generate a full draft and then only polish its surface. The writers who get consistent results use it at the right stage. They scaffold first with AI, layer specific human knowledge on top, and then use AI again only for final formatting. The sequence matters as much as the tools.

Expert Insight: There is a test I apply before publishing anything that used AI assistance: “Is there at least one paragraph in this piece that only I could have written — because it references my specific client, my specific project, or my specific professional judgment?” If I cannot point to that paragraph, the content is not ready to publish.

When Each Approach Actually Wins

| AI writing wins when… | Human writing wins when… |
|---|---|
| You need consistent formatting across 20+ documents | Content requires domain judgment: legal, medical, academic |
| You are producing first-draft structure for well-documented topics | A client relationship depends on nuance and confidentiality |
| Speed matters more than depth: captions, meta descriptions | Content involves local, regional, or contextual knowledge |
| You need multiple format variations for A/B testing | The cost of being wrong is high: contracts, thesis submissions |
| ⚠ Unedited: generic, rankable only for very low-competition queries | ⚠ Alone at scale: economically unsustainable at most SEO publication frequencies |

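The practical conclusion: for most professional content work in 2026, pure AI and pure human writing are both poor economic choices. Unedited AI output is cheap but rarely holds a competitive ranking, and human-only writing is too slow and costly at most SEO publishing frequencies. The hybrid model is not a compromise. It is a third approach that neither camp has fully accepted yet.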

FAQs: AI Content Writing vs Human Writing

Q. Can AI content rank on Google in 2026?

Yes — but only after proper human editing. In my experience, AI-drafted content that goes through 40% rewriting with real examples consistently ranks well. Unedited AI output rarely holds a competitive position for long.

Q. Does Google penalise AI content?

Google does not penalise AI content — it penalises unhelpful content. If your page lacks real experience, depth, or original insight, it will underperform regardless of how it was written. The origin does not matter. The usefulness does.

Q. Is human writing still necessary in 2026?

For academic, legal, and expert content, absolutely yes. AI cannot replace domain judgment, local knowledge, or professional accountability. For basic social posts and meta descriptions, a light AI-plus-edit workflow is perfectly sufficient.

Q. How do I make AI content good enough to rank?

Three steps that actually work: add one specific real example per 300 words, replace every vague sentence with a clear position, and delete the first and last AI-generated paragraphs. Those bookend paragraphs are almost always generic filler that weakens the page.
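Two of these three steps are mechanical enough to check before the real rewriting starts: how many specific examples a draft needs under the one-per-300-words rule, and stripping the generic opening and closing paragraphs. The minimal Python sketch below assumes paragraphs are separated by blank lines. The function names `examples_needed` and `drop_bookend_paragraphs` are hypothetical helpers written for this illustration, not features of any tool mentioned in this article. The code only counts and trims; deciding whether an example is real and specific is still the human editor's job.

```python
import math


def examples_needed(word_count: int, words_per_example: int = 300) -> int:
    """Minimum number of specific real examples under a one-per-300-words rule of thumb."""
    if word_count <= 0:
        return 0
    # Round up: a 1,250-word draft still needs an example for its final 50 words.
    return math.ceil(word_count / words_per_example)


def drop_bookend_paragraphs(draft: str) -> str:
    """Remove the first and last paragraph of an AI draft (paragraphs split on blank lines)."""
    paragraphs = [p.strip() for p in draft.split("\n\n") if p.strip()]
    # Drafts with two or fewer paragraphs have nothing left once the bookends go.
    return "\n\n".join(paragraphs[1:-1])


# Usage: a 1,200-word draft needs at least 4 specific real examples.
print(examples_needed(1200))
print(drop_bookend_paragraphs("Intro filler.\n\nReal point.\n\nOutro filler."))
```

Rounding up rather than down is a deliberate choice here: the rule sets a floor on examples, so a partial 300-word block still counts as needing one.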

Q. What is the best approach: AI or human writing?

Neither alone. AI handles speed and structure; human expertise handles accuracy, judgment, and trust. The hybrid approach — AI draft plus meaningful human editing — consistently outperforms both pure methods in ranking speed and reader engagement.

Ready to 3x your content quality? Contact AspirixWriters — academic, legal, and SEO writing from Jaipur.

Sources

- Google Search Central: Content guidelines, developers.google.com/search/docs

- Digital Applied: 16-month Google ranking study, AI vs human content (2026)

- Google Search Central: Creating helpful, reliable, people-first content (E-E-A-T guidance), developers.google.com/search/docs/fundamentals/creating-helpful-content

About the Author

Dr. Rekha Khandelwal is a certified expert in AI tools and academic content development, with a strong focus on leveraging platforms like ChatGPT, Claude, and Gemini for research and digital writing. With a Ph.D. in Law and specialized training in AI-driven content creation, she helps students, researchers, and professionals create high-quality, SEO-optimized, and impactful content.

Author profile: Dr. Rekha Khandelwal | Academic Writer, Legal Technical Writer, AI Expert & Author | AspirixWriters

