Cluster Post 2 | Module 3: Research Methodologies

From Concept to Submission Series | 2026

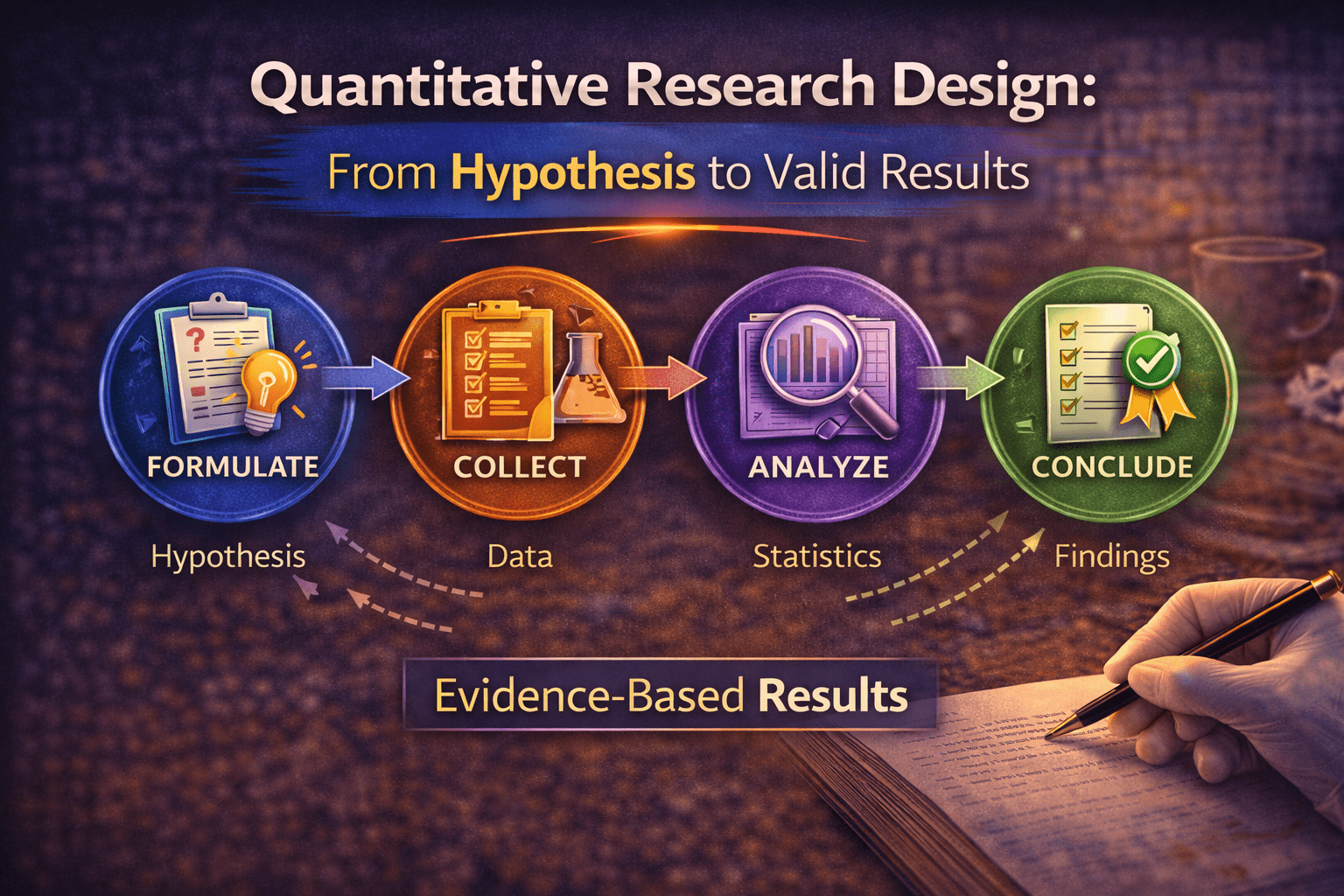

Quantitative Research Design: From Hypothesis to Valid Results

The module overview introduced experimental, survey, and correlational designs. This post goes deeper: how to operationalise variables rigorously, the distinction between internal and external validity and why it matters, how to determine your sample size before you collect data, and the specific threats to validity that reviewers look for in quantitative studies.

Starting Right: The Hypothesis

A research question asks what you want to know. A hypothesis states, in advance, what you expect to find and why. The difference matters more than it sounds.

Hypotheses make your reasoning visible and testable. When you articulate a specific prediction before collecting data, you commit to a logic that can be evaluated: if the data support the prediction, the reasoning behind it gains credibility; if they do not, the reasoning needs revision. This is the mechanism through which quantitative research builds knowledge.

A good hypothesis has three properties. It is specific — naming the exact variables and the direction of the expected relationship. It is grounded — derived from theory or prior research, not from intuition alone. And it is falsifiable — stated in a way that clearly defines what evidence would contradict it.

Weak hypothesis: “Peer mentoring will affect student retention.”

Strong hypothesis: “Students who receive weekly peer mentoring contact will show significantly higher first-year retention rates than students in a no-mentoring control condition, after controlling for socioeconomic status and prior academic achievement (predicted direction: positive effect; predicted mechanism: social integration).”

The strong version names the comparison condition, specifies the direction, identifies the control variables, and states the theoretical mechanism. This level of specificity serves a practical purpose beyond academic rigour: it defines your analysis plan before you collect a single data point, which protects against the temptation to adjust your hypotheses after seeing your data.

Operationalisation: Turning Concepts Into Measurements

Operationalisation is the process of defining exactly how you will measure an abstract concept. It is where most measurement error enters quantitative research, and it receives far less attention in methods courses than it deserves.

Consider “peer mentoring.” As a concept, it refers to a supportive relationship between peers that facilitates learning and adjustment. But as a variable in a study, it needs a precise operational definition: Is it the presence or absence of a formal mentoring assignment? The frequency of mentor contact? The quality of the relationship as rated by the mentee? The number of topics discussed? Each operationalisation captures a different aspect of the concept and will produce different results.

The choice of operationalisation is a theoretical claim. When you decide to measure peer mentoring as weekly contact frequency, you are claiming that frequency is the most important dimension — that what matters about mentoring is how often it occurs, not what is said or how the mentee feels about it. This claim should be justified by theory or prior research, not assumed by default.

The two tests every measure must pass

Reliability means the measure gives consistent results. Test-retest reliability checks whether the same participant scores similarly on two occasions close together in time (when no real change has occurred). Inter-rater reliability checks whether two coders independently assign the same codes or scores to the same data. Cronbach’s alpha checks whether items in a scale correlate with each other as expected. Report whichever form of reliability is relevant to your measure, and verify it in your own sample — a scale that was reliable with US undergraduates may not be reliable with Indian government college students.

Validity means the measure actually measures what it claims to. Face validity asks whether the items look relevant to the construct — a necessary but insufficient check. Content validity asks whether the measure covers the full domain of the construct, not just part of it. Criterion validity asks whether scores correlate with other measures they should correlate with. Construct validity — the most demanding test — asks whether the measure behaves as the theory predicts it should across a range of conditions.

When using a published instrument, report the reliability and validity evidence from the original validation study, and then report your own reliability estimates from your sample. Both are required. Saying “the scale was validated by Smith (2019)” without reporting your own alpha is insufficient — you need to show the scale worked in your specific sample.
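As a rough illustration of what "report your own alpha" involves, the sketch below computes Cronbach's alpha from raw item responses using the standard formula: k/(k − 1) × (1 − sum of item variances / variance of total scores). The function name `cronbach_alpha` and the five-respondent dataset are invented for illustration; a real analysis would use your full sample and, most likely, a statistics package.

```python
from statistics import variance

def cronbach_alpha(responses):
    """responses: one row per respondent, each row a list of item scores."""
    k = len(responses[0])  # number of items in the scale
    if k < 2:
        raise ValueError("alpha needs at least two items")
    # Sample variance of each item across respondents
    item_vars = [variance([row[i] for row in responses]) for i in range(k)]
    # Sample variance of each respondent's total score
    total_var = variance([sum(row) for row in responses])
    return (k / (k - 1)) * (1 - sum(item_vars) / total_var)

# Hypothetical data: 5 respondents x 3 Likert items (made up for illustration)
sample = [[4, 5, 4], [2, 3, 2], [5, 5, 4], [1, 2, 2], [3, 3, 3]]
alpha = cronbach_alpha(sample)
print(f"Cronbach's alpha = {alpha:.3f}")
```

Five respondents is far too few for a stable estimate; the point is only to show that alpha is computed from your own data, not inherited from the validation study.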

Research Designs: Choosing the Right One for Your Question

True experiments: the only design that establishes causation

True experiments require two elements: manipulation of the independent variable (you create the conditions, rather than measuring pre-existing ones) and random assignment of participants to conditions. Random assignment is what makes experiments the gold standard for causal inference: in expectation, it balances both known and unknown confounding variables across conditions, so that any systematic difference observed between conditions can be attributed to the manipulation rather than to pre-existing differences between participants.
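Random assignment can be as simple as shuffling the participant list and dealing people into conditions. The sketch below is one minimal way to do that; the function name `randomly_assign`, the condition labels, and the seed value are all assumptions made for the example, not a prescribed procedure.

```python
import random

def randomly_assign(participant_ids, conditions=("mentoring", "control"), seed=None):
    """Shuffle participants, then deal them out to conditions in turn."""
    rng = random.Random(seed)  # a fixed seed makes the allocation reproducible
    shuffled = list(participant_ids)
    rng.shuffle(shuffled)
    groups = {c: [] for c in conditions}
    for position, pid in enumerate(shuffled):
        groups[conditions[position % len(conditions)]].append(pid)
    return groups

# Hypothetical study with 40 participants numbered 1 to 40
groups = randomly_assign(range(1, 41), seed=2026)
for condition, members in groups.items():
    print(condition, len(members), sorted(members)[:5])
```

Recording the seed in your methods notes lets someone else regenerate the same allocation, which is useful if the assignment procedure is ever questioned.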

The most common mistake in reading experimental results: confusing statistical significance with practical significance. A study with N = 2,000 can detect very small effects as statistically significant. An effect of d = 0.08 (less than one-tenth of a standard deviation difference between groups) might be statistically significant with a large enough sample but utterly trivial in practice. Always report and interpret effect sizes alongside p-values.
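To see how sample size alone moves the p-value, the sketch below holds the effect fixed at d = 0.08 and varies the number of participants per group. It uses a large-sample normal approximation for a two-group comparison of means with equal group sizes, which is an assumption of this example; the function name `approx_two_sided_p` is hypothetical.

```python
from math import sqrt
from statistics import NormalDist

def approx_two_sided_p(d, n_per_group):
    """Large-sample normal approximation for a two-group comparison of means."""
    z = d * sqrt(n_per_group / 2)  # test statistic implied by effect size d
    return 2 * (1 - NormalDist().cdf(abs(z)))

# Same tiny effect, increasingly large samples
for n in (250, 1000, 5000):
    print(f"n per group = {n:>5}: p = {approx_two_sided_p(0.08, n):.4f}")
```

The effect is identical in every row; only the p-value changes. Under this approximation, d = 0.08 does not reach p < .05 until the sample is well beyond 1,000 per group, and it is no more practically important once it does.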

Quasi-experiments: when random assignment is not possible

In most educational and social research, random assignment is not feasible. You cannot randomly assign students to different schools, teachers to different classrooms, or regions to different policy regimes. Quasi-experimental designs compare groups that were not randomly assigned — which means pre-existing differences between groups are a persistent threat to valid causal inference.

The critical question for any quasi-experimental claim is: could a difference between groups be explained by pre-existing differences rather than by the intervention? The standard approaches to addressing this are matching (selecting comparison participants who resemble intervention participants on key variables), regression discontinuity (comparing participants just above and just below a threshold cutoff), and difference-in-differences (comparing change over time between groups, not just levels at one point).

Example: A quasi-experimental study compares retention rates at three colleges that received peer mentoring training with three that did not. Pre-existing differences in student demographics, college resources, and geographic location all threaten the causal interpretation. Addressing this requires either demonstrating baseline equivalence on key variables or using statistical controls — not simply noting the limitation and moving on.
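The arithmetic behind difference-in-differences is short enough to sketch. The retention figures below are invented for illustration, and the function name is hypothetical; the estimate is only interpretable as an intervention effect if the two groups would have followed parallel trends without the intervention, which the code cannot check.

```python
def difference_in_differences(treated_pre, treated_post, control_pre, control_post):
    """Change in the treated group minus change in the comparison group."""
    return (treated_post - treated_pre) - (control_post - control_pre)

# Hypothetical first-year retention rates (proportions), invented for illustration
did = difference_in_differences(
    treated_pre=0.70, treated_post=0.78,
    control_pre=0.72, control_post=0.75,
)
print(f"Difference-in-differences estimate: {did:+.2f}")
```

Comparing post-intervention levels alone (0.78 vs. 0.75) would suggest a 3-point gap; the difference-in-differences estimate accounts for the fact that the groups started at different levels and that both improved over time.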

Surveys and correlational designs

Survey and correlational designs are appropriate when you want to describe a population, measure the prevalence of something, or examine associations between variables, but not when you want to establish causation. The correlation-causation distinction is stated in every research methods textbook and is still routinely violated in the discussion sections of survey studies.

The discipline required: when you find a significant correlation between variables A and B, you have three possible interpretations: A causes B, B causes A, or a third variable C causes both. Survey designs cannot distinguish between these possibilities. Your discussion section must acknowledge this explicitly and reason about which interpretation is most plausible given the theory and context — not simply assert the causal interpretation that fits your hypothesis.
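The third-variable case can be made concrete with a small simulation. In the sketch below, A and B are each generated from C plus independent noise, so neither causes the other, yet they correlate substantially. All names and parameter values are assumptions chosen for the example. The partial correlation step is only available to a researcher who actually measured C.

```python
import random
from math import sqrt

def pearson_r(x, y):
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)

def partial_r(r_ab, r_ac, r_bc):
    """Correlation between A and B after removing the linear effect of C."""
    return (r_ab - r_ac * r_bc) / sqrt((1 - r_ac ** 2) * (1 - r_bc ** 2))

rng = random.Random(0)  # fixed seed so the simulation is reproducible
n = 5000
c = [rng.gauss(0, 1) for _ in range(n)]
a = [ci + rng.gauss(0, 1) for ci in c]  # A depends only on C plus noise
b = [ci + rng.gauss(0, 1) for ci in c]  # B depends only on C plus noise

r_ab = pearson_r(a, b)
r_partial = partial_r(r_ab, pearson_r(a, c), pearson_r(b, c))
print(f"r(A, B) = {r_ab:.2f}; partial r controlling for C = {r_partial:.2f}")
```

The raw correlation between A and B comes out near 0.5 even though there is no causal path between them, and it falls to roughly zero once C is controlled for. A survey that never measured C would see only the first number.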

Internal and External Validity: The Two Tensions Every Designer Faces

Internal validity is the degree to which your study supports causal inference — the degree to which observed differences between conditions can be attributed to the manipulation rather than to confounds. External validity is the degree to which findings generalise beyond the specific sample, setting, and operationalisation used.

These two forms of validity trade off against each other, and this trade-off is one of the most important constraints in quantitative research design. The tightly controlled laboratory experiment that maximises internal validity does so by creating artificial conditions that may not resemble any real-world setting. The naturalistic study that maximises external validity does so by relinquishing the control that would allow causal inference.

Understanding this trade-off changes how you evaluate and design studies. A finding with high internal validity but low external validity is useful for theory testing but may not inform practice. A finding with high external validity but weak internal validity may be highly relevant to practitioners but should not be interpreted as evidence that the intervention caused the outcome.

| Threat to internal validity | What it means and how to address it |
| --- | --- |
| Selection bias | Groups differ before the intervention. Address by random assignment or by demonstrating baseline equivalence on key variables. |
| History | Events outside the study affect one group more than another during the study period. Address by simultaneous data collection across conditions. |
| Maturation | Participants change over time regardless of the intervention (students learn throughout the semester). Address by including a control condition. |
| Testing effects | Repeated measurement sensitises participants to the measure. Address by varying measures across time points or using parallel forms. |
| Attrition | Participants drop out non-randomly; those who benefit least may be most likely to leave. Report dropout rates and compare completers to non-completers on baseline variables. |
| Demand characteristics | Participants behave differently because they know they are being studied. Address by blind conditions where possible. |
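Two of the remedies in the table, demonstrating baseline equivalence and comparing completers with non-completers, come down to the same calculation: how far apart are two groups on a baseline variable, in standard deviation units? The sketch below computes a standardised mean difference with a pooled standard deviation. The function name and the scores are invented for illustration, and what counts as an acceptably small difference is a judgment this post does not specify.

```python
from math import sqrt
from statistics import mean, variance

def standardised_mean_difference(group_a, group_b):
    """Mean difference divided by the pooled standard deviation."""
    na, nb = len(group_a), len(group_b)
    pooled_var = ((na - 1) * variance(group_a) + (nb - 1) * variance(group_b)) / (na + nb - 2)
    return (mean(group_a) - mean(group_b)) / sqrt(pooled_var)

# Hypothetical baseline achievement scores, invented for illustration
completers = [60, 65, 70, 75, 80]
dropouts = [50, 55, 60, 65, 70]
smd = standardised_mean_difference(completers, dropouts)
print(f"Baseline SMD (completers vs. dropouts) = {smd:.2f}")
```

A difference this large (more than one standard deviation) would mean the people who stayed were already quite different from those who left, so results from completers alone could not be read as representative of the original sample.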

Sample Size: Determining It Before You Collect Data

The module overview says larger samples are generally better. This section explains the principled way to determine how large your sample needs to be — which is not “as large as I can manage” but a calculation based on what effect you expect and how confident you need to be in detecting it.

Power analysis is the standard method for determining minimum sample size in quantitative research. It requires four inputs:

- Effect size: How large is the effect you expect to find? Use prior research or established benchmarks (Cohen’s d: small = 0.2, medium = 0.5, large = 0.8; r: small = 0.1, medium = 0.3, large = 0.5).

- Alpha level: Your significance threshold, conventionally 0.05, meaning you accept a 5% probability of a false positive when there is no true effect.

- Desired power: The probability of detecting the effect if it exists — conventionally 0.80, meaning you accept a 20% probability of missing a real effect.

- Design: The specific statistical test you will use determines the formula.

Free power analysis software (G*Power, available at gpower.hhu.de) calculates required sample size given these inputs. For a two-group comparison (t-test) with a medium effect size (d = 0.5), alpha = .05, and power = .80, the required sample is approximately 64 participants per group — 128 total. For a small effect (d = 0.2), the required sample jumps to approximately 394 per group.
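For readers who want to see where these numbers come from, the sketch below uses the textbook normal approximation for a two-sided, two-group comparison with equal group sizes: n per group ≈ 2(z₁₋α/₂ + z_power)² / d². This is an approximation, not the exact t-based calculation that G*Power performs, and the function name `n_per_group` is hypothetical.

```python
from math import ceil
from statistics import NormalDist

def n_per_group(d, alpha=0.05, power=0.80):
    """Normal-approximation sample size per group, two-sided two-group test."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for alpha
    z_power = NormalDist().inv_cdf(power)          # quantile for desired power
    return ceil(2 * (z_alpha + z_power) ** 2 / d ** 2)

for d in (0.2, 0.5, 0.8):
    print(f"d = {d}: about {n_per_group(d)} per group")
```

The approximation gives 393 per group for d = 0.2 and 63 for d = 0.5, one participant below the G*Power figures quoted above, because it ignores the extra uncertainty from estimating the standard deviation. Use it to sanity-check the order of magnitude and report the exact calculation from dedicated software.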

Why this matters for Indian research: many quantitative studies in Indian universities use samples of 50–100 total participants for designs that require several hundred for adequate power. This means these studies are systematically underpowered: they will miss real effects more than 20% of the time, and the effects they do detect may overestimate the true effect size (in a small sample, only estimates inflated by sampling noise are large enough to reach significance). Reporting a power analysis in your methodology chapter, even a post-hoc one showing what power your achieved sample gave you, signals methodological sophistication that reviewers and examiners notice.

🔱 For Law Students

Quantitative methods appear in legal research primarily in two contexts: empirical legal research (studying the behaviour of courts, litigants, or legal institutions through data) and socio-legal research (studying how law operates in social contexts). Both require the same rigour as quantitative social science research, applied to legally relevant phenomena.

Quantitative methods in empirical legal research

Studies that analyse large numbers of court decisions, measure disparities in sentencing, track compliance rates with regulatory requirements, or examine the effects of legal interventions on behaviour all use quantitative methods. The design considerations described above apply fully: operationalisation must be rigorous (how exactly is a “favourable” court outcome defined?), correlation-causation distinctions must be respected (a correlation between judicial appointment type and decision direction does not establish that appointment type causes decision direction), and sample size must be adequate for the analysis planned.

The most common quantitative method in empirical legal research is regression analysis — examining whether a legal outcome (dependent variable) is predicted by case characteristics or litigant attributes (independent variables) after controlling for other relevant factors. If you are using regression, you must report: the full model including all predictors, standardised and unstandardised coefficients, standard errors, confidence intervals, model fit statistics (R²), and checks for violations of regression assumptions (multicollinearity, heteroskedasticity, normality of residuals).

A note on Indian court data

Indian court data, whether from the official NJDG (National Judicial Data Grid) or from a commercial database such as SCC Online, has specific limitations that affect quantitative analysis. Coverage is uneven across courts and time periods; case classification is inconsistent; and some variables (such as litigant characteristics) are systematically missing. Any quantitative study using Indian court data must address these data quality issues explicitly in the methods section. Do not dismiss them as minor limitations; explain what steps were taken to check data quality and what the remaining limitations mean for the conclusions that can be drawn.

References

- Creswell, J. W., & Creswell, J. D. (2022). Research Design: Qualitative, Quantitative, and Mixed Methods Approaches (6th ed.). Sage.

- Field, A. (2024). Discovering Statistics Using IBM SPSS Statistics (6th ed.). Sage.

- Cohen, J. (1988). Statistical Power Analysis for the Behavioral Sciences (2nd ed.). Lawrence Erlbaum.

- Shadish, W. R., Cook, T. D., & Campbell, D. T. (2002). Experimental and Quasi-Experimental Designs for Generalized Causal Inference. Houghton Mifflin.

- G*Power: Free power analysis software — gpower.hhu.de

- National Judicial Data Grid (NJDG) — India court statistics. njdg.gov.in

Next: Cluster Post 3 — Qualitative Research Design: Choosing the Right Approach

The Complete Guide to Research Paper Structure: IMRAD Format, Thesis Organization & Academic Writing (2026)

From Concept to Submission: A Complete Guide to Research Paper and Thesis Writing Module 1:…

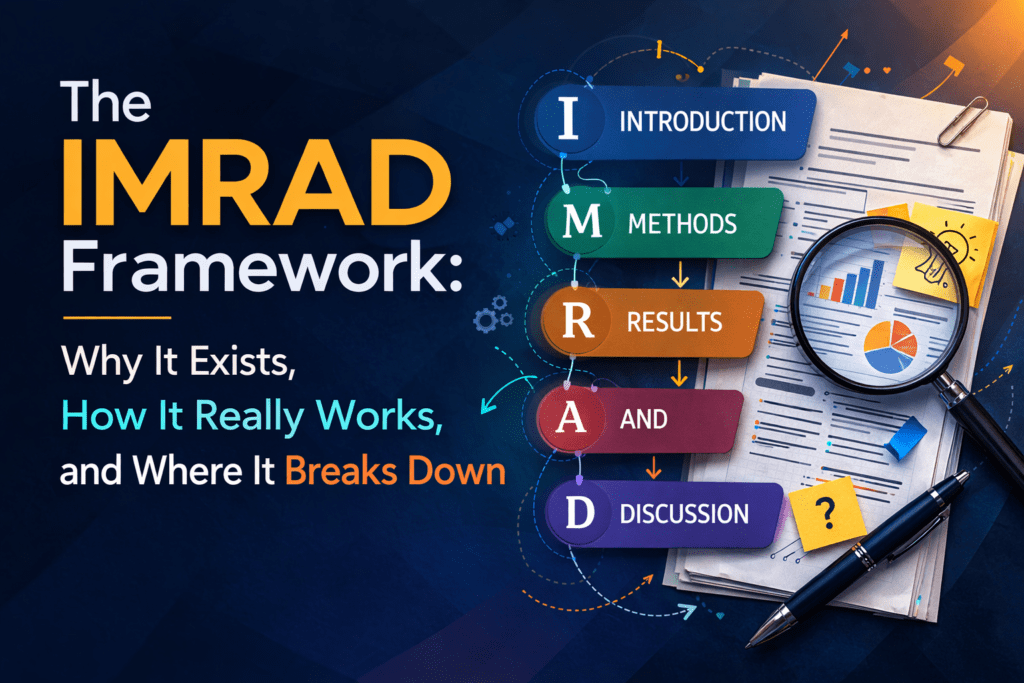

The IMRAD Framework: Why It Exists, How It Really Works, and Where It Breaks Down

Cluster Post 1 | Module 1: Understanding the Structure of Research Papers and Theses From…

How to Write a Research Introduction That Reviewers Cannot Ignore

Cluster Post 2 | Module 1: Understanding the Structure of Research Papers and Theses From…

How to Write a Methods Section That Reviewers Will Trust

Cluster Post 3 | Module 1: Understanding the Structure of Research Papers and Theses From…

The Results Section: How to Present Findings Without Letting Interpretation Slip In

Cluster Post 4 | Module 1: Understanding the Structure of Research Papers and Theses From…

The Discussion Section: How to Turn Findings Into Knowledge

Cluster Post 5 | Module 1: Understanding the Structure of Research Papers and Theses From…

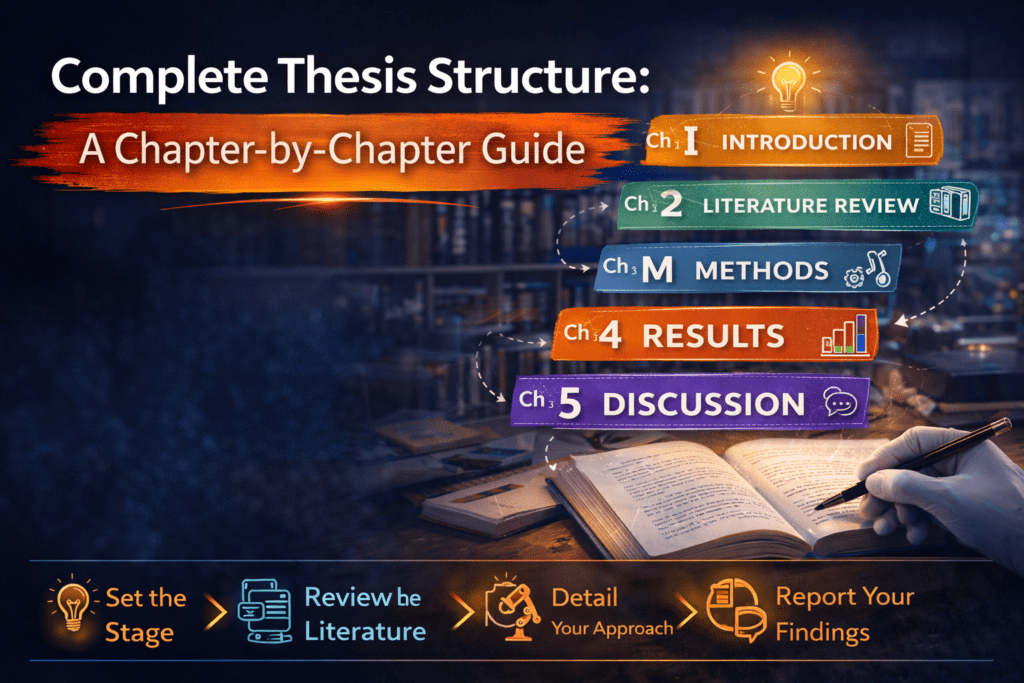

Complete Thesis Structure: A Chapter-by-Chapter Guide

Cluster Post 6 | Module 1: Understanding the Structure of Research Papers and Theses From…

10 Structural Mistakes That Get Research Papers Rejected — And How to Fix Every One

Cluster Post 7 | Module 1: Understanding the Structure of Research Papers and Theses From…

The Academic Writing Process: Complete Guide from First Draft to Submission (2026)

From Concept to Submission: A Complete Guide to Research Paper and Thesis Writing Module 2,…

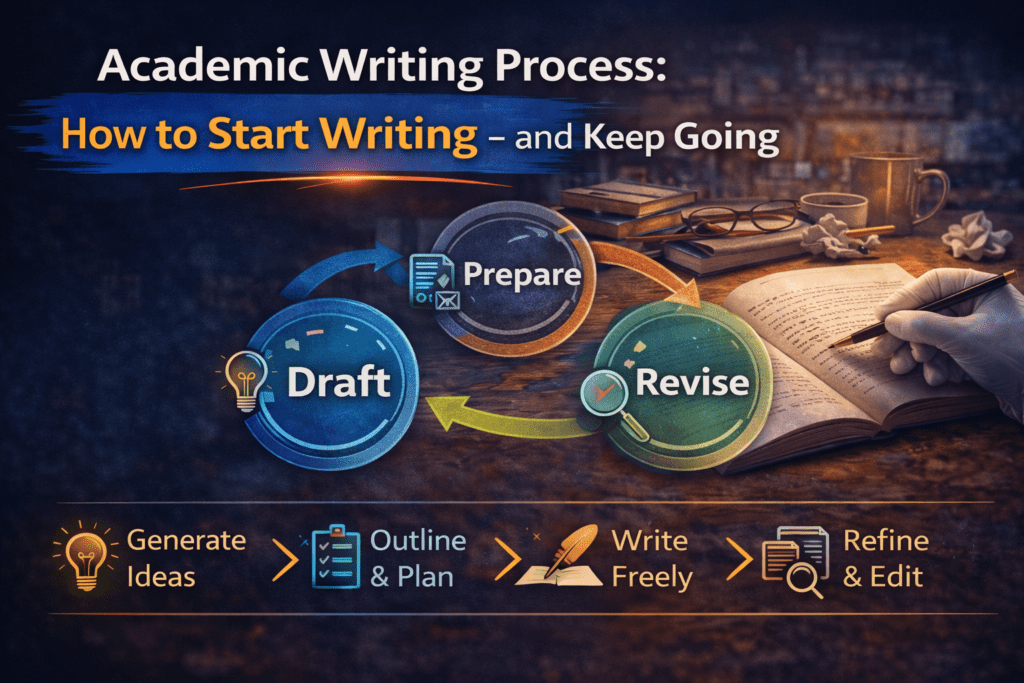

How to Start Writing — and Keep Going

Cluster Post 1 | Module 2: The Academic Writing Process From Concept to Submission Series …

How to Write Clear Engaging Academic Prose

Cluster Post 2 | Module 2: The Academic Writing Process From Concept to Submission Series …

The Revision Process: How to Turn a Draft Into a Submission

Cluster Post 3 | Module 2: The Academic Writing Process From Concept to Submission Series …

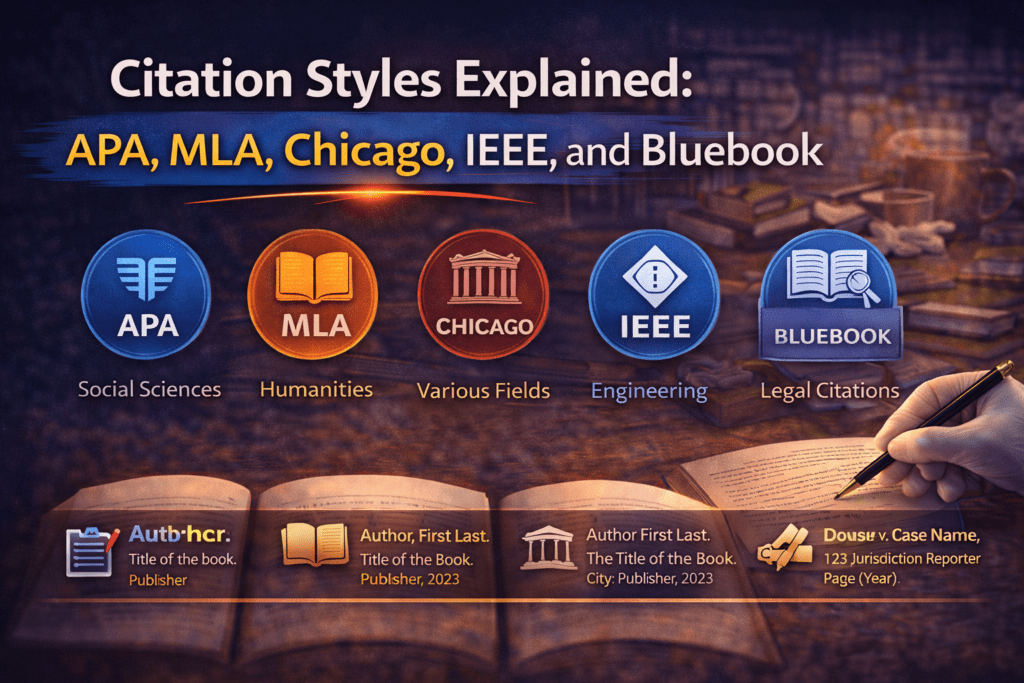

Citation Styles Explained: APA, MLA, Chicago, IEEE, and Bluebook

Cluster Post 4 | Module 2: The Academic Writing Process From Concept to Submission Series …

Reference Management: Zotero and Mendeley from Setup to Submission

Cluster Post 5 | Module 2: The Academic Writing Process From Concept to Submission Series …

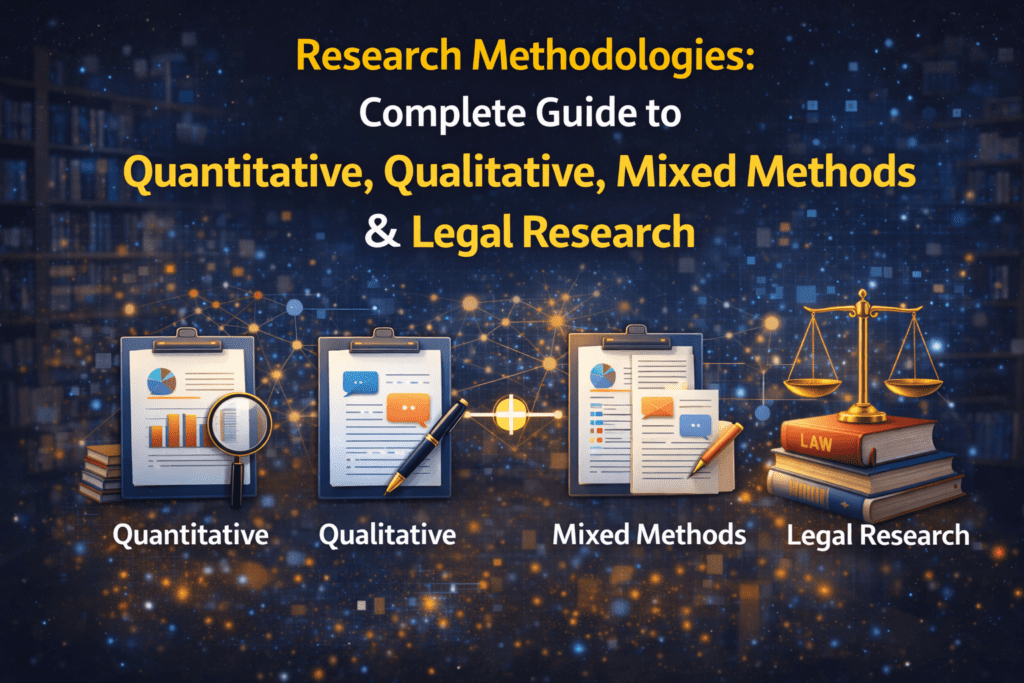

Research Methodologies: Complete Guide to Quantitative, Qualitative, Mixed Methods & Legal Research (2026)

Why Methodology Determines Research Quality Here’s what thesis examiners focus on first: your methodology section…