Last Updated: March 31, 2026

Sampling

Module 3: Research Methodologies

From Concept to Submission Series | 2026

Academic Writing Mastery: The Complete 2026 Guide To Research Papers, Thesis & Dissertation Writing

Sampling: Choosing Who to Study and How Many

The module overview introduced probability and purposive sampling with brief descriptions. This post goes deeper: the logic behind each sampling approach and how it determines what conclusions you can draw, how to write a sampling justification that satisfies reviewers, what saturation actually means and how to demonstrate it, and the most common sampling mistakes that generate examiner queries.

The Fundamental Logic: What Sampling Is For

Sampling is not primarily a practical problem — it is an epistemological one. The sampling decisions you make determine what population your findings apply to, what conclusions you can legitimately draw, and what claims of generalisability you can make. Getting the logic wrong produces findings that are technically sound but intellectually indefensible.

The core question every sampling decision must answer is: what do I need my sample to be in order for my findings to be valid? For quantitative research, validity usually requires representativeness — the sample should be structured so that its characteristics reflect those of the population you want to generalise to. For qualitative research, validity requires informativeness — the sample should be selected so that participants can provide rich, relevant insight into the phenomenon you are studying.

These are different logics, and they produce different sampling strategies. Applying representativeness logic to qualitative sampling — selecting participants to be demographically representative of a population — is a category error that produces a sample too large for in-depth analysis and too varied for thematic depth. Applying informativeness logic to quantitative sampling — selecting participants because they have interesting things to say — produces a biased sample that cannot support statistical inference.

Probability Sampling: When and How

Probability sampling gives every member of the target population a known, non-zero chance of selection. This property is what enables statistical inference from a sample to a population — the mathematical logic of inferential statistics requires that observations are drawn by a random process.

Simple random sampling

Every member of the population has an equal probability of selection. Requires a complete sampling frame — a list of every member of the population from which to sample randomly. In practice, complete sampling frames are rarely available for the populations social scientists study. A complete list of all first-year students at Indian government colleges does not exist centrally; a list of students at a specific college does.
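As a minimal sketch of what simple random sampling looks like once a frame exists, the snippet below draws from a college-level list. The frame contents, the `simple_random_sample` helper, and the seed are illustrative assumptions, not part of any real dataset.

```python
import random


def simple_random_sample(frame, n, seed=None):
    """Draw n units without replacement; each unit in the frame is equally likely."""
    if n > len(frame):
        raise ValueError("sample size exceeds the sampling frame")
    rng = random.Random(seed)  # a recorded seed makes the draw reproducible
    return rng.sample(frame, n)


# Hypothetical frame: the enrolment roll of first-year students at one college
frame = [f"STU{i:04d}" for i in range(1, 1201)]
sample = simple_random_sample(frame, 100, seed=42)
```

Recording the seed in the methodology chapter lets an examiner verify that the draw was in fact random.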

Stratified random sampling

The population is divided into subgroups (strata) defined by a characteristic relevant to the research question, and random samples are drawn from each stratum. Stratification ensures representation of each subgroup and increases precision when the characteristic used to stratify is correlated with the outcome of interest.

Example: in a study examining peer mentoring and retention across disciplines, stratify by discipline (Arts, Science, Commerce) and randomly sample from each stratum in proportion to its share of the population (for example, 40% Arts, 30% Science, 30% Commerce). This ensures that discipline-level variation is represented and allows discipline-specific analyses.
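The proportional allocation described in this example could be sketched as follows. The stratum sizes (800, 600, 600) are invented to match a 40/30/30 split, and both function names are hypothetical.

```python
import random


def proportional_allocation(stratum_sizes, total_n):
    """Split total_n across strata in proportion to stratum size.

    Uses largest-remainder rounding so the allocations sum to total_n.
    """
    population = sum(stratum_sizes.values())
    exact = {s: total_n * size / population for s, size in stratum_sizes.items()}
    alloc = {s: int(x) for s, x in exact.items()}
    leftover = total_n - sum(alloc.values())
    by_remainder = sorted(exact, key=lambda s: exact[s] - alloc[s], reverse=True)
    for s in by_remainder[:leftover]:
        alloc[s] += 1
    return alloc


def stratified_sample(frames, total_n, seed=None):
    """Draw a simple random sample within each stratum."""
    rng = random.Random(seed)
    sizes = {s: len(units) for s, units in frames.items()}
    alloc = proportional_allocation(sizes, total_n)
    return {s: rng.sample(frames[s], alloc[s]) for s in frames}


# Hypothetical frames, one list of student IDs per discipline
frames = {
    "Arts": [f"A{i}" for i in range(800)],
    "Science": [f"S{i}" for i in range(600)],
    "Commerce": [f"C{i}" for i in range(600)],
}
sample = stratified_sample(frames, 300, seed=1)
```

With these assumed sizes, a total sample of 300 is split into 120 Arts, 90 Science, and 90 Commerce students.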

Cluster sampling — and its hidden limitation

When a complete individual-level sampling frame does not exist but a frame of groups (clusters) does, randomly sample clusters and then study all or a random sample of members within selected clusters. Useful for geographically dispersed populations: randomly sample colleges from a district, then survey all first-year students at selected colleges.

The limitation: cluster sampling introduces design effects — members of the same cluster are more similar to each other than to members of other clusters, which reduces effective sample size. Standard errors must be adjusted for clustering in the analysis, using software that accounts for this (Stata, R’s survey package). Many researchers using cluster samples apply standard error formulas appropriate for simple random samples, which underestimates uncertainty and produces misleadingly narrow confidence intervals.
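To see how much clustering can cost, a common approximation is Kish's design effect, DEFF = 1 + (m - 1) × ICC, where m is the average cluster size and ICC is the intraclass correlation. The sketch below assumes roughly equal cluster sizes; the numbers are illustrative, not drawn from any study.

```python
from math import sqrt


def design_effect(avg_cluster_size, icc):
    """Kish's approximate design effect for roughly equal-sized clusters."""
    return 1 + (avg_cluster_size - 1) * icc


def effective_sample_size(n, avg_cluster_size, icc):
    """Number of independent observations the clustered sample is worth."""
    return n / design_effect(avg_cluster_size, icc)


# Hypothetical study: 500 students from 10 colleges (50 each), ICC = 0.05
deff = design_effect(50, 0.05)                # about 3.45
n_eff = effective_sample_size(500, 50, 0.05)  # about 145
se_inflation = sqrt(deff)                     # standard errors grow by about 1.86x
```

Under these assumptions, 500 clustered respondents carry roughly the information of 145 independent ones, which is why unadjusted standard errors are too small.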

Convenience sampling: when and how to defend it

Most quantitative research in Indian universities uses convenience samples — available participants rather than randomly selected ones. This is a pragmatic reality, not automatically a fatal flaw, but it requires explicit acknowledgement and careful limitation of the conclusions drawn.

A convenience sample cannot support claims about a defined population beyond the sample itself. A study of 450 students at three Rajasthan government colleges cannot claim to represent Indian government college students generally. It can claim to examine the phenomenon in a specific, described context, and it can generate findings that are plausible candidates for generalisation pending replication in other contexts.

The methodology chapter must: describe the sampling frame precisely (which colleges, which year groups, which departments), explain why this sample was accessible and what it is a sample of, and constrain the generalisability claims in the discussion to match the sample actually studied.

Purposive Sampling: The Logic of Qualitative Selection

Purposive sampling selects participants or cases deliberately, based on characteristics relevant to the research question, rather than randomly. This is not a compromise forced by the impossibility of random sampling — it is the logically correct approach when the goal is insight rather than statistical representativeness.

| Purposive strategy | When to use it |
| --- | --- |
| Maximum variation | Select participants who vary widely on characteristics of interest. Patterns that hold across maximum variation are more robust than patterns found only in a narrow population. Good for exploratory research where you want to map the full range of a phenomenon. |
| Homogeneous | Select participants who share a key characteristic. Useful when you want to understand a specific subgroup in depth without variation on the characteristic of interest. |
| Typical case | Select participants or cases that are representative of the common experience. Useful when you want to describe what is typical rather than map the full range. |
| Critical case | Select a case where the phenomenon should be especially visible or where your theory should especially apply. If it does not hold here, it is unlikely to hold elsewhere. |
| Extreme or deviant case | Select unusual cases to understand boundary conditions. What happens at the extremes illuminates what happens in the middle. |
| Snowball | Ask initial participants to refer others. Useful for reaching populations that are hard to identify or access, such as stigmatised groups, professional networks, and covert communities. |

The key to writing a purposive sampling justification is explaining not just what strategy you used but why that strategy fits your research question. Maximum variation sampling is appropriate when you want to identify patterns that hold across diverse cases; it is not appropriate when you want to understand a specific, bounded experience. The justification connects the sampling logic to the research question.

Weak: “Purposive sampling was used to select thirty participants.”

Strong: “Purposive sampling using a maximum variation strategy was used to select thirty participants who varied across three dimensions identified as theoretically relevant: discipline (Arts, Science, Commerce), geographic origin (urban, semi-urban, rural), and first-generation status (first-generation vs. continuing-generation). Maximum variation was chosen because the research question concerns peer mentoring experience broadly across the government college population; selecting a homogeneous sample would risk generating findings applicable only to a narrow subgroup.”

Sample Size: The Right Answer for Each Approach

Quantitative sample size: power analysis

The module overview gives rough guidelines — 30 to 50 per group for basic comparisons, several hundred for survey research. These are useful starting points but not substitutes for a power analysis. As explained in Post 2, a power analysis takes your expected effect size, your alpha level, and your desired power and calculates the minimum sample size needed to detect the effect reliably.

Report your power analysis in the methodology chapter. State the expected effect size and its source (prior research, published meta-analysis, or Cohen’s benchmarks with justification), your alpha level, your desired power (conventionally .80), and the calculated minimum N. If your achieved sample falls below the minimum, report the power your achieved sample provides for the expected effect size. A post-hoc power calculation in G*Power, entered with the expected effect size rather than the observed one, takes five minutes and adds considerable credibility to a methodology chapter.
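For intuition about what such a calculation does, here is a rough sketch of the a priori sample size for a two-group comparison of means. It uses the normal approximation n = 2 × ((z₁₋α/₂ + z_power) / d)² rather than the exact t-based method G*Power applies, so it can come out a participant or two lower per group. The function name is hypothetical.

```python
from math import ceil
from statistics import NormalDist


def n_per_group(effect_size_d, alpha=0.05, power=0.80):
    """Approximate n per group for a two-sided, two-sample comparison of means.

    effect_size_d is Cohen's d. Normal approximation only; treat the
    result as a lower bound and confirm it in dedicated software.
    """
    if effect_size_d <= 0:
        raise ValueError("effect size must be positive")
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)  # critical value for the two-sided test
    z_power = z.inv_cdf(power)          # quantile matching the desired power
    return ceil(2 * ((z_alpha + z_power) / effect_size_d) ** 2)


medium = n_per_group(0.5)  # 63 per group for Cohen's "medium" benchmark
small = n_per_group(0.2)   # 393 per group for Cohen's "small" benchmark
```

The jump from 63 to 393 per group shows why the expected effect size, and its source, is the figure reviewers scrutinise most closely.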

Qualitative sample size: the saturation standard

Saturation — the point at which new participants are not generating new codes, themes, or theoretical insights — is the qualitative equivalent of statistical power as a sample size criterion. It is the right standard, but it is widely misunderstood and inconsistently applied.

Saturation is not a single moment that arrives cleanly. It is a judgment the researcher makes, incrementally, about whether the pattern of what is being heard is stable. You do not know you have reached saturation until you have gone past it — collected several additional interviews and found that they are confirming, not extending, your existing themes. Saturation is therefore not a stopping rule you apply in advance; it is a retrospective judgment you make after analysis.

How to demonstrate saturation in your methodology chapter: report the number of participants, the number who were interviewed before themes stabilised, and the number of additional interviews conducted after that point to confirm stability. This demonstrates that saturation was actively tested rather than assumed.

“Thematic stability was reached after approximately eighteen interviews, when new participants were elaborating existing themes rather than introducing new ones. Six additional interviews were conducted beyond this point to confirm stability; none generated themes not already identified. The final sample of twenty-four participants therefore exceeds the saturation point, providing confidence that the theme structure is stable within this population and context.”
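One way to back a statement like this with an audit trail is to log which codes each interview contributed and count how many were new. The sketch below is a simplified illustration: it tracks codes rather than interpreted themes, the code labels are invented, and the judgment about stability remains the researcher's.

```python
def saturation_summary(codes_per_interview):
    """Summarise when new codes stopped appearing across ordered interviews."""
    seen = set()
    new_counts = []
    for codes in codes_per_interview:
        new = set(codes) - seen  # codes not heard in any earlier interview
        new_counts.append(len(new))
        seen |= new
    # 1-indexed position of the last interview that added a new code
    last_new = max((i + 1 for i, c in enumerate(new_counts) if c > 0), default=0)
    return {
        "new_codes_per_interview": new_counts,
        "last_interview_with_new_code": last_new,
        "confirmatory_interviews": len(codes_per_interview) - last_new,
        "total_codes": len(seen),
    }


# Hypothetical coding log: one set of codes per interview, in order
interviews = [
    {"belonging", "workload"},
    {"belonging", "language"},
    {"workload", "finance"},
    {"language", "belonging"},
    {"finance"},
]
summary = saturation_summary(interviews)
```

In this toy log the last new code appears in interview 3, and interviews 4 and 5 are confirmatory. These are the same three figures the methodology statement reports.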

The guidelines debate: what counts as enough for qualitative research

The module mentions that PhD committees often expect 15 to 30 interviews. This is accurate but misleading if taken as a target. Fifteen interviews with highly knowledgeable participants in a well-bounded context may reach saturation comfortably; thirty interviews with participants who have limited direct experience of the phenomenon may never reach it.

The appropriate number of participants depends on the tradition (IPA typically works with smaller samples of 4–10; grounded theory may require 20–30 or more), the heterogeneity of the population (diverse populations require more participants to reach saturation), and the complexity of the phenomenon (phenomena with multiple dimensions require more data to map fully than simpler ones). Cite Braun and Clarke, Creswell, or the tradition-specific methodology texts to justify your sample size, not just a committee guideline.

The Most Common Sampling Mistakes

- Calling a convenience sample random: The most consequential sampling error. Convenience samples cannot support statistical inference to a population in the same way random samples can. Mislabelling a convenience sample as random invalidates the inferential claims built on it.

- Purposive sampling without a rationale: Stating that purposive sampling was used without explaining what characteristics participants were selected for and why those characteristics are relevant to the research question.

- Over-claiming generalisability from a convenience or purposive sample: Drawing population-level conclusions from a sample that cannot support them. Conclusions must be scoped to the sample that was actually studied.

- Declaring saturation without demonstrating it: Stating “theoretical saturation was reached” without describing the process by which it was determined. Examiners increasingly expect to see evidence of how saturation was assessed, not just a claim that it occurred.

- Ignoring attrition: For longitudinal or multi-phase designs, failing to report how many participants dropped out between phases, and whether those who dropped out differed systematically from those who remained. Non-random attrition is a serious threat to validity that must be addressed, not mentioned as a minor limitation.
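For the attrition point, a first check is to compare those who dropped out with those who stayed on a baseline measure. The sketch below reports the attrition rate and a standardised mean difference using the pooled standard deviation. The participant IDs, scores, and function name are invented, and a full analysis would compare several baseline variables, not one.

```python
from math import sqrt
from statistics import mean, variance


def attrition_report(baseline, retained_ids):
    """Compare dropouts with completers on one baseline score.

    baseline maps participant ID to baseline score; retained_ids is the
    set of IDs that completed the later phase.
    """
    stayed = [score for pid, score in baseline.items() if pid in retained_ids]
    dropped = [score for pid, score in baseline.items() if pid not in retained_ids]
    if len(stayed) < 2 or len(dropped) < 2:
        raise ValueError("need at least two participants in each group")
    pooled_var = (
        (len(stayed) - 1) * variance(stayed) + (len(dropped) - 1) * variance(dropped)
    ) / (len(stayed) + len(dropped) - 2)
    diff = mean(dropped) - mean(stayed)
    return {
        "attrition_rate": len(dropped) / len(baseline),
        "mean_stayed": mean(stayed),
        "mean_dropped": mean(dropped),
        # undefined when there is no variation in either group
        "std_mean_diff": diff / sqrt(pooled_var) if pooled_var > 0 else float("nan"),
    }


# Hypothetical baseline scores for six participants; four completed phase two
baseline = {"p1": 10, "p2": 12, "p3": 14, "p4": 20, "p5": 22, "p6": 24}
report = attrition_report(baseline, {"p1", "p2", "p3", "p4"})
```

A large standardised difference, as in this invented example, signals that attrition was not random and needs to be discussed as a threat to validity.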

FAQs

Q: What is the difference between probability and non-probability sampling?

Probability sampling uses random selection so that every member of the population has a known, non-zero chance of inclusion, which enables statistical generalisation to the population. Types include simple random, stratified, systematic, and cluster sampling. Non-probability sampling selects participants without randomisation, whether by deliberate criteria, referral, or availability. It does not enable statistical generalisation but is appropriate for qualitative research, hard-to-reach populations, and exploratory studies. Types include purposive, snowball, convenience, and quota sampling. The choice depends on your research question and whether generalisation is your goal.

Q: How do you calculate sample size for quantitative research?

Sample size for quantitative research is calculated based on: the effect size you expect to detect (small effects need larger samples); the desired statistical power (typically 0.80 — 80% chance of detecting a real effect); the significance level (typically α = 0.05); and the statistical test you will use. Use G*Power software (free) to calculate required sample size before data collection. Reporting ‘we used a convenience sample of whatever we could get’ is not a sample size justification — reviewers will flag this.

Q: What is snowball sampling and when should you use it?

Snowball sampling recruits initial participants who then refer others from their networks. It is appropriate for hard-to-reach or hidden populations where no sampling frame exists — undocumented migrants, illegal drug users, whistleblowers, elite groups with gatekeeping. It is not appropriate when representativeness is required, because participants refer people from their own networks, producing a biased sample. Acknowledge snowball sampling’s limitations in your methodology: the sample reflects the social networks of your initial contacts.

Q: What is saturation in qualitative research sampling?

Saturation is the point at which collecting additional data produces no new themes, categories, or insights. It is the qualitative equivalent of achieving adequate statistical power. Theoretical saturation (from grounded theory) occurs when new data no longer develops or refines the emerging theory. Thematic saturation occurs when no new themes emerge from additional interviews. Document when and how you determined saturation — the decision should be systematic, not a feeling. Saturation typically occurs between 12 and 30 interviews depending on the heterogeneity of the participant group.

Q: What is stratified sampling and when is it used?

Stratified sampling divides the population into subgroups (strata) based on relevant characteristics (age, gender, institution type, district) and then randomly samples from each stratum. It ensures that subgroups important to the analysis are adequately represented — particularly useful when subgroups are small relative to the population. Proportionate stratified sampling maintains population proportions. Disproportionate stratified sampling over-samples small but important subgroups. Use stratified sampling when subgroup comparisons are a primary research objective.

Author

Dr. Rekha Khandelwal, a legal scholar and academic writing expert, is the founder of AspirixWriters. She has extensive experience in guiding students and researchers in writing research papers, theses, and dissertations with clarity and originality. Her work focuses on ethical AI-assisted writing, structured research, and making academic writing simple and effective for learners worldwide.

References

- Creswell, J. W., & Creswell, J. D. (2022). Research Design: Qualitative, Quantitative, and Mixed Methods Approaches (6th ed.). Sage.

- Patton, M. Q. (2015). Qualitative Research & Evaluation Methods: Integrating Theory and Practice (4th ed.). Sage.

- Braun, V., & Clarke, V. (2022). Thematic Analysis: A Practical Guide. Sage.

- Guest, G., Bunce, A., & Johnson, L. (2006). How many interviews are enough? Field Methods, 18(1), 59–82.

- Cohen, J. (1988). Statistical Power Analysis for the Behavioral Sciences (2nd ed.). Lawrence Erlbaum.

- G*Power: Free power analysis software.

- SCC Online — Supreme Court Cases database.

Next—Research Ethics in Practice: What Ethics Forms Don’t Tell You

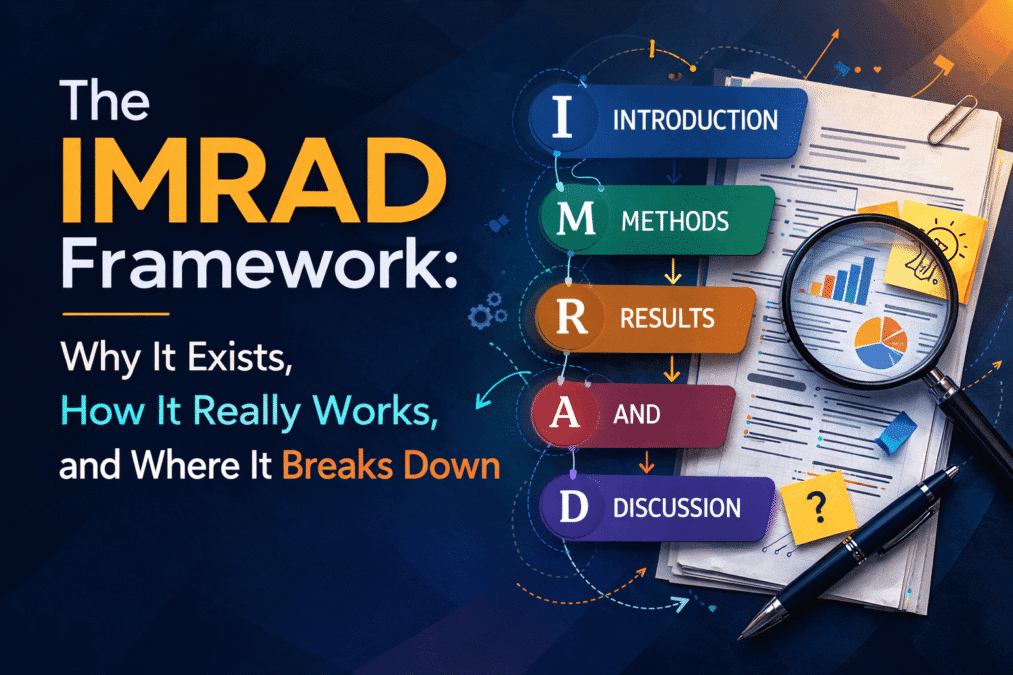

The Complete Guide to Research Paper Structure: IMRAD Format, Thesis Organization & Academic Writing (2026)

From Concept to Submission: A Complete Guide to Research Paper and Thesis Writing Academic Writing…

The IMRAD Framework: Why It Exists, How It Really Works, and Where It Breaks Down

The IMRAD Framework Understanding the Structure of Research Papers and Theses – Module 1: From Concept…

How to Write a Research Introduction That Reviewers Cannot Ignore

How to Write a Research Introduction Module 1: Understanding the Structure of Research Papers and…

How to Write a Methods Section That Reviewers Will Trust

How to Write a Methods Section Module 1: Understanding the Structure of Research Papers and…

The Results Section: How to Present Findings Without Letting Interpretation Slip In

The Results Section Module 1: Understanding the Structure of Research Papers and Theses From Concept…

The Discussion Section: How to Turn Findings Into Knowledge

The Discussion Section Module 1: Understanding the Structure of Research Papers and Theses From Concept…

Complete Thesis Structure: A Chapter-by-Chapter Guide

Complete Thesis Structure Module 1: Understanding the Structure of Research Papers and Theses From Concept…

10 Structural Mistakes That Get Research Papers Rejected — And How to Fix Every One

10 Structural Mistakes That Get Research Papers Rejected Module 1: Understanding the Structure of Research…

How to Write a Journal Abstract That Gets Your Paper Read

How to Write a Journal Abstract Module 1: Understanding the Structure of Research Papers and…

Systematic Review and PRISMA: How to Conduct and Report a Review That Meets Publication Standards

Systematic Review and PRISMA Module 1: Understanding the Structure of Research Papers and Theses From…

Legal Research Methods: A Complete Guide to Doctrinal, Empirical and Comparative Legal Research

Legal Research Methods Module 1: Understanding the Structure of Research Papers and Theses From Concept…

The Academic Writing Process: Complete Guide from First Draft to Submission (2026)

Module 2, Complete Guide: The Academic Writing Process – from First Draft to Submission From…

How to Start Writing and Keep Going

How to Start Writing Module 2: The Academic Writing Process From Concept to Submission Series …

How to Write Clear Engaging Academic Prose

How to Write Clear Engaging Academic Prose – Module 2: The Academic Writing Process From…

The Revision Process: How to Turn a Draft Into a Submission

The Revision Process Module 2: The Academic Writing Process From Concept to Submission Series | …