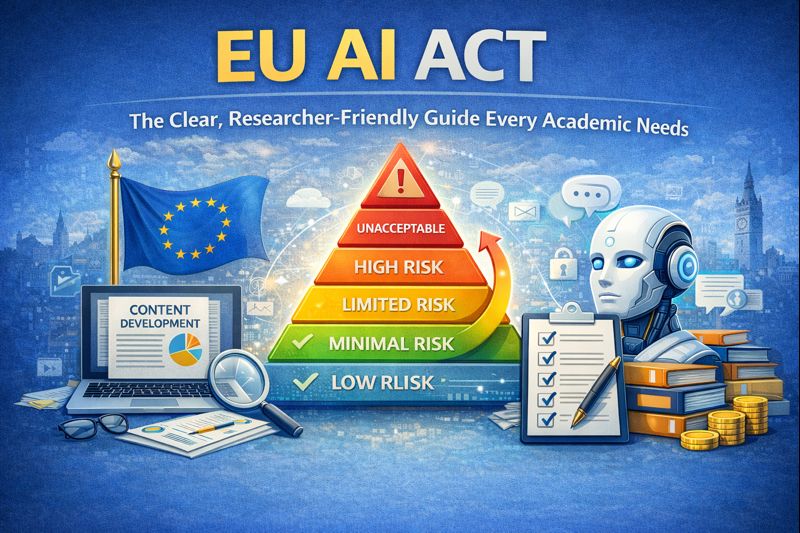

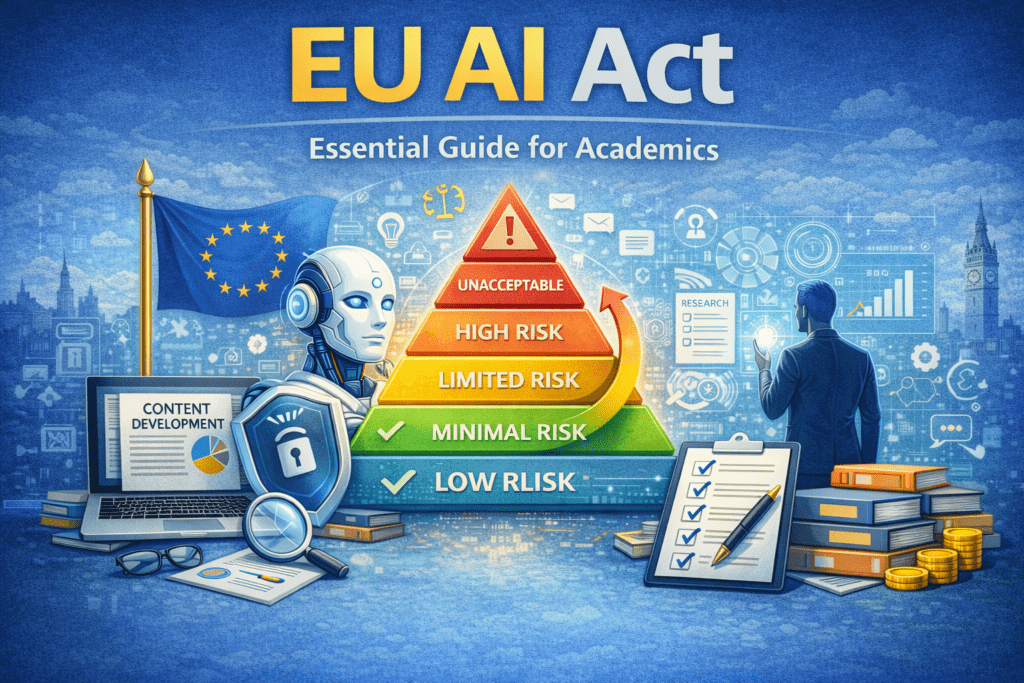

Why the EU AI Act 2026 Matters Now

The EU AI Act 2026 marks the first comprehensive legal framework in the world designed to regulate artificial intelligence based on risk. From academic researchers and PhD scholars to content creators and AI‑powered businesses, this regulation reshapes how AI tools can be developed, used, and trusted.

If you are using AI for research writing, literature reviews, content creation, or tool evaluation, this guide explains exactly what the EU AI Act means for you—without legal jargon, fear‑based headlines, or technical overload.

What Is the EU AI Act 2026?

The EU Artificial Intelligence Act (officially Regulation (EU) 2024/1689) introduces a risk‑based approach to AI regulation. Instead of banning AI broadly, it categorizes systems by the potential risk they pose to individuals, society, and fundamental rights.

In simple terms, the higher the risk, the stronger the legal obligations.

EU AI Act 2026 Risk Categories

Unacceptable Risk (Prohibited AI)

AI systems that threaten fundamental rights or democratic values.

Examples:

- Social scoring systems

- Real‑time remote biometric identification in publicly accessible spaces for law enforcement, outside narrowly defined exceptions

Status: Prohibited since 2 February 2025

High‑Risk AI Systems

AI used in sensitive decision‑making processes affecting people’s lives.

Examples:

- AI in recruitment and hiring

- Medical diagnosis and treatment support

- Credit scoring and loan approvals

- Biometric identification systems

Requirements:

- Risk management systems

- Human oversight

- Technical documentation and conformity assessments

Limited‑Risk AI (Transparency Required)

AI systems that interact directly with humans or generate synthetic content.

Examples:

- Chatbots (including generative AI tools)

- AI‑generated images, audio, or video

- AI‑assisted content writing tools

Requirement:

- Clear disclosure that AI was used

Minimal‑Risk AI (No New Obligations)

AI systems with minimal or no impact on fundamental rights.

Examples:

- Academic research tools

- Literature review and citation generators

- Grammar checkers and plagiarism detection tools

- AI‑assisted research blogs with verified references

Status: No mandatory compliance requirements

EU AI Act 2026 Timeline: Key Dates to Know

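- February 2025: Ban on unacceptable‑risk AI systems begins

- August 2025: Obligations for providers of general‑purpose AI (GPAI) models apply

- August 2026: Most remaining rules apply, including high‑risk obligations for Annex III systems and transparency rules for chatbots and generative AI

- August 2027: High‑risk obligations extend to AI embedded in regulated products, such as medical devices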

Current position (2026): Research and academic use cases remain low‑risk, but transparency is now a best practice.
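If you track several projects, the application dates can be encoded as data and checked against any date. This is a minimal Python sketch, assuming the application dates set out in Article 113 of Regulation (EU) 2024/1689; the milestone labels are simplified summaries written for this example, not legal text.

```python
from datetime import date

# Application dates per Article 113 of Regulation (EU) 2024/1689.
# Labels are simplified summaries for illustration, not legal wording.
MILESTONES = [
    (date(2025, 2, 2), "Prohibited AI practices banned; AI literacy duty applies"),
    (date(2025, 8, 2), "General-purpose AI (GPAI) model obligations apply"),
    (date(2026, 8, 2), "Most remaining rules apply, incl. Annex III high-risk and Article 50 transparency"),
    (date(2027, 8, 2), "High-risk rules for AI in regulated products (Annex I, e.g. medical devices) apply"),
]


def obligations_in_force(on: date) -> list[str]:
    """Return the labels of all milestones whose start date is on or before `on`."""
    return [label for start, label in MILESTONES if start <= on]


if __name__ == "__main__":
    for label in obligations_in_force(date(2026, 1, 15)):
        print("-", label)
```

Keeping the dates in one list means a single place to update if the Commission or legislators change the schedule.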

Who Is Affected by the EU AI Act?

The regulation applies if you:

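- Offer AI tools or services to users in the EU

- Deploy AI systems in the EU, or use AI outside the EU whose output is used in the EU (for example, AI‑assisted content published for EU readers)

- Use personal data of people in the EU to train AI (this is governed mainly by the GDPR, which applies alongside the AI Act)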

Impact on Indian Researchers & Content Creators

- Academic blogs and research articles → Minimal risk

- AI‑assisted content for EU clients → Limited risk (disclosure needed)

- AI tools for hiring, health, or admissions → High risk

Example:

An India‑based researcher publishing AI‑assisted literature reviews with proper citations faces no compliance burden.

EU AI Act Risk Classification Table

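| Risk Level | Use Case Examples | Compliance Requirement | Maximum Fine |
|---|---|---|---|
| Unacceptable | Social scoring, mass surveillance | Prohibited | Up to €35M or 7% of worldwide annual turnover |
| High Risk | Hiring, medical AI, credit scoring | Audits, governance, oversight | Up to €15M or 3% of worldwide annual turnover |
| Limited Risk | Chatbots, generative content | Transparency labels | Up to €15M or 3% of worldwide annual turnover |
| Minimal Risk | Research blogs, academic tools | None | Not applicable |

Each cap is whichever amount is higher; for SMEs and start‑ups, whichever is lower. Supplying incorrect or misleading information to authorities can be fined up to €7.5M or 1% of turnover.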
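To make the "fixed amount or percentage of turnover" rule concrete, here is a minimal Python sketch of the fine caps in Article 99 of Regulation (EU) 2024/1689. The tier keys are hypothetical labels chosen for this example. In the Act, breaches of the Article 50 transparency duties fall in the €15M / 3% tier, while €7.5M / 1% applies to supplying incorrect or misleading information to authorities. The function computes only the legal upper bound; actual fines are decided case by case.

```python
# Penalty tiers per Article 99 of Regulation (EU) 2024/1689:
# tier -> (fixed cap in EUR, percent of total worldwide annual turnover)
TIERS = {
    "prohibited_practice": (35_000_000, 7),
    "other_obligation": (15_000_000, 3),       # incl. high-risk and Article 50 transparency duties
    "misleading_information": (7_500_000, 1),  # incorrect or misleading info given to authorities
}


def max_fine_eur(tier: str, worldwide_turnover_eur: int, is_sme: bool = False) -> int:
    """Upper bound of the administrative fine for one infringement.

    Most undertakings face whichever figure is higher; SMEs and start-ups
    face whichever is lower.
    """
    fixed_cap, percent = TIERS[tier]
    # Integer arithmetic in whole euros avoids floating-point rounding.
    turnover_cap = worldwide_turnover_eur * percent // 100
    return min(fixed_cap, turnover_cap) if is_sme else max(fixed_cap, turnover_cap)


# A large provider with EUR 2 billion turnover vs. a small firm with EUR 5 million.
print(max_fine_eur("prohibited_practice", 2_000_000_000))           # 140000000
print(max_fine_eur("prohibited_practice", 5_000_000, is_sme=True))  # 350000
```

The comparison shows why headline figures mostly concern large providers: for a small firm the percentage cap, not the fixed amount, sets the ceiling.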

What Changes for Content Creators and Researchers?

For Academic Writers & Bloggers

- Continue publishing research‑based content

- Use AI for drafting, summarization, or outlining

- Maintain verified references

- Add a transparency disclosure when AI is used

Recommended disclosure: This article was developed with AI assistance and reviewed by a human author. Sources are independently verified.

For PhD Scholars and Funded Researchers

- Literature review tools remain minimal‑risk

- Ethics statements are increasingly expected

- EU‑funded projects may require AI transparency documentation

EU AI Act Penalties: Should You Be Concerned?

While the maximum penalties look high, the Act requires fines to be proportionate and to take the interests of SMEs and start‑ups into account.

- Large technology providers are the primary focus

- Small research blogs and academic creators are unlikely to face fines

- Losing access to the EU market can matter more than the fines themselves

Minimal‑risk research use cases carry no direct penalty exposure under the Act.

5‑Minute EU AI Act Compliance Checklist

- Identify AI tools used

- Classify risk level (minimal / limited / high)

- Add AI‑use disclosure where applicable

- Retain basic usage documentation

- Periodically review accuracy and bias
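The checklist above can be kept as a small inventory of the tools you use. This is a minimal Python sketch; the use‑case keys, the tier mapping, the 180‑day review interval, and the tool names are hypothetical and mirror the examples in this article rather than any official classification.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Hypothetical, simplified mapping from use case to risk tier.
# It mirrors this article's examples; it is NOT a legal classification.
USE_CASE_TIER = {
    "grammar_check": "minimal",
    "literature_review": "minimal",
    "chatbot": "limited",
    "generated_images": "limited",
    "published_ai_text": "limited",
    "hiring": "high",
    "medical_diagnosis": "high",
    "credit_scoring": "high",
}


@dataclass
class AIToolRecord:
    tool: str            # step 1: identify the tool
    use_case: str        # key into USE_CASE_TIER
    last_reviewed: date  # step 5: date of the last accuracy/bias review
    notes: str = ""      # step 4: basic usage documentation

    @property
    def tier(self) -> str:
        # Step 2: unlisted use cases are flagged for manual review, not guessed.
        return USE_CASE_TIER.get(self.use_case, "needs_review")

    @property
    def needs_disclosure(self) -> bool:
        # Step 3: disclose for limited, high, or unclassified uses.
        return self.tier != "minimal"

    def review_overdue(self, today: date, every_days: int = 180) -> bool:
        return today - self.last_reviewed > timedelta(days=every_days)


# Hypothetical tool names, for illustration only.
inventory = [
    AIToolRecord("ExampleGrammarTool", "grammar_check", date(2026, 1, 10)),
    AIToolRecord("ExampleChatbot", "chatbot", date(2025, 5, 1), notes="Drafting outlines"),
]
for rec in inventory:
    print(rec.tool, rec.tier, rec.needs_disclosure, rec.review_overdue(date(2026, 3, 1)))
```

Flagging unknown use cases as `needs_review` keeps the script from silently treating a new tool as minimal risk.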

Frequently Asked Questions (FAQs)

1. What is the EU AI Act in one sentence?

The EU AI Act is the European Union’s risk-based law that sets rules for the development, deployment, and use of AI systems to protect fundamental rights, safety, and transparency while enabling innovation.

2. Does the AI Act apply to university research and PhD projects?

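It can. AI systems and models developed and put into service solely for scientific research and development are exempt, as is research and testing before a system is placed on the market (testing in real‑world conditions excepted). Obligations can apply once research puts an AI system into service or onto the market in a regulated category (especially "high-risk" uses), or when results are offered or used in the EU market. Purely theoretical work that never produces or deploys regulated systems is unlikely to trigger obligations.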

4. When do the main obligations take effect?

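Most core obligations for high-risk AI systems listed in Annex III become enforceable on 2 August 2026, together with the transparency rules for chatbots and AI-generated content (Article 50). The prohibitions (2 February 2025) and general-purpose AI (GPAI) obligations (2 August 2025) started earlier, and high-risk rules for AI in regulated products such as medical devices follow on 2 August 2027. Researchers should act now to prepare.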

5. What practical steps should researchers take today?

Start with: (1) classify whether your AI is “high-risk”; (2) create a basic risk assessment and data governance checklist; (3) keep detailed documentation (design, datasets, testing); (4) document human oversight and model limitations; (5) consider registering or using a sandbox if appropriate. These steps reduce future compliance work.

6. Do I have to disclose my training data or model details?

It depends on your role. Providers of general-purpose AI models must keep technical documentation and publish a sufficiently detailed summary of the content used for training, and providers of high-risk systems must document their data governance. In practice this means documenting dataset sources, known biases, and limitations: not necessarily publishing raw proprietary data, but being transparent about provenance and risk.

7. Are there special rules for general-purpose AI models (GPAI)?

Yes — the Commission has published guidance and draft clarifications addressing GPAI obligations (transparency, risk assessment, and safeguards). Researchers using large pretrained models should track those guidance documents and note labeling/usage requirements.

8. What about privacy and personal data in research under the AI Act?

You must follow both the AI Act and data protection laws (e.g., GDPR). That means careful data minimization, lawful basis for processing, documentation of consent/permissions, and extra care when datasets include sensitive attributes. The EDPS has risk-management guidance useful for researchers.

9. Can universities use an “AI regulatory sandbox”?

Yes. The Act requires each Member State to have at least one AI regulatory sandbox operational by 2 August 2026. Sandboxes are controlled environments where researchers and innovators can test systems under regulator supervision with reduced immediate enforcement risk, which makes them well suited to academic pilots.

10. Will the AI Act stop academic freedom or open research?

No — the Act targets risky deployments and harmful uses, not basic academic inquiry. Still, researchers must be mindful when publishing models, datasets, or tools that could be misused or qualify as high-risk. Good documentation and ethical review reduce legal and reputational risk.

11. What records and documentation should I keep for reproducibility and compliance?

Keep: project scope, risk assessments, dataset inventory (sources, consent), data preprocessing steps, model architecture & training logs, evaluation metrics (incl. fairness tests), human oversight plans, and deployment notes. These serve both reproducibility and potential audits.
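The records listed above can be kept as a structured file and checked for gaps before an ethics review or audit. This is a minimal Python sketch; the field names and the example values are hypothetical placeholders, not an official EU documentation template.

```python
from dataclasses import dataclass, field, fields


@dataclass
class ProjectRecord:
    """Hypothetical record layout mirroring the documentation items above."""
    project_scope: str = ""
    risk_assessment: str = ""
    dataset_inventory: list[str] = field(default_factory=list)  # sources and consent basis
    preprocessing_steps: list[str] = field(default_factory=list)
    model_architecture: str = ""
    training_log_path: str = ""
    evaluation_metrics: dict[str, float] = field(default_factory=dict)  # incl. fairness tests
    human_oversight_plan: str = ""
    deployment_notes: str = ""


def missing_items(record: ProjectRecord) -> list[str]:
    """Return the names of fields that are still empty, i.e. documentation gaps."""
    return [f.name for f in fields(record) if not getattr(record, f.name)]


# Placeholder values for illustration only.
record = ProjectRecord(
    project_scope="Hypothetical pilot: classifying open-text survey responses",
    dataset_inventory=["survey_responses.csv (placeholder; consent: opt-in form)"],
)
print(missing_items(record))
```

Because empty strings, lists, and dicts are all falsy, one check covers every field type.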

12. If my project uses third-party APIs (e.g., LLMs), who is responsible?

Responsibility may be shared: model providers may have obligations (transparency, safety) and researchers/deployers also must ensure lawful and safe use. Contracts and supplier due diligence are important — check provider documentation and the AI Act obligations for deployers.

13. What are the likely penalties for non-compliance?

Fines can be significant for serious breaches (the Act provides for tiered administrative penalties, potentially up to a percentage of global revenue for businesses). For researchers, consequences are more likely institutional (ethics review failures, funding risks) unless deployment brings the research under commercial rules.

14. Where can researchers find official guidance and updates?

Main resources: the European Commission AI pages, the AI Act single information platform & Service Desk, EDPS guidance on risk management, and national regulator pages. Bookmark these and subscribe to updates because guidance is still evolving.

15. Quick checklist for an ethical, compliant research project (short version)

- Classify risk (is it high-risk?)

- Run a documented risk assessment

- Log datasets and consent details

- Test for bias/fairness and document results

- Plan human oversight and explainability

- Use sandboxes when available

- Keep reproducibility + compliance records

Conclusion: What the EU AI Act 2026 Really Means

For researchers, academic writers, and educators, the EU AI Act is not a barrier—it is a trust framework. It protects low‑risk academic use, encourages transparency, and strengthens ethical AI adoption.

For low‑risk academic work, adding a simple disclosure, maintaining source integrity, and following ethical research practices are generally enough to remain aligned with the Act.

This article is AI‑assisted and reviewed by a human author. All sources have been independently verified.

References: Official EU Sources

1. Artificial Intelligence Act (Official Law)

European Union. (2024). Regulation (EU) 2024/1689 of the European Parliament and of the Council laying down harmonised rules on artificial intelligence (Artificial Intelligence Act). EUR-Lex.

https://eur-lex.europa.eu/eli/reg/2024/1689/oj

2. AI Regulatory Framework

European Commission. (2024). Artificial intelligence: Regulatory framework. Shaping Europe’s Digital Future.

3. AI Act Legislative Overview

European Parliament. (2024). Regulation on artificial intelligence: Legislative train schedule. European Parliament.

4. European AI Office

European Commission. (2024). European AI Office. Shaping Europe’s Digital Future.

European AI Office | Shaping Europe’s digital future

Ethical Issues in AI: A Complete 2026 Guide

- Academic Writing

- AI Ethics & Future

- AI in Academic Research

- AI in Business & Marketing

- AI in Content Creation

- AI in Design & Development

- AI in Education/Teaching

- AI in Research

- AI Tools & Review

- Indian Laws

- Motivation

- SEO & Digital Marketing

- Writing & Content Creation

10 Structural Mistakes That Get Research Papers Rejected — And How to Fix Every One

Cluster Post 7 | Module 1: Understanding the Structure of Research Papers and Theses From…

7 Ways AI Is Revolutionizing Data Analysis in Academic Research

AI is revolutionizing data analysis in academic research by automating data cleaning, accelerating statistical analysis,…

Academic Writing Mastery: The Complete 2026 Guide to Research Papers & Thesis Writing

Academic Writing Mastery Your research deserves to be read. This complete guide transforms your ideas…

AI Chatbots Customer Support: 24/7 Help Guide

Ever lost sales because customer questions came at 2 AM? Many small businesses struggle to…

AI Ethics Checklist for PhD Research: A Practical Guide

A complete AI ethics checklist for PhD research. Learn how to use AI tools ethically,…

AI For Logo and Branding: Best Tools & Workflows 2026

Building a professional brand identity once required hiring designers, waiting weeks for revisions, and investing…

AI for Small Businesses: Practical Tools & Growth Guide

Running a small business today is both exciting and challenging. You may be managing customers,…

AI for UI/UX Design: Tools, Workflows, and Trends

User Interface (UI) and User Experience (UX) design play a critical role in the success…

AI in App Development: Tools, Use Cases & Trends (2026)

App development is no longer limited to experienced programmers or large teams. Today, AI in…

AI in Content Creation: 2026 Guide, Tools & Best Practices

Explore AI in content creation for 2026 — tools, workflows, benefits, real use cases, and…

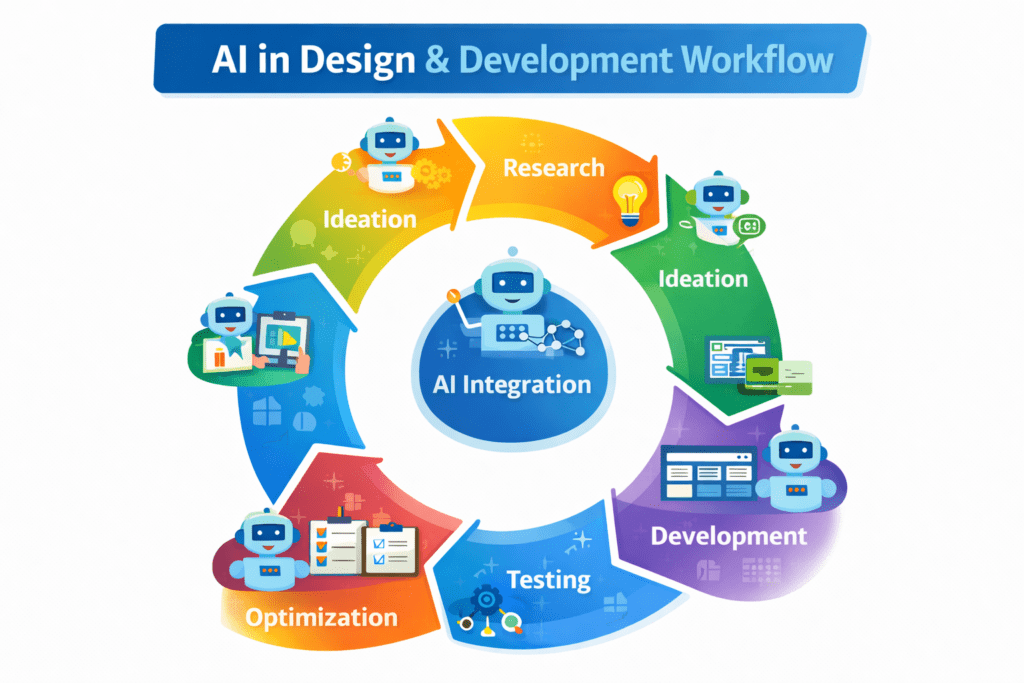

AI in Design & Development: Tools, Workflows, and Best Practices 2026

Artificial Intelligence is no longer a future concept.AI in Design & Development is already shaping…

AI in Digital Marketing: Trends Shaping Smarter Marketing

Digital marketing has changed dramatically in recent years, and AI in digital marketing is one…

AI in Education: How Artificial Intelligence Is Transforming Teaching and Learning 2026

Education is undergoing one of its most important transformations ever.AI in education is changing how…

AI in Email Marketing: Smarter Emails for Better Engagement in 2026

Email marketing remains one of the most reliable digital channels—but only when emails feel relevant….

AI in Graphic Design: Best Tools, Trends & Tips for 2026

Still designing everything manually in 2026?While you’re adjusting fonts and layers, AI in graphic design…

AI in Market Research: 2026 Trends, Tools & Practical Insights

AI in market research helps businesses analyze data faster, predict trends, and personalize strategies. Learn…

AI in Research: How Artificial Intelligence Is Transforming Academic Research 2026

AI in Research – Just Think About This… You’re buried under 5,000 research papers. Your…

AI in Sales Automation: Practical Guide & Trends

AI in sales automation helps teams save time, prioritize leads, and forecast pipelines accurately. Learn…

AI in Web Development: Tools, Use Cases & Best Practices

Learn how AI in web development improves coding, personalization, and performance. Explore tools, benefits, challenges,…

AI Tools & Reviews: Complete Guide for Smart Business Decisions (2026)

Best 10 AI Literature Review Tools for 2026: Save Hours on Research

Ever stared at a mountain of 500+ research papers, wondering where to even start? You’re…

Best 10 AI Tools in Web Development 2026

Top 10 AI Tools in Web Development You Should Use Today Smart AI Tools Helping…

Best AI App Development Tools for 2026

Discover the best AI app development tools for 2026. Compare Copilot, Lovable, Bubble, and more…

Best AI Tools Every Researcher Must Know in 2026

Just Think About This…A professor pulled me aside last week. “I need to buy AI…

Best AI Tools for Business & Marketing 2026

Best AI Tools for Business & Marketing 2026-What if you could run campaigns, write content,…