Artificial Intelligence is no longer a “future technology.”

It is already deciding who gets a job interview, which loan applications are approved, how diseases are diagnosed, and even what news or posts you see online.

That sounds impressive—but it also raises a challenging question:

Who is responsible when AI makes the wrong decision?

In 2026, this question is no longer theoretical. It affects real people, real businesses, and real lives. Let’s explore the ethical issues in AI in a clear, practical, and easy-to-understand way.

Why Ethical Issues in AI Matter More Than Ever

AI adoption has grown at record speed. By 2024, nearly 78% of companies worldwide were using AI, yet fewer than half of users trusted AI systems with their personal data.

At the same time:

- AI-related privacy and security incidents increased by over 50% in one year

- Lawsuits involving AI hiring tools reached the courts

- Governments introduced strict AI regulations with heavy penalties

This gap between rapid AI growth and public trust is exactly why AI ethics matters today.

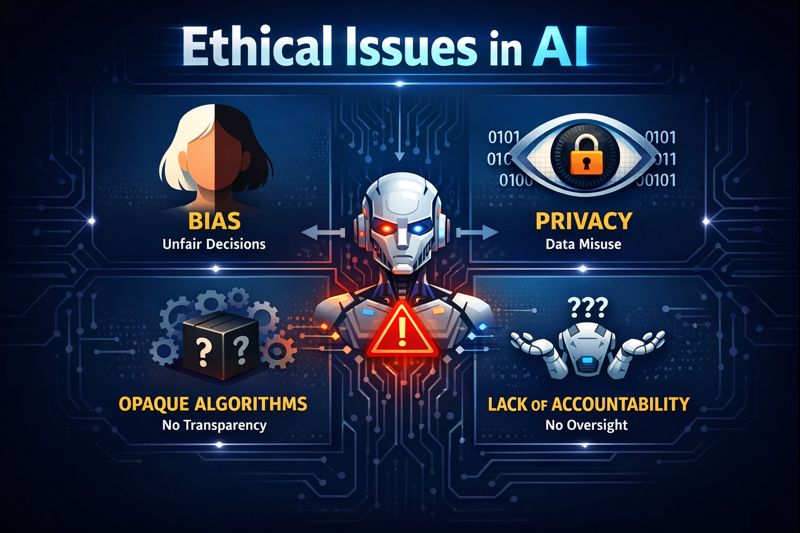

What Are Ethical Issues in AI?

Ethical issues in AI refer to the moral and social problems that arise when artificial intelligence systems impact people’s rights, privacy, fairness, and safety. These issues include bias, lack of transparency, misuse of personal data, weak accountability, and harm caused by automated decisions.

The Five Biggest Ethical Issues in AI

1. Bias and Discrimination: When AI Learns Our Mistakes

AI systems learn from historical data.

The problem? Human history is biased.

If biased data is used to train AI, the system doesn’t fix discrimination—it automates and amplifies it.

Real-world examples:

- AI hiring tools rejecting candidates based on age, gender, or race

- Resume screeners showing near-zero selection rates for certain name groups

- Healthcare algorithms delivering worse outcomes for minority patients

In one major case, a U.S. court ruled that discrimination caused by AI is legally equivalent to discrimination by a human. The implication is clear: using an algorithm does not shield an organization from liability.

Key takeaway: AI can scale inequality faster than humans if ethics are ignored.
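
To make the "selection rate" idea above concrete, here is a minimal Python sketch of one common way to check a screening tool for uneven outcomes. It compares each group's selection rate and applies the four-fifths rule of thumb used in U.S. employment practice. The group labels, the sample data, and the function names are all made up for illustration; a real audit would need proper statistics and legal review.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the fraction of selected candidates per group.

    `records` is an iterable of (group, selected) pairs. This input
    shape is an assumption made for the sketch.
    """
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in records:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Lowest group selection rate divided by the highest one."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected, so there is no gap to measure
    return min(rates.values()) / highest


# Made-up screening outcomes: (group, passed_screen)
records = (
    [("A", True)] * 40 + [("A", False)] * 60
    + [("B", True)] * 10 + [("B", False)] * 90
)

rates = selection_rates(records)
ratio = disparate_impact_ratio(rates)
flagged = ratio < 0.8  # four-fifths rule of thumb: below 0.8 deserves a closer look

print(rates, ratio, flagged)
```

A low ratio does not prove discrimination on its own, but it is a cheap signal that the tool needs a closer human look.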

2. Privacy Concerns: Your Data, Used Without Clear Consent

AI systems require massive amounts of data to function. Much of this data comes from:

- Online activity

- Public content scraping

- User behavior tracking

Often, people never explicitly agree to their data being used for AI training.

By 2026:

- Over 40% of organizations reported AI-related privacy incidents

- Governments introduced strict rules to limit facial recognition, data scraping, and surveillance AI

- The number of data protection and AI laws has increased four-fold since 2016

Key takeaway: Traditional privacy rules are not enough for AI-driven systems.
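
One practical response to the consent problem described above is to filter data before it ever reaches a training pipeline. The sketch below is only an illustration: the `UserRecord` fields and the `consented_to_training` flag are hypothetical, and real consent handling depends on the laws that apply to you.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UserRecord:
    # Hypothetical fields, chosen only for this example
    user_id: str
    email: str
    page_views: int
    consented_to_training: bool


def training_view(records):
    """Yield only consented records, stripped of direct identifiers."""
    for record in records:
        if record.consented_to_training:
            # Data minimization: keep only what the model needs
            yield {"page_views": record.page_views}


users = [
    UserRecord("u1", "a@example.com", 12, True),
    UserRecord("u2", "b@example.com", 30, False),
]
training_rows = list(training_view(users))
print(training_rows)
```

Only the record with explicit consent survives, and it no longer carries a user ID or email address.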

3. The Black Box Problem: “Why Did the AI Decide This?”

Many advanced AI models work like a black box:

- They provide decisions

- But cannot clearly explain how they reached them

This becomes dangerous when AI:

- Rejects loan applications

- Screens job candidates

- Assesses criminal risk

- Influences medical decisions

As of 2026, regulators require explainable AI for high-risk applications. People have the right to understand decisions that affect their lives.

Key takeaway: If AI can’t explain itself, it shouldn’t decide your future.
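
What does an "explainable" decision look like in practice? The simplest case is a model that is transparent by design. The Python sketch below uses a made-up linear scoring model for a loan decision, where each feature's contribution is just its weight times its value. The feature names, weights, and threshold are invented for illustration, and complex black-box models need other explanation techniques that this sketch does not cover.

```python
# Hypothetical weights for an illustrative loan-scoring model
WEIGHTS = {"income_k": 0.04, "years_employed": 0.3, "missed_payments": -1.5}
BIAS = -2.0
THRESHOLD = 0.0


def explain_decision(applicant):
    """Score an applicant and list each feature's contribution."""
    contributions = {name: WEIGHTS[name] * applicant[name] for name in WEIGHTS}
    score = BIAS + sum(contributions.values())
    # Largest absolute contribution first, so the main reasons come out on top
    ranked = sorted(contributions.items(), key=lambda item: abs(item[1]), reverse=True)
    return {"approved": score >= THRESHOLD, "score": score, "reasons": ranked}


result = explain_decision(
    {"income_k": 50, "years_employed": 2, "missed_payments": 1}
)
print(result["approved"])
for feature, contribution in result["reasons"]:
    print(f"{feature}: {contribution:+.2f}")
```

Because every contribution is visible, a rejected applicant can be told which factors counted for and against them.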

4. Accountability Gaps: Who Is Responsible When AI Fails?

When an AI system causes harm, responsibility becomes blurry:

- Is it the developer?

- The company using the AI?

- The data provider?

- The algorithm itself?

Traditional governance models often fall short because AI systems keep changing as they are retrained and updated, so a one-time review quickly goes out of date.

Leading organizations now use:

- Continuous monitoring

- Human oversight

- Real-time ethical risk assessment

This approach is called adaptive AI governance.

Key takeaway: Ethical AI needs ongoing human responsibility, not one-time policies.
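
Continuous monitoring can start small. The Python sketch below tracks the approval rate over a rolling window of recent decisions and raises a flag for human review when that rate drifts too far from an agreed baseline. The class name, the 10-point tolerance, and the window size are assumptions chosen for this example, not a standard.

```python
from collections import deque


class ApprovalRateMonitor:
    """Flag drift in the approval rate over the most recent decisions."""

    def __init__(self, baseline_rate, tolerance=0.10, window=100):
        self.baseline_rate = baseline_rate
        self.tolerance = tolerance
        self.recent = deque(maxlen=window)  # oldest decisions fall off automatically

    def record(self, approved):
        self.recent.append(bool(approved))

    def current_rate(self):
        if not self.recent:
            return None
        return sum(self.recent) / len(self.recent)

    def needs_human_review(self):
        # Wait until the window is full to avoid noisy early alerts
        if len(self.recent) < self.recent.maxlen:
            return False
        return abs(self.current_rate() - self.baseline_rate) > self.tolerance


monitor = ApprovalRateMonitor(baseline_rate=0.5, tolerance=0.1, window=10)
for approved in [True] * 5 + [False] * 5:
    monitor.record(approved)
stable = monitor.needs_human_review()

for _ in range(4):
    monitor.record(False)  # a run of rejections pushes the rate down
drifted = monitor.needs_human_review()

print(stable, drifted)
```

The same pattern can be run per group, which turns a one-time bias audit into an ongoing check.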

5. Innovation vs Ethics: Do We Have to Choose?

For years, tech culture followed the idea of “move fast and break things.”

In AI, that approach has proven dangerous.

In 2026, a new mindset is emerging:

“Build fast—but verify and explain.”

Ethical goals often pull against each other:

- Transparency vs privacy

- Fairness vs data minimization

- Speed vs safety

There is no perfect solution—only responsible trade-offs guided by human values.

Key takeaway: Ethical AI is not anti-innovation—it is sustainable innovation.

How Ethical AI Works (Step-by-Step)

- Define ethical risk level (low, medium, high impact)

- Audit training data for bias and imbalance

- Build explainability tools into AI models

- Test AI decisions on diverse groups

- Monitor real-world performance continuously

- Ensure human review for high-risk decisions
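
The first and last steps above (define a risk level, then ensure human review for high-risk decisions) can be sketched in a few lines of Python. The use-case names, the risk tiers assigned to them, and the 0.9 confidence cut-off are hypothetical; each organization would define its own.

```python
from enum import Enum


class RiskLevel(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


# Hypothetical mapping of use cases to impact tiers
USE_CASE_RISK = {
    "playlist_recommendation": RiskLevel.LOW,
    "support_ticket_triage": RiskLevel.MEDIUM,
    "loan_approval": RiskLevel.HIGH,
    "resume_screening": RiskLevel.HIGH,
}


def route_decision(use_case, model_confidence, min_confidence=0.9):
    """Decide whether an AI output can be applied automatically."""
    # Unknown use cases are treated as high risk until someone classifies them
    risk = USE_CASE_RISK.get(use_case, RiskLevel.HIGH)
    if risk is RiskLevel.HIGH:
        return "human_review"
    if risk is RiskLevel.MEDIUM and model_confidence < min_confidence:
        return "human_review"
    return "automated"


print(route_decision("loan_approval", 0.99))
print(route_decision("support_ticket_triage", 0.95))
```

Defaulting unknown use cases to high risk is a deliberate design choice here: it is safer to over-review than to automate something nobody has assessed.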

Benefits of Ethical AI

- Builds public trust

- Reduces legal and regulatory risk

- Improves fairness and inclusion

- Strengthens brand credibility

- Enables long-term innovation

Challenges and Limitations

- Ethical standards vary by culture and region

- Full transparency is technically difficult

- Trade-offs between accuracy and fairness

- High cost of audits and governance tools

- Rapidly changing regulations

Being ethical is harder—but ignoring ethics is far more expensive.

Best Practices for Ethical AI in 2026

- Use diverse and representative training data

- Conduct regular bias and privacy audits

- Document AI decisions clearly

- Keep humans involved in critical decisions

- Align AI systems with local and global laws

FAQs: Ethical Issues in AI

What are the main ethical issues in AI?

Ethical issues in AI include bias, discrimination, privacy violations, lack of transparency, accountability gaps, and misuse of automated decision-making. These problems arise when AI systems impact people without sufficient safeguards or human oversight.

Why is bias a serious ethical issue in AI?

AI bias occurs when systems learn from unfair or incomplete data. This can lead to discrimination in hiring, healthcare, finance, and law enforcement, affecting millions of people, often without anyone noticing.

How does AI threaten data privacy?

AI often relies on large datasets collected from online behavior and public sources. Without strong safeguards, personal data can be misused, exposed, or used without informed consent.

What is explainable AI and why does it matter?

Explainable AI allows humans to understand how and why an AI system makes decisions. It is critical for trust, fairness, and legal compliance, especially in high-impact areas like hiring or credit approval.

Are governments regulating ethical issues in AI?

Yes. Governments worldwide have introduced strict AI laws focusing on transparency, privacy, and accountability. These regulations aim to protect individuals while allowing responsible innovation.

Conclusion: Why Ethical AI Is Everyone’s Responsibility

Ethical issues in AI are no longer optional discussions for researchers—they are real-world challenges shaping our future.

The goal is not to slow innovation, but to ensure AI:

- Respects human rights

- Works fairly

- Remains understandable

- Serves society rather than harming it

The decisions made today will define how AI affects lives tomorrow.

Ethical AI isn’t about fear—it’s about responsibility.

Best AI Tools for Business & Marketing 2026

- Academic Writing

- AI Ethics & Future

- AI in Academic Research

- AI in Business & Marketing

- AI in Content Creation

- AI in Design & Development

- AI in Education/Teaching

- AI in Research

- AI Tools & Review

- Indian Laws

- Motivation

- SEO & Digital Marketing

- Writing & Content Creation

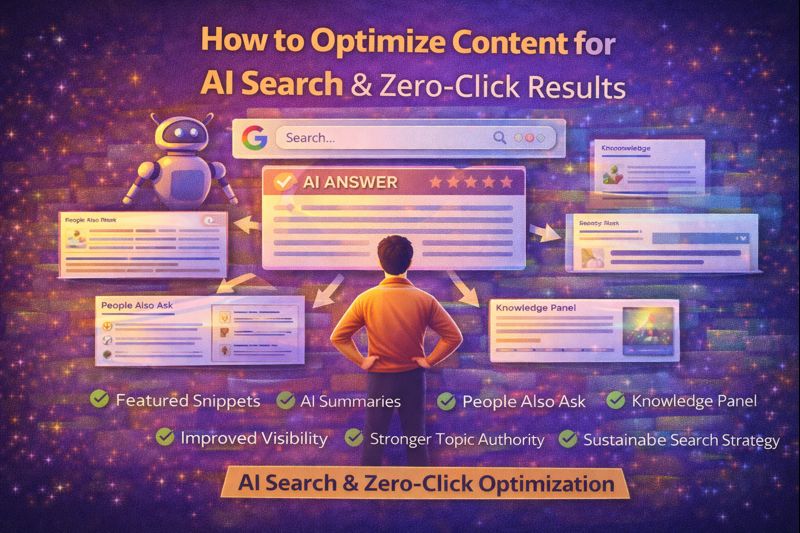

How to Optimize Content for AI Search & Zero-Click Results Smartly

Introduction Search results are no longer limited to blue links. Today, users often find answers…

How Content Marketing Helps You Monetize Blogs with AdSense (2026)

Introduction Many bloggers struggle with a common problem: their blog gets content published, but income…

Content Marketing Success Using Google Analytics & Search Console

Introduction Creating content is only half the work. Without measurement, even high-quality content becomes guesswork….

Low-Competition Keyword Research for Content Marketing (Step-by-Step)

low-competition keyword research for content marketing – One of the biggest mistakes content creators make…

Internal Linking Strategy for Content Marketing: Boost SEO Rankings

Introduction Many websites focus heavily on publishing new content but overlook one of the most…

Search Intent Strategy in Content Marketing: Rank What Users Want

Introduction One of the biggest reasons content fails to rank is not poor writing or…

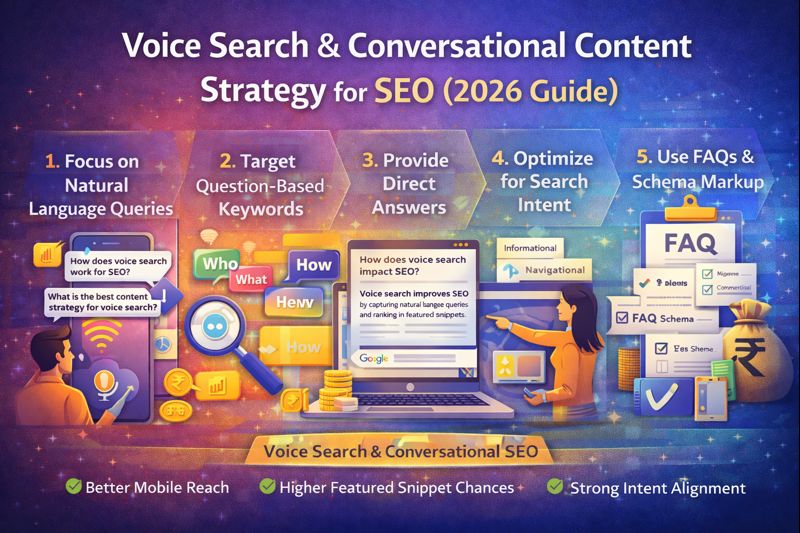

Voice Search & Conversational Content Strategy for SEO (2026 Guide)

Voice Search & Conversational Content Strategy – Search behavior has changed dramatically. People no longer…

Topic Cluster Strategy: How to Build Topical Authority with Content Marketing

Introduction Many websites publish high-quality content regularly, yet struggle to rank consistently on Google. The…

Evergreen vs Trending Content Strategy: What Works Better for SEO?

Introduction Content creators often face a common dilemma:Should you focus on evergreen content that lasts…

Content Repurposing Strategy for SEO: Boost Traffic Without Writing More

Introduction Creating high-quality content takes time, effort, and research. Yet many websites publish a post…

What Is Generative SEO and How Content Marketers Can Use It in 2026

Introduction What Is Generative SEO – Search engines are changing faster than ever. Traditional SEO…

Content Marketing Strategy That Ranks on Google (Complete Guide)

How to Build a Content Marketing Strategy That Ranks on Google Many websites publish content…