Last Updated: April 15, 2026

What Is the EU AI Act? (In Simple Terms)

Imagine you build a robot that helps doctors diagnose diseases. Or you create an AI tool that scores job applicants. Or you develop a chatbot that talks to students.

Each of these AI systems could seriously affect people’s lives. What if the robot gives wrong diagnoses? What if the hiring AI is biased against women? What if the chatbot gives harmful advice?

The EU AI Act is a law passed by the European Union that says: if you build AI that could harm people, you must follow rules to make it safe, fair, and transparent.

It officially became law in August 2024 — making it the world’s first comprehensive legal framework for artificial intelligence.

Think of it like a safety certificate system. Just as cars need to pass safety tests before going on the road, AI systems now need to meet certain standards before being used in the EU.

“AI regulation is not a barrier; it’s a framework for responsible innovation.”

The goal is not to stop AI development. It is to make sure AI that affects people’s health, rights, jobs, or safety is built and tested responsibly.

Who Does It Apply To?

This is one of the most common questions — especially for researchers and developers outside Europe.

The EU AI Act applies to you if:

- You build or sell an AI system that will be used in Europe

- You use an AI system in your work within the EU

- You research AI at a European university or institution

- You collaborate on EU-funded projects that involve AI

- You are an Indian researcher whose AI system will be tested, published, or deployed in a European context

Simple rule: If your AI affects people in the EU — no matter where you built it — the law likely applies.

This is important for Indian researchers and startups. If you are working with a German university, applying for Horizon Europe funding, or building a product for European users, you fall within the scope of this law.

The Risk Levels — How AI Is Classified

The EU AI Act does not treat all AI the same way. It uses a simple “traffic light” system based on how much risk the AI poses to people.

🔴 Level 1 — Completely Banned (Unacceptable Risk)

Some AI is banned outright. These are the most harmful uses of AI. Examples include:

- AI that manipulates people without them knowing (e.g., hidden persuasion techniques)

- AI that gives social scores to citizens based on their behaviour (like a government grading people on how “good” they are)

- AI that identifies faces in real-time in public spaces for law enforcement

- AI that predicts crimes based only on someone’s profile — without actual evidence

- AI that infers emotions in schools or workplaces

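Think of this as the “absolutely never” category. A few of these bans (such as real-time biometric identification and emotion inference) carry narrow, explicitly listed exceptions, but there is no general way to apply for special permission.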

🟠 Level 2 — High Risk (Allowed, But With Strict Rules)

These AI systems are allowed — but only if they meet a detailed set of safety and transparency requirements before they are released.

High-risk AI is used in areas where a mistake could seriously harm people, such as:

| Area | Example |
|---|---|
| Education | AI that scores student exams or decides who gets into university |
| Healthcare | AI that helps diagnose illnesses or recommend treatments |
| Employment | AI that screens job applications or monitors workers |
| Credit and Banking | AI that decides whether someone gets a loan |
| Law Enforcement | AI that assesses criminal risk or analyses evidence |
| Border Control | AI that verifies identity at borders |

If your research involves building AI in any of these areas — even for academic purposes — it may fall into the high-risk category.

🟡 Level 3 — Limited Risk (Transparency Required)

These AI systems are allowed with minimal requirements. The main rule is:

Tell people they are talking to AI, and label AI-generated content as such.

Examples: chatbots, AI-generated text or images, emotion-detecting software in some contexts.

🟢 Level 4 — Minimal Risk (No Special Rules)

The majority of AI falls here. Spam filters, recommendation systems in games, weather prediction AI — these face no mandatory requirements under the EU AI Act.

Compliance Timeline: What Happens When

The EU AI Act does not require everyone to comply immediately. It is being phased in gradually to give organisations time to adapt.

| Date | What Happens | Who Is Affected |
|---|---|---|
| August 2024 | The law officially comes into force | Everyone: the clock starts ticking |
| February 2025 | The ban on prohibited AI becomes enforceable | All AI developers: banned AI must stop |
| August 2025 | Rules for large AI models (GPAI) take effect | Developers of large language models and similar |
| August 2026 | Full rules for high-risk AI become enforceable | Researchers and developers of high-risk systems |
| August 2027 | Rules for AI built into regulated products fully apply | Developers of AI in medical devices, machinery, etc. |

The most important dates for most researchers:

- February 2025 — if your research involves any of the banned AI practices, they must already be stopped or redesigned

- August 2026 — if you are building high-risk AI, full compliance is required from this date
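For project planning, the phased dates above can be encoded as a small lookup. This is a minimal sketch: the function name and labels are illustrative, and because the table gives only months, the exact days used here are assumptions that should be checked against the official text.

```python
from datetime import date

# Milestones from the timeline table. The day-of-month values are assumptions
# to verify against the official text; the table itself only gives months.
MILESTONES = [
    (date(2024, 8, 1), "Act in force"),
    (date(2025, 2, 2), "Ban on prohibited AI enforceable"),
    (date(2025, 8, 2), "GPAI model rules apply"),
    (date(2026, 8, 2), "High-risk AI rules enforceable"),
    (date(2027, 8, 2), "Rules for AI built into regulated products apply"),
]


def obligations_in_force(on):
    """Return the labels of every milestone that has started by the date `on`."""
    return [label for start, label in MILESTONES if on >= start]


for label in obligations_in_force(date(2026, 4, 15)):
    print(label)
```

Keeping the milestones in one list means a changed date only has to be corrected in one place.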

High-Risk AI — What Researchers Must Know

If your AI system falls into the high-risk category, you cannot simply build it and release it. You must follow a specific set of rules.

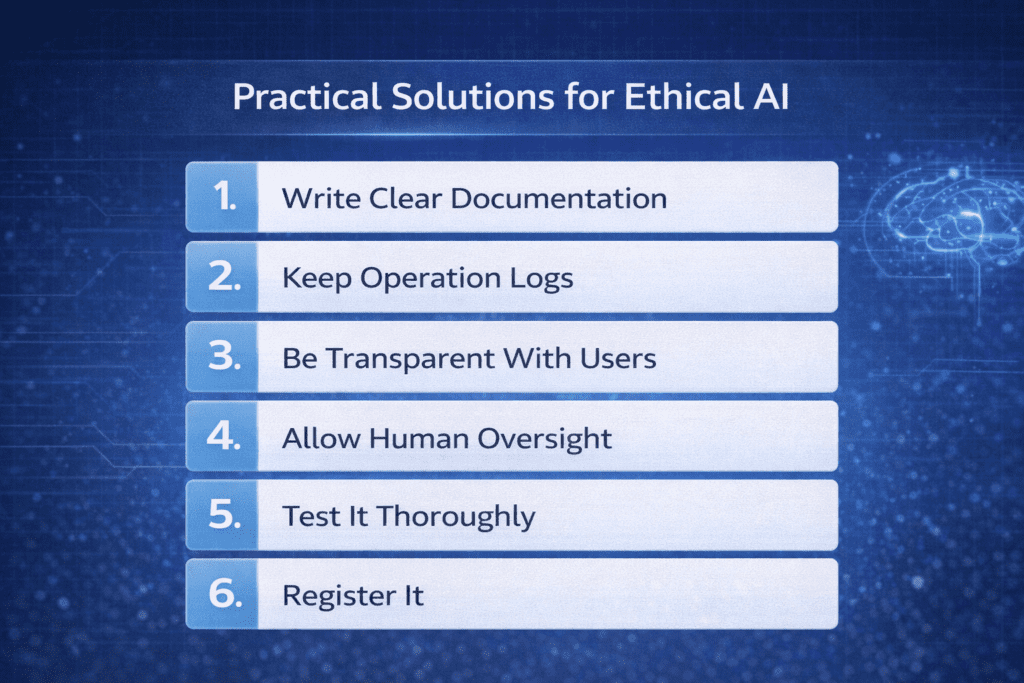

Here is what high-risk AI compliance requires — explained as simply as possible:

1. Write Clear Documentation

You need to write down — in detail — what your AI does, how it was trained, what data was used, and what its limitations are. Think of this as a “birth certificate” for your AI system.

A researcher building a student assessment AI would document:

- What the AI was trained to evaluate

- What data (student essays, grades) was used in training

- How accurate it is — including where it performs less well

- What happens when the AI is uncertain or makes a mistake
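One way to keep this documentation honest is to store it as structured data and check it for gaps. The sketch below is only an illustration: the class and field names are hypothetical, not a format the Act prescribes.

```python
from dataclasses import dataclass, field, asdict


@dataclass
class DocumentationRecord:
    """Hypothetical structure mirroring the four documentation points above."""
    purpose: str                 # what the AI was trained to evaluate
    training_data: str           # what data was used in training
    accuracy_notes: str          # how accurate it is, including weak spots
    failure_handling: str        # what happens when the AI is uncertain or wrong
    known_limitations: list = field(default_factory=list)

    def missing_fields(self):
        """Return the names of fields that are still empty."""
        return [name for name, value in asdict(self).items() if not value]


record = DocumentationRecord(
    purpose="Score argumentative student essays",
    training_data="Past student essays and grades",
    accuracy_notes="Lower accuracy on very short essays",
    failure_handling="",  # not written yet
)
print(record.missing_fields())  # ['failure_handling', 'known_limitations']
```

Running the gap check as part of a regular project review makes it harder for a section to stay empty until the end.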

2. Keep Operation Logs

Your AI system must automatically record what it does — like a diary. If something goes wrong later, there is a record of what happened and when.
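A simple way to approximate this "diary" is an append-only log with one JSON record per decision. The following is a minimal sketch using only the Python standard library; the field names and the file layout are assumptions, not a format required by the Act.

```python
import json
from datetime import datetime, timezone


def make_log_entry(system_name, model_version, input_id, output):
    """Build one JSON log line describing a single AI decision."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "system": system_name,
        "model_version": model_version,
        "input_id": input_id,  # a reference to the input, not the raw personal data
        "output": output,
    }
    return json.dumps(entry, sort_keys=True)


def append_to_log(path, line):
    """Append the entry to a JSON Lines file, one decision per line."""
    with open(path, "a", encoding="utf-8") as log_file:
        log_file.write(line + "\n")


line = make_log_entry("essay-scorer", "0.3.1", "essay-001", {"score": 72})
print(line)
```

Logging an input identifier rather than the input itself keeps the log useful for reconstruction while limiting the personal data it holds.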

3. Be Transparent With Users

People who use your AI — or are affected by it — must be told:

- What the AI can and cannot do

- What its known limitations are

- That they have the right to ask for human review

4. Allow Human Oversight

You must design your AI so that a human can watch, understand, and if necessary — stop or override what the AI is doing. The human must always be able to “take back control.”
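In code, "taking back control" can be as simple as making sure a human decision always wins over the AI output, and that a halt switch suppresses the output entirely. This is an illustrative sketch with hypothetical names, not a prescribed design.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Decision:
    """Holds the AI output plus any human intervention on it."""
    ai_output: str
    human_output: Optional[str] = None  # set when a reviewer overrides the AI
    halted: bool = False                # set when a reviewer stops the system

    @property
    def final(self):
        """Return the decision that should actually take effect."""
        if self.halted:
            return None  # nothing is released while the system is stopped
        if self.human_output is not None:
            return self.human_output
        return self.ai_output


decision = Decision(ai_output="reject")
decision.human_output = "accept"  # reviewer overrides the AI
print(decision.final)  # accept
```

The AI output is kept alongside the override rather than replaced, so the record still shows what the system originally proposed.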

5. Test It Thoroughly

Before your AI is used by anyone, you must test it rigorously for accuracy, consistency, and safety — especially across different demographic groups to check for bias.
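Checking for bias usually starts with computing the same metric separately for each group instead of only overall. Here is a minimal sketch of that idea; the group labels and records are made-up illustration data, and accuracy is only one of several metrics worth comparing.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """records: iterable of (group, predicted_label, true_label) tuples."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, predicted, actual in records:
        total[group] += 1
        if predicted == actual:
            correct[group] += 1
    return {group: correct[group] / total[group] for group in total}


def largest_gap(per_group_accuracy):
    """Difference between the best and worst performing group."""
    values = per_group_accuracy.values()
    return max(values) - min(values)


# Made-up evaluation records, for illustration only.
results = [
    ("native", "pass", "pass"), ("native", "fail", "fail"),
    ("native", "pass", "pass"), ("native", "fail", "fail"),
    ("non_native", "pass", "pass"), ("non_native", "fail", "pass"),
    ("non_native", "pass", "pass"), ("non_native", "fail", "fail"),
]
per_group = accuracy_by_group(results)
print(per_group, largest_gap(per_group))
```

A model can look accurate overall while one group carries most of the errors, which is why the gap is reported next to the per-group numbers.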

6. Register It

High-risk AI systems must be registered in an official EU database before they are deployed.

GPAI Models — The Rules for Large AI Systems

GPAI stands for General Purpose AI. These are large, powerful AI models that can do many different tasks — think of systems like ChatGPT, Gemini, or Llama. They are trained on enormous amounts of data and used as the foundation for many other AI applications.

From August 2025, the EU AI Act applies specific rules to GPAI models.

What GPAI Providers Must Do

All GPAI providers must:

- Keep detailed technical documentation — how the model was trained, what data was used, how it was evaluated, and what its known limitations are

- Publish a summary of training data, with enough detail that people whose content was used can recognise this and, where relevant, exercise their rights

- Have a clear policy for respecting copyright in training data

For Very Powerful GPAI Models (Systemic Risk)

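Models above a capability threshold face additional requirements. The Act presumes a model falls into this group when the cumulative compute used to train it exceeds 10^25 floating-point operations: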

- Adversarial testing — deliberately trying to break or fool the model to find vulnerabilities

- Reporting serious incidents — if the model causes or is involved in a significant harm, this must be reported to the EU AI Office promptly

- Cybersecurity measures to protect the model from misuse
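The Act presumes systemic risk when cumulative training compute exceeds 10^25 floating-point operations. A rough self-check might look like the sketch below. Note the assumption: the "6 × parameters × tokens" estimate is a common rule of thumb for dense transformer training, not a method the Act prescribes, and the function names are illustrative.

```python
SYSTEMIC_RISK_FLOP_THRESHOLD = 1e25  # presumption threshold for training compute


def estimate_training_flops(n_parameters, n_training_tokens):
    """Rule-of-thumb estimate (an assumption, not part of the Act)."""
    return 6.0 * n_parameters * n_training_tokens


def presumed_systemic_risk(training_flops):
    """True if the compute estimate exceeds the presumption threshold."""
    return training_flops > SYSTEMIC_RISK_FLOP_THRESHOLD


# Hypothetical model: 7 billion parameters trained on 2 trillion tokens.
flops = estimate_training_flops(7e9, 2e12)
print(presumed_systemic_risk(flops))  # False
```

Most academic fine-tuning runs sit far below this threshold, but the obligations of the base model's provider still matter for anything built on top of it.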

What This Means for PhD Researchers Working With GPAI

If you are a PhD student or postdoctoral researcher who fine-tunes or builds on a GPAI model, good practice — and increasingly expected practice in EU research contexts — includes documenting:

- Which base model you used (name, version)

- How you fine-tuned it and with what data

- What the resulting system is intended to do

- Any testing you conducted for safety, bias, or accuracy

- Known limitations of your adapted model

Prohibited AI — What Is Completely Banned

The banned practices section is critical for academic researchers to understand — especially those working with human subjects or sensitive AI applications.

Here is each ban explained in simple language:

Ban 1: Hidden Manipulation

What it means: You cannot build AI that secretly influences people’s behaviour in harmful ways — below their conscious awareness.

For researchers: Any study involving persuasive AI, nudging systems, or behavioural influence technology must be carefully designed to ensure informed consent and cannot cross into harmful manipulation.

Ban 2: Exploiting Vulnerable People

What it means: AI that targets people in vulnerable situations — those with mental health challenges, elderly individuals, children — to exploit their weaknesses or distort their decisions is banned.

Ban 3: Social Scoring Systems

What it means: No AI system can be used by governments or public authorities to give citizens a “score” based on their behaviour that then leads to them being treated differently or unfairly.

For researchers: Academic study of how social scoring systems work is not banned — but building and deploying a functional social scoring system, even as a research prototype, enters very sensitive legal territory.

Ban 4: Real-Time Biometric Identification in Public

What it means: AI that identifies people by their faces (or other biometric characteristics) in real-time in publicly accessible spaces — for law enforcement purposes — is banned with very narrow exceptions.

For researchers: This specifically targets law enforcement deployment. But researchers working on facial recognition or biometric AI tested in public spaces need to be very careful about how their work is scoped and documented.

Ban 5: Emotion Detection in Schools and Workplaces

What it means: AI that infers how people are feeling in educational or employment settings is banned (with limited exceptions).

For researchers: Studying AI emotion detection technology academically is different from deploying it on real students or employees. Keep the distinction clear in your research design.

Ban 6: Predicting Crime Without Real Evidence

What it means: AI that profiles people and predicts they will commit crimes — based only on their characteristics, with no objective factual basis — is banned.

AI Regulatory Sandboxes — A Special Opportunity for Researchers

Here is one of the most researcher-friendly parts of the EU AI Act: regulatory sandboxes.

What Is a Regulatory Sandbox?

A regulatory sandbox is a controlled environment where you can develop and test an innovative AI system under official supervision — without needing full compliance from day one.

Think of it like a testing lab with official permission. You can try things out, make mistakes in a safe environment, and get direct guidance from regulators — before your system has to meet all the requirements of the live market.

Why This Is Valuable for Researchers

- You can develop cutting-edge AI without the full compliance burden immediately

- You get direct guidance from the national authority about what compliance will look like for your specific system

- Small businesses, startups, and research spinouts get priority access

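- Within the sandbox, you are protected from administrative fines under the Act as long as you follow the agreed sandbox plan and the authority’s guidance in good faith (liability for actual harm caused to third parties still applies)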

How to Apply for a Sandbox in 2026

- Find your national authority — each EU country has a designated authority managing sandbox access. Germany, France, the Netherlands, and other countries have active programmes

- Prepare an application describing your AI system, why it requires sandbox access, and your testing plan

- For pan-EU GPAI model sandboxes — the European AI Office is setting up access at EU level, particularly relevant for large language model research

- For Indian researchers — access is typically available through your EU university or research institution partner. Ask your institution’s research and compliance office about available sandbox programmes

Compliance Checklist for Researchers

Use this as a practical starting point. It is not a substitute for qualified legal advice on your specific project.

Before You Start Building

- Identify whether your AI system falls into any of the banned practices categories

- Determine whether your system qualifies as high-risk (check the areas in the table above; Annex III of the Act lists eight in total)

- Decide whether your system is a GPAI model

- Write down your risk classification reasoning — reference specific parts of the law

During Development

- Keep a technical documentation file from day one — don’t wait until you’re done

- Document your training data — where it came from, how it was selected, any known biases

- Test your model’s performance across different demographic groups, not just overall

- Build in a human override mechanism — humans must be able to stop or correct the AI

- If using personal data, confirm GDPR compliance

Before Deployment

- Complete a Fundamental Rights Impact Assessment (FRIA) if deploying a high-risk system (template in the Tools and Templates section below)

- Ensure your system is registered in the EU database (for high-risk Annex III systems)

- Write a clear user guide explaining what the system does, its limitations, and how to raise concerns

- Test your human oversight mechanism — does it actually work as designed?

After Deployment

- Set up incident monitoring — a way to detect when something goes wrong

- Know your reporting obligations — what needs to be reported, to whom, and how quickly

- Schedule a quarterly review of the system’s performance and the compliance documentation

- Update your FRIA whenever the system changes significantly

A Simple Compliance Example (Step by Step)

Important: This is an invented, illustrative example created for teaching purposes. It does not represent any real person, institution, or actual compliance situation.

Meet Arjun. He is a PhD researcher at a Dutch university. His project: an AI system that automatically evaluates student essays and gives them a score and feedback.

Step 1: Arjun checks the risk level. He looks at the EU AI Act. Category 3 of the high-risk list covers AI used in education for “assessing examination results” and “evaluating learning progress.” His system clearly fits this description.

Result: High-risk AI system.

Step 2: Arjun starts his documentation file. From the first day of his project, he keeps a file recording:

- What his AI is trained to do (evaluate argumentative essays)

- Where his training data came from (past student essays, collected with consent)

- How he tested the model’s accuracy

- That the model performs about 5% less accurately for non-native English writers — a gap he notes clearly

Step 3: Arjun completes a Fundamental Rights Impact Assessment. He identifies one key risk: the lower accuracy for non-native speakers could lead to those students receiving unfairly low scores. This is a potential discrimination concern.

His solution: any AI score below 60% triggers a mandatory human review. Students are informed of this policy in the course guidelines.
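Arjun's review rule is easy to express in code. The sketch below is illustrative only (Arjun is an invented example, and the function names are hypothetical): scores under the 60% threshold go to a human-review queue, everything else is released.

```python
REVIEW_THRESHOLD = 60.0  # percent, taken from the policy in the example


def needs_human_review(ai_score, threshold=REVIEW_THRESHOLD):
    """True when the AI score falls below the review threshold."""
    return ai_score < threshold


def route(essay_scores):
    """Split {essay_id: score} into released scores and a human-review queue."""
    released = {}
    review_queue = {}
    for essay_id, score in essay_scores.items():
        if needs_human_review(score):
            review_queue[essay_id] = score
        else:
            released[essay_id] = score
    return released, review_queue


released, review_queue = route({"essay-a": 59.9, "essay-b": 60.0, "essay-c": 85.0})
print(review_queue)  # {'essay-a': 59.9}
```

A score of exactly 60 is released here because the rule says "below 60%"; whichever way the boundary is handled, the choice should be written down in the documentation file.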

Step 4: Arjun designs the human oversight. The course administrator can see all AI-generated scores and override any of them. Every student has the right to request human review of their score.

Step 5: Arjun tests his deployment. Before the system goes live, he runs a pilot with 30 volunteer students. He checks whether the human override actually works and whether the lower-accuracy gap for non-native speakers is being caught by the review trigger.

Result: The system goes into limited deployment with documented compliance in place. The whole process took about three months.

Common Mistakes to Avoid

Assuming academic research is automatically exempt. There is a limited research exemption — but only for AI that is still in pure development and has never been used by real people. The moment you test your AI on real students, patients, or job applicants — even in a small pilot — compliance obligations may start to apply.

Classifying your system as lower risk to avoid paperwork. Deliberately misclassifying a high-risk system to avoid compliance is a serious legal risk. Penalties under the EU AI Act go up to €35 million or 7% of global annual turnover for the most serious violations (the prohibited practices), and up to €15 million or 3% for breaching most other obligations, including the high-risk requirements. Honest documentation is both ethically right and strategically safer.

Treating GDPR and the AI Act as separate. If your AI uses personal data, both laws apply simultaneously. Data Protection Impact Assessments under GDPR and Fundamental Rights Impact Assessments under the AI Act often overlap. Coordinate your compliance work to address both at the same time.

Leaving documentation until the end. Documentation written after the fact is always incomplete. You will not remember exactly which data preprocessing choices you made, or why you chose a particular model architecture, three months later. Start the documentation file on day one.

Building incident monitoring after an incident. Do not wait for something to go wrong before you set up a system for detecting problems. Build monitoring into your deployment from the start.

Tools and Templates to Get Started

Simple FRIA Template (Fundamental Rights Impact Assessment)

SECTION 1 — WHAT DOES YOUR AI SYSTEM DO?

- Name of the system:

- What decision does it make or assist with?

- Who is affected by this decision?

SECTION 2 — WHICH RIGHTS COULD BE AFFECTED?

(Tick all that apply)

[ ] Right to privacy

[ ] Right to non-discrimination

[ ] Right to education / fair access

[ ] Right to appeal a decision

[ ] Other: ___________

SECTION 3 — HOW LIKELY IS HARM, AND HOW SERIOUS?

For each right ticked above:

- How likely is harm? (Low / Medium / High)

- How serious would that harm be? (Low / Medium / High)

- Which groups are most affected?

SECTION 4 — WHAT WILL YOU DO ABOUT IT?

Technical steps:

(e.g., human review for borderline cases, bias testing, accuracy thresholds)

Process steps:

(e.g., appeal mechanism, user notification, audit schedule)

SECTION 5 — WHEN WILL YOU REVIEW THIS?

Date of this assessment: ___________

Next review date: ___________

What would trigger an early review?

(e.g., model retrained, scope expanded, incident reported)
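Section 3 of the template can be turned into a simple priority ranking so that the most pressing risks are handled first. The scoring scheme below (Low = 1, Medium = 2, High = 3, multiplied together) is an assumption made for illustration; the Act does not prescribe a scoring formula.

```python
LEVELS = {"Low": 1, "Medium": 2, "High": 3}  # illustrative scale, an assumption


def risk_priority(likelihood, severity):
    """Combine the two Section 3 answers into a single number from 1 to 9."""
    return LEVELS[likelihood] * LEVELS[severity]


def rank_rights(assessments):
    """assessments: {right: (likelihood, severity)}. Highest priority first."""
    return sorted(
        assessments,
        key=lambda right: risk_priority(*assessments[right]),
        reverse=True,
    )


example = {
    "Right to privacy": ("Low", "Medium"),
    "Right to non-discrimination": ("Medium", "High"),
}
print(rank_rights(example))
```

The ranking is only a way to order the work in Section 4; a low score does not mean a right can be ignored.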

Risk Classification Quick Check

Before building anything, answer these five questions:

- Will your AI make or strongly influence a decision about a real person?

- Does it involve any of the eight high-risk categories (education, healthcare, employment, etc.)?

- Does it process biometric data (faces, fingerprints, voice)?

- Is it trained on a very large dataset with significant computational resources?

- Is it intended for use in law enforcement, border control, or justice?

If you answered yes to any of these, treat your system as potentially high-risk (a yes to the fourth question also suggests checking the GPAI rules) and get proper advice before proceeding.
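The quick check above is an "any yes" rule, which can be sketched as follows. The question keys are hypothetical short names for the five questions, and the result is a prompt to seek advice, not a legal classification.

```python
# Hypothetical short keys for the five quick-check questions, in order.
QUICK_CHECK_QUESTIONS = (
    "affects_decision_about_person",
    "in_high_risk_area",
    "processes_biometric_data",
    "large_scale_training",
    "law_enforcement_border_or_justice",
)


def needs_closer_review(answers):
    """Return True if any of the five questions was answered yes."""
    missing = [q for q in QUICK_CHECK_QUESTIONS if q not in answers]
    if missing:
        raise ValueError(f"Unanswered questions: {missing}")
    return any(answers[q] for q in QUICK_CHECK_QUESTIONS)


answers = {q: False for q in QUICK_CHECK_QUESTIONS}
answers["in_high_risk_area"] = True  # e.g. an essay-scoring system
print(needs_closer_review(answers))  # True
```

Refusing to answer when a question is missing avoids a false "no" caused by an incomplete form.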

What This Means for Indian Researchers and Startups

The EU AI Act matters to India’s AI community in several very direct ways.

When You Are Directly Affected

- PhD researchers at EU universities — you are subject to EU law and your institution’s compliance obligations

- Indian researchers on EU-funded grants — Horizon Europe and other EU programmes increasingly require AI Act compliance

- Indian AI startups building for European markets — you are a “provider” under the Act and must comply

- Researchers publishing in EU journals or presenting at EU conferences — compliance indicators are being incorporated into academic standards

What You Should Do Now

1. Audit your EU-connected AI work. Make a list of every project involving EU collaborators, EU funding, EU data, or intended EU deployment. For each one, ask: does this involve high-risk AI?

2. Build documentation habits. Even if you are not sure whether the law applies to your specific situation, maintaining good documentation — what your AI does, how it was built, what its limitations are — is good research practice regardless of regulation. It also makes your work more reproducible and trustworthy.

3. Engage your institution’s ethics and legal office. Major Indian research institutions with international partnerships are developing AI governance frameworks. Find out what your institution requires.

4. Watch for harmonised standards. European standards organisations are publishing technical standards that translate the AI Act’s legal requirements into specific engineering practices. When published, these will be the most practical guidance available for implementation.

5. Consider regulatory sandbox access. If you have an innovative AI system and an EU partnership, ask whether sandbox access is available through your partner institution. This can significantly ease your compliance pathway.

The Opportunity Framing

India has positioned itself as a serious player in global AI development. The EU AI Act, rather than being just a compliance burden, creates an opportunity: Indian researchers and startups that demonstrate robust AI ethics and compliance practices are better positioned for EU partnerships, international funding, and global market access.

The standards the EU AI Act sets are increasingly becoming the de facto global benchmark. Building to those standards now is building for the future.

Frequently Asked Questions

Q1. Does the EU AI Act affect researchers outside Europe?

Yes — if your AI system is used in the EU, you work at an EU institution, or you participate in EU-funded research, the Act likely applies to you. The law is based on where the AI is used, not where it was built. Indian researchers collaborating with EU universities or developing products for European markets are directly within its scope.

Q2. Is there an exemption for academic research?

There is a narrow exemption for AI still in pure research and development that has not been used by real people. Once you test your AI on actual users — even a small pilot group — compliance requirements may start to apply. The exemption is much narrower than most researchers assume. When in doubt, seek legal guidance.

Q3. What is a FRIA and do I need one?

A Fundamental Rights Impact Assessment is a structured evaluation of how your AI might affect people’s rights. It is formally required in certain deployment contexts for high-risk AI. More broadly, it is best practice for any AI that makes consequential decisions about individuals. A template is provided in this guide to help you get started.

Q4. What happens if I don’t comply?

The EU AI Act includes significant penalties. For the most serious violations (using prohibited AI practices), fines can reach €35 million or 7% of global annual turnover, whichever is higher. Failing to comply with most other obligations, including the high-risk system requirements, can cost up to €15 million or 3%. Supplying incorrect information to authorities carries lower fines. For research institutions, non-compliance could also affect EU funding eligibility.
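The "whichever is higher" rule is a simple maximum of a fixed cap and a share of turnover. In the sketch below the cap and percentage are passed in as arguments rather than hard-coded. The example call uses €35 million and 7%, the ceiling that Article 99 of the adopted Regulation sets for prohibited practices; treat these as inputs to verify against the official text. The turnover figures are hypothetical.

```python
def maximum_fine_eur(annual_turnover_eur, fixed_cap_eur, turnover_percent):
    """Upper bound of a fine: the fixed cap or the turnover share, whichever is higher."""
    turnover_based = annual_turnover_eur * turnover_percent / 100
    return max(fixed_cap_eur, turnover_based)


# Hypothetical company with EUR 1 billion global annual turnover.
print(maximum_fine_eur(1_000_000_000, 35_000_000, 7))  # 70000000.0

# Hypothetical startup with EUR 10 million turnover: the fixed cap is the higher figure.
print(maximum_fine_eur(10_000_000, 35_000_000, 7))  # 35000000
```

These are ceilings, not automatic amounts: the actual fine depends on the circumstances of the case.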

Q5. When do the rules for high-risk AI systems fully apply?

August 2026 is the main deadline for high-risk AI systems under Annex III — the list that includes AI in education, healthcare, employment, and similar areas. The prohibition on banned AI practices has been in effect since February 2025. If you are building high-risk AI, planning for August 2026 compliance should start now.

Q6. What is a GPAI model under the EU AI Act?

GPAI (General Purpose AI) models are large AI systems trained on broad data that can perform many different tasks — like large language models. From August 2025, GPAI model providers must maintain documentation, publish training data summaries, and comply with copyright policies. Very large GPAI models above a specific computational threshold face additional requirements including adversarial testing and incident reporting.

Final Thoughts

The EU AI Act can feel overwhelming when you first encounter it — full of legal language, cross-references, and complex classifications.

But at its core, it asks a simple question:

If your AI makes an important decision about a person’s life — their education, their job, their health, their freedom — can you show that you built it carefully, tested it honestly, and designed it so humans can understand and control it?

If the answer is yes, you are already most of the way to compliance. The documentation, the FRIA, the oversight mechanisms — these are just the formal structure for demonstrating something that responsible AI development should already include.

Start by classifying your risk level. Start your documentation file. Build the human override into your design from the beginning.

The EU AI Act’s compliance timeline gives you until August 2026 for high-risk systems. That is enough time — if you start now.

“Compliance today builds trust tomorrow.”

Official Sources

- EU AI Act Full Text: https://eur-lex.europa.eu/legal-content/EN/TXT/?uri=OJ:L_202401689

- European AI Office: https://digital-strategy.ec.europa.eu/en/policies/european-ai-office

- Plain-language AI Act summary: https://artificialintelligenceact.eu

- EU AI Act Compliance Checker: https://artificialintelligenceact.eu/assessment/eu-ai-act-compliance-checker/

Disclaimer: This guide is for educational purposes. The EU AI Act’s implementing regulations, official guidance, and harmonised standards continue to be published. Always check official EU sources for the latest information, and consult qualified legal counsel for compliance decisions.
