Last Updated: April 28, 2026

AI Ethics and the Future

Why AI Ethics Matters More Than Ever

Think about the last time you applied for a loan, searched for a job online, or visited a doctor.

Chances are, an AI system was involved somewhere in that process. It may have helped decide whether you qualify for credit. It may have sorted your job application before a human ever saw it. It may have suggested a diagnosis or treatment pathway.

These are not small decisions. They affect whether people have money to live on, whether they get hired, whether they receive the right medical care.

Now ask yourself: who checks whether these AI systems are fair? Who makes sure they don’t make mistakes that hurt certain groups of people more than others? Who ensures they can be questioned or challenged when they get it wrong?

These are the questions AI ethics is trying to answer.

AI ethics is simply about making sure AI is built and used in ways that are fair, honest, safe, and respectful of people’s rights. It is about asking — before we release a powerful AI tool into the world — “could this harm someone, and if so, what are we doing to prevent that?”

This guide explains the main ethical concerns in AI, the real-world problems they cause, what governments and researchers are doing about them, and practical steps you can take right now.

What Are Ethical Concerns in AI?

There are five main areas where AI raises serious ethical questions. Here is each one explained as simply as possible.

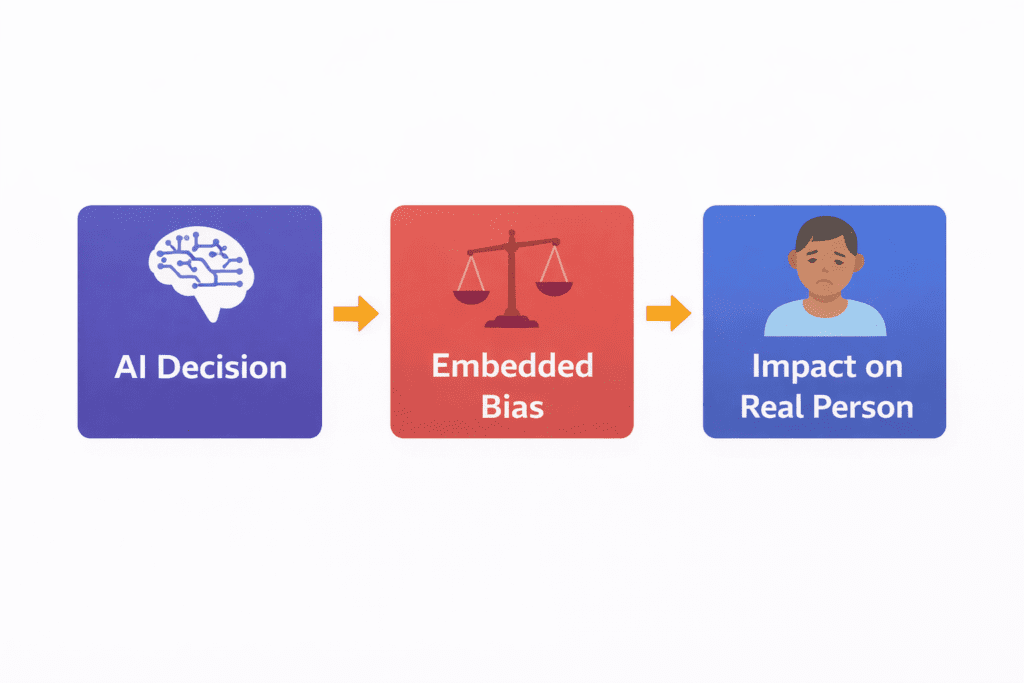

1. Bias and Discrimination

AI systems learn from data. If that data reflects the unfair patterns of the past — historical discrimination in hiring, lending, criminal justice — the AI will reproduce those patterns.

Simple example: Imagine an AI trained on 10 years of hiring decisions from a company that mostly hired men. The AI learns that “good employees” look like the people the company previously hired — and starts filtering out women’s applications automatically.

The AI is not intentionally sexist. It is just repeating the pattern in the data it learned from. But the outcome — women being less likely to get interviews — is real discrimination.

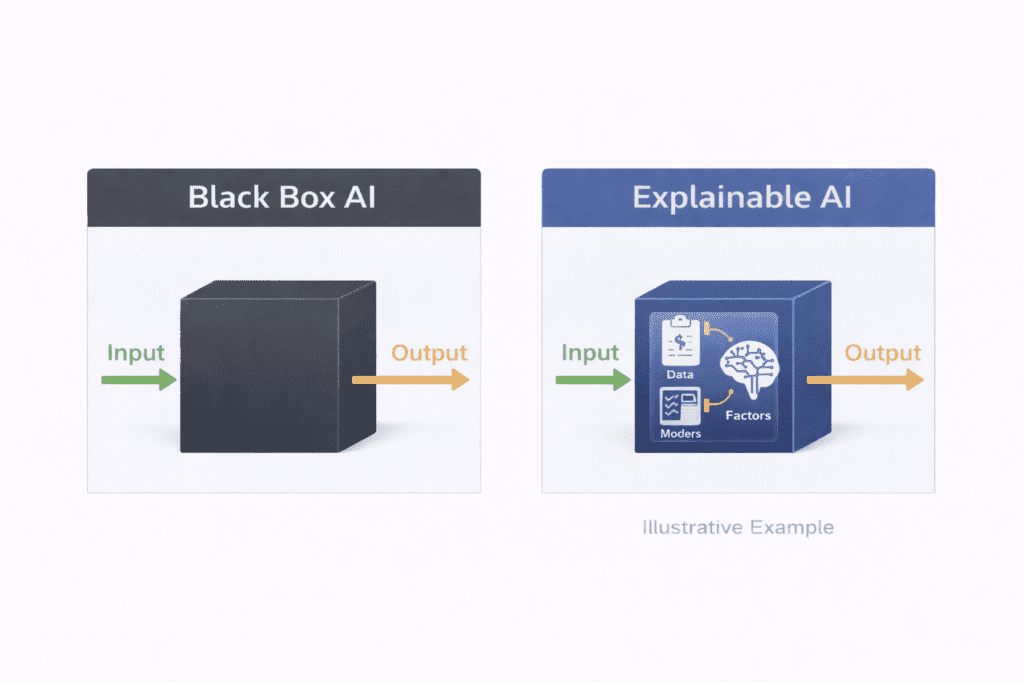

2. Lack of Transparency (The Black Box Problem)

When you make a decision, you can usually explain why. “I chose this candidate because of their experience and communication skills.” AI systems often cannot do this.

Many modern AI systems — especially deep learning models — produce outputs without any readable explanation of how they got there. They take in data, run it through millions of calculations, and produce a result. What happened in the middle is essentially a mystery.

This becomes a serious problem when the AI is deciding whether you get a loan, or whether you are a flight risk, or whether your cancer scan requires urgent follow-up.

3. Privacy Violations

AI systems need large amounts of data to work well. That data often includes personal information — your browsing history, health records, financial behaviour, location data, social media activity.

The concern is not just that this data is collected. It is what is done with it. AI systems can draw inferences from this data that you never consented to share — like predicting your health conditions from your shopping history, or inferring your political views from your online reading patterns.

4. Accountability Gaps

When an AI system makes a mistake that harms someone — who is responsible?

The company that built it? The organisation that deployed it? The team that collected the training data?

Current laws and organisational structures often do not have clear answers to this question. This means that when AI causes harm, the people harmed often have no clear path to challenge the decision or receive acknowledgement of the mistake.

5. Human Control

As AI systems become more capable, keeping meaningful human control over their decisions becomes both more important and more difficult.

The concern is not science-fiction scenarios about robots taking over. It is the practical question: when an AI is making 10,000 decisions per hour about loan applications, medical records, or social media content — is any human actually watching, understanding, and able to correct what it does?

AI Bias and Fairness — The Problem With Unfair AI

Bias in AI is not rare. Based on published research across healthcare, employment, and finance, it is a common feature of AI systems trained on real-world data — because real-world data reflects real-world inequality.

Where Does Bias Come From?

From the training data. If the data used to train an AI reflects historical discrimination, the AI will replicate that discrimination. A medical AI trained mostly on data from one demographic group may work less well for patients from other groups.

From what the data measures. Sometimes AI uses indirect signals as a proxy for what it actually wants to measure. Using someone’s postcode as a measure of creditworthiness, for example, ends up encoding assumptions based on race and income — because where people live is strongly shaped by both.

From feedback loops. If a biased AI makes decisions that influence the data used to train the next version of the AI, the bias can grow stronger over time rather than weaker.

Real Examples From Research

Note: The following examples are based on patterns reported in academic and investigative research. They are not claims about specific named products.

- Research has found that AI medical diagnostic tools trained primarily on data from lighter-skinned patients can be less accurate for patients with darker skin tones.

- Studies have shown that AI hiring tools can learn to deprioritise candidates based on gender because historical hiring data reflects gender imbalances.

- Research on credit scoring AI has found that algorithms using indirect variables can end up discriminating by race even when race is not included as an input.

What Can Be Done?

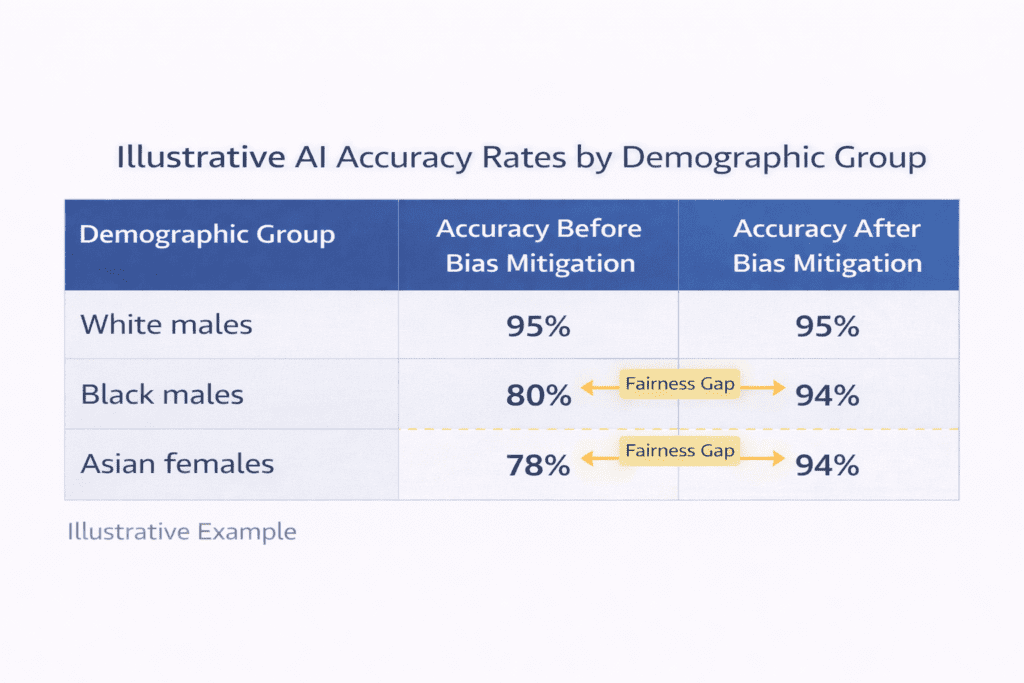

Fairness audits are one of the most practical tools. Before an AI system is released, developers run tests comparing how it performs across different groups — by gender, age, race, or other relevant characteristics. If the system makes significantly more errors for one group than another, that signals a problem requiring investigation and correction.

Tools like IBM AI Fairness 360, Microsoft Fairlearn, and Google’s What-If Tool help developers run these tests.
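The core calculation in such an audit is simple enough to sketch without those libraries. The minimal Python example below assumes you already have the true outcome, the model's prediction, and a group label for each record. The toy data and the function name `audit_by_group` are hypothetical. For each group, it reports accuracy and the false negative rate, which is how often genuinely qualified people were rejected. Fairlearn's `MetricFrame` class breaks metrics down by group in a similar way, with many more options.

```python
from collections import defaultdict


def audit_by_group(y_true, y_pred, groups):
    """Per-group accuracy and false negative rate (FNR).

    y_true, y_pred: 0/1 labels, where 1 is the positive outcome (e.g. "qualified").
    groups: one group label per record.
    """
    tallies = defaultdict(lambda: {"n": 0, "correct": 0, "positives": 0, "missed": 0})
    for truth, pred, group in zip(y_true, y_pred, groups):
        t = tallies[group]
        t["n"] += 1
        t["correct"] += int(truth == pred)
        if truth == 1:
            t["positives"] += 1
            t["missed"] += int(pred == 0)  # qualified person the model rejected
    report = {}
    for group, t in tallies.items():
        report[group] = {
            "n": t["n"],
            "accuracy": t["correct"] / t["n"],
            # FNR is undefined for a group with no positive examples
            "fnr": t["missed"] / t["positives"] if t["positives"] else None,
        }
    return report


# Toy, made-up data (1 = qualified / approved)
y_true = [1, 1, 0, 1, 0, 1, 1, 0, 1, 1]
y_pred = [1, 1, 0, 1, 0, 0, 0, 0, 1, 0]
groups = ["A"] * 5 + ["B"] * 5

report = audit_by_group(y_true, y_pred, groups)
for group, metrics in sorted(report.items()):
    print(group, metrics)
accuracies = [m["accuracy"] for m in report.values()]
print("largest accuracy gap between groups:", round(max(accuracies) - min(accuracies), 3))
```

In this made-up data the model is perfect for group A. For group B, it wrongly rejects three of the four qualified applicants. That is exactly the kind of gap an audit should surface and a human should then investigate.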

The honest limitation: Finding a bias gap is the first step. Understanding whether it reflects an actual unfairness — versus a real difference in the phenomenon being measured — requires careful human analysis. Fairness auditing is necessary but not a complete solution on its own.

The AI Black Box Problem — When AI Can’t Explain Itself

Here is a simple way to understand why this matters.

Imagine you are denied a job. You ask why. The hiring manager says: “The AI system said no. I don’t know why. I can’t explain it. That’s just the output.”

Is that fair? Should you have the right to understand why a consequential decision was made about you?

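Most people would say yes. Many legal frameworks agree. The GDPR in Europe, for example, entitles people to meaningful information about the logic involved in solely automated decisions that significantly affect them.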

The problem is that many of the most powerful AI systems in use today genuinely cannot produce a human-readable explanation for their decisions. They work by processing data through layers of mathematical operations involving billions of numbers. The final output emerges from this process — but there is no single sentence inside the model that says “I chose this because of X.”

This is the AI black box problem.

Why It Matters Beyond Fairness

For safety: If you can’t understand why an AI makes mistakes, you can’t predict when it will make them next. A black-box medical AI that is wrong in ways nobody understands is unpredictable in dangerous ways.

For accountability: If neither the developer nor the deployer can explain a harmful decision, no one can meaningfully be held responsible for it.

For trust: People are understandably reluctant to trust systems that cannot explain themselves — particularly for decisions that significantly affect their lives.

What Is Being Done — Explainable AI (XAI)

Researchers are building tools to make AI more interpretable. This field is called Explainable AI or XAI.

Some examples:

- SHAP: Breaks down an AI’s prediction and shows you which inputs pushed the decision in which direction. For a loan refusal, it might show: “Low income contributed to the negative decision. High credit score worked in your favour.”

- LIME: Creates a simpler version of the complex AI’s behaviour around one specific decision — giving an approximation of the reasoning for that particular case.

- Attention maps: In AI systems that analyse images or text, these show visually which parts of the input the AI “paid most attention to” when making its decision.
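SHAP is based on Shapley values from cooperative game theory. Each input gets a share of the difference between this prediction and a baseline prediction. The sketch below computes exact Shapley values by brute force for a tiny, hypothetical loan-scoring function. A feature that is "left out" is set to a baseline value. This is not the `shap` library, which uses faster approximations and other ways of handling missing features. The model, feature names, and baseline applicant are all illustrative assumptions.

```python
from itertools import combinations
from math import factorial


def loan_score(x):
    """Hypothetical loan-scoring model (features scaled 0 to 1; higher = more likely to approve)."""
    return (0.5 * x["credit_score"] + 0.3 * x["income"] - 0.2 * x["debt"]
            + 0.1 * x["income"] * x["credit_score"])


def shapley_values(model, instance, baseline):
    """Exact Shapley value of each feature for model(instance).

    Features outside a coalition are replaced by their baseline value.
    Cost grows as 2**n, so this is only practical for a few features.
    """
    features = list(instance)
    n = len(features)

    def value(coalition):
        x = {f: instance[f] if f in coalition else baseline[f] for f in features}
        return model(x)

    phi = {}
    for f in features:
        others = [g for g in features if g != f]
        total = 0.0
        for size in range(n):
            # Standard Shapley weight |S|! (n - |S| - 1)! / n!
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                total += weight * (value(s | {f}) - value(s))
        phi[f] = total
    return phi


applicant = {"credit_score": 0.9, "income": 0.2, "debt": 0.8}
average_applicant = {"credit_score": 0.5, "income": 0.5, "debt": 0.5}  # hypothetical baseline

contributions = shapley_values(loan_score, applicant, average_applicant)
for feature, phi in contributions.items():
    print(f"{feature:>12}: {phi:+.3f}")
# The contributions add up to loan_score(applicant) - loan_score(average_applicant).
```

For this applicant, the high credit score pushes the score up (about +0.21), while low income pulls it down (about -0.11). This mirrors the loan example above. Because the enumeration covers every subset of features, this approach is only practical for a handful of inputs.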

The important honest caveat: These tools give approximations and interpretations. They do not reveal the AI’s “true reasoning” in the way a human could explain their own thinking. XAI is a genuine advance, but it does not fully solve the black box problem — it makes it more manageable.

Privacy — How AI Uses Your Data (And Why That’s a Problem)

Every time you use the internet, make a purchase, or interact with a digital service, you generate data. Lots of it.

AI systems are built on this data. The more data they have, the better they can predict and decide. But the collection and use of all this personal information creates real risks for ordinary people.

The Three Main Privacy Problems

Problem 1: Data used beyond what you agreed to. When you sign up for a fitness app, you agree to share your exercise data. You do not agree to have that data used to train an AI that predicts your likelihood of developing a chronic illness, with those predictions then sold to an insurance company. But downstream uses like these can and do happen.

Problem 2: Sensitive inferences from non-sensitive data. AI systems are very good at detecting patterns. They can infer that you are probably pregnant from changes in your shopping patterns, even if you never disclosed it. They can estimate your political views from your news reading habits. They can infer health conditions from the types of searches you make.

You consented to share your shopping history. You did not consent to share your health status. But the AI drew the connection anyway.

Problem 3: Re-identification. Data that has been “anonymised” can often be re-identified when combined with other data sources. Your anonymised location data, plus your anonymised purchase history, plus your anonymised social media activity can, in combination, point uniquely to you.
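One simple way to gauge this risk is to count how many records share each combination of quasi-identifiers. These are attributes that are not names but can still single someone out when combined. In k-anonymity terms, a combination shared by only one record (k = 1) points uniquely to a person. The sketch below uses made-up records and an assumed choice of quasi-identifiers (postcode, birth year, gender). A real assessment would also consider which outside datasets an attacker could link against.

```python
from collections import Counter


def group_sizes(records, quasi_identifiers):
    """Count how many records share each combination of quasi-identifier values."""
    return Counter(tuple(r[q] for q in quasi_identifiers) for r in records)


def unique_records(records, quasi_identifiers):
    """Records whose quasi-identifier combination appears only once (k = 1)."""
    sizes = group_sizes(records, quasi_identifiers)
    return [r for r in records if sizes[tuple(r[q] for q in quasi_identifiers)] == 1]


# Made-up "anonymised" dataset: no names, but still potentially identifying
records = [
    {"postcode": "560001", "birth_year": 1990, "gender": "F", "diagnosis": "asthma"},
    {"postcode": "560001", "birth_year": 1990, "gender": "F", "diagnosis": "diabetes"},
    {"postcode": "560001", "birth_year": 1985, "gender": "M", "diagnosis": "none"},
    {"postcode": "110001", "birth_year": 1972, "gender": "F", "diagnosis": "hypertension"},
]
qi = ["postcode", "birth_year", "gender"]

k = min(group_sizes(records, qi).values())
print("k-anonymity of this dataset:", k)
for r in unique_records(records, qi):
    print("uniquely identifiable:", {q: r[q] for q in qi})
```

Two of the four records are unique on these three fields alone. Anyone who knows such a person's postcode, birth year, and gender could therefore learn their diagnosis.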

What Legal Frameworks Require

The GDPR (Europe’s data protection law) requires:

- A legal reason to collect personal data in the first place

- Limiting collection to only what is necessary

- Using data only for the purpose it was collected for

- Giving people the right to access, correct, and delete their data

- Safeguards for solely automated decisions that significantly affect people, including meaningful information about the logic involved

India’s Digital Personal Data Protection Act (DPDPA) 2023 creates similar protections within India.

Emerging Privacy-Preserving Techniques

Researchers are developing ways to build AI systems that work well without needing to see raw personal data:

- Federated learning: The AI learns from data held on many different devices without that data ever being collected in one central place; only model updates are shared

- Differential privacy: Carefully calibrated random “noise” is added to the data or to the results of an analysis. This limits what can be learned about any one individual while still allowing useful patterns to be detected

- Synthetic data: AI is trained on artificially generated data that mimics the statistical patterns of real data without directly containing real people’s records
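To make the differential privacy idea concrete, here is a minimal sketch of the classic Laplace mechanism applied to a count query. It assumes each person contributes at most one record, so adding or removing one person changes the count by at most 1. This bound is the query's "sensitivity". The noise scale is then the sensitivity divided by the privacy parameter epsilon, so a smaller epsilon means more noise and stronger privacy. The data are made up, and the code is for illustration only. Production systems should use a vetted library, because naive floating-point noise sampling has known weaknesses.

```python
import random


def laplace_noise(scale, rng):
    """Laplace(0, scale) sample: the difference of two exponential draws with mean `scale`."""
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def private_count(values, predicate, epsilon, rng):
    """Epsilon-differentially private count of items satisfying `predicate`.

    Assumes each person contributes one item, so sensitivity = 1 and
    the Laplace scale is sensitivity / epsilon.
    """
    true_count = sum(1 for v in values if predicate(v))
    return true_count + laplace_noise(1 / epsilon, rng)


ages = [23, 35, 41, 52, 67, 29, 71, 38, 45, 60]  # made-up data; true count of 60+ is 3
rng = random.Random(42)  # fixed seed only so the demo is repeatable

for epsilon in (0.1, 1.0, 10.0):
    noisy = private_count(ages, lambda age: age >= 60, epsilon, rng)
    print(f"epsilon={epsilon:>4}: released count = {noisy:.2f}")
```

The noise scale is 0.1 at epsilon = 10 but 10 at epsilon = 0.1. For a dataset this small, the released count is therefore only useful at the lower-privacy setting, which illustrates the privacy/accuracy trade-off.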

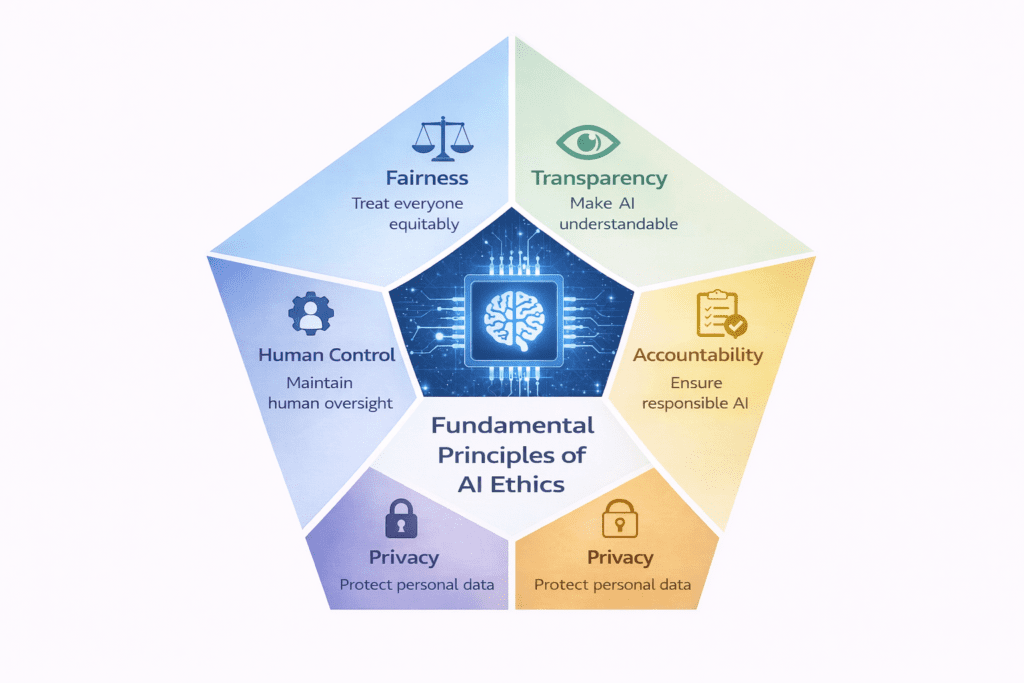

AI Ethics Frameworks — What the World Is Agreeing On

Governments, international organisations, and technology companies have been working to define what responsible AI looks like. Different frameworks have different emphases, but most converge on a common set of ideas that can be summarised as five core principles.

The Five Core Principles of AI Ethics

1. Fairness: AI should treat everyone equitably. It should not produce outcomes that discriminate against people based on their gender, race, age, disability, or other protected characteristics. Achieving fairness requires active effort; it does not happen automatically.

2. Transparency: People should be able to understand, in plain terms, how an AI system makes decisions that affect them. The level of explanation required should match the seriousness of the decision.

3. Accountability: Someone must be responsible when AI goes wrong. Developers, deployers, and organisations cannot avoid responsibility by saying “the algorithm decided.”

4. Privacy: Personal data used in AI must be handled with care and respect. People’s data should be collected minimally, used only for stated purposes, and protected from misuse or unexpected use.

5. Human Control: Humans must stay meaningfully in charge of AI systems, especially in high-stakes areas. This means being able to understand what the AI is doing, monitor it, and override it when necessary.

Who Is Saying This?

These principles are not just academic ideas. They appear in formal frameworks from:

- UNESCO — adopted by 193 countries in 2021, covering human rights, sustainability, and dignity

- European Union — the EU High-Level Expert Group on AI published seven requirements for trustworthy AI

- OECD — 38+ countries have signed up to OECD AI principles emphasising human-centred values

- India — NITI Aayog published responsible AI principles in 2021 aligning with international standards

Responsible AI in India — What’s Happening Right Now

India is one of the world’s largest AI markets — both as a developer and a user of AI systems. The ethical stakes are correspondingly significant.

The Policy Landscape

IndiaAI Mission (2024): The Government of India launched the IndiaAI Mission with funding for computing infrastructure, AI applications, and datasets. “Safe and trusted AI” is listed as one of the mission’s core pillars. This reflects a recognition that capability building must go alongside responsibility.

Digital Personal Data Protection Act 2023 (DPDPA): India now has a data protection law that creates rules for how personal data can be collected, used, and stored. This directly affects how AI systems in India can use people’s data. Companies and researchers handling personal data must comply with these requirements.

NITI Aayog Responsible AI Principles: India’s policy planning body published responsible AI principles covering safety and reliability, equality, inclusivity and non-discrimination, privacy and security, transparency, accountability, and the protection and reinforcement of positive human values. These align closely with international frameworks, positioning India well for global AI collaboration.

Global Partnership on AI (GPAI): India is a member of GPAI, an international initiative bringing together governments and experts to guide the responsible development of AI.

India-Specific Challenges

Language diversity: India’s Constitution recognises 22 languages in its Eighth Schedule, and hundreds of dialects are spoken across the country. Many AI systems are trained primarily on English data. As a result, they often work significantly worse for people who communicate in Hindi, Bengali, Tamil, Telugu, or other Indian languages. This is both a technical problem and a fairness problem: AI works better for people who speak the language it was trained on.

Scale of deployment: India is deploying AI at enormous scale in healthcare, agriculture, and public services. When AI is used for medical diagnosis in rural clinics serving millions of people, or for crop prediction affecting millions of farmers, the consequences of errors are serious. Ethical oversight needs to match the scale of deployment.

Deepfakes and misinformation: AI-generated fake content — fake videos, fake audio, fake images — poses a significant challenge in India’s complex information environment. Ethical frameworks for synthetic media are a particularly urgent priority.

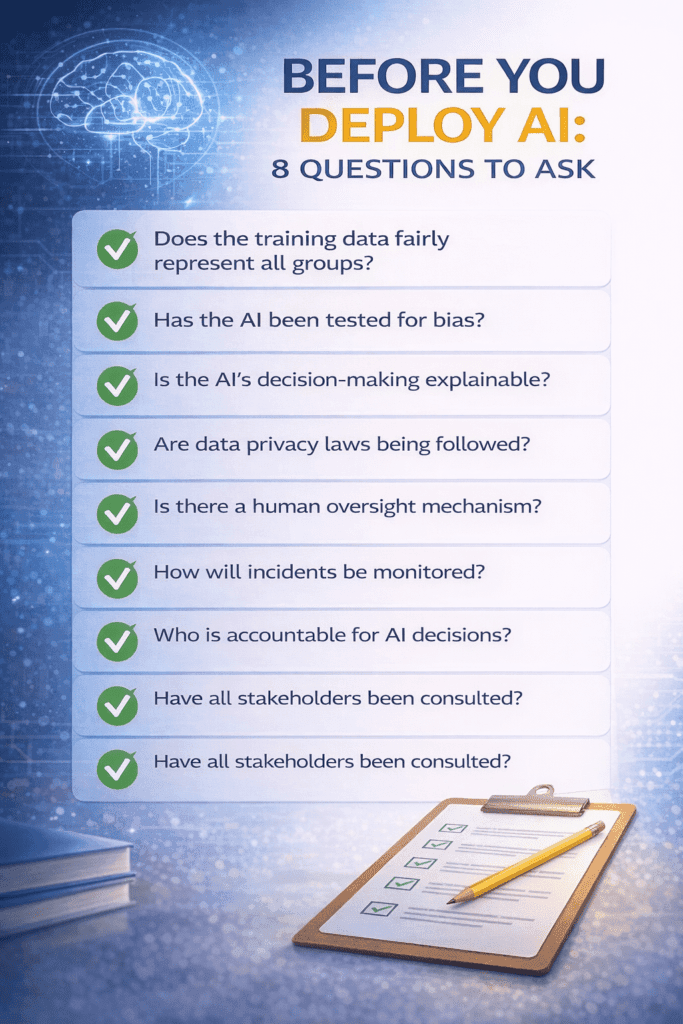

Practical Steps to Build Ethical AI

Understanding the problems is necessary. But for researchers, developers, and organisations, the more important question is: what do we actually do differently?

Here are practical, actionable steps — written for people who build or deploy AI systems.

For Developers and Researchers

Use diverse and representative data. Before training your model, ask: does my training data represent all the people this system will affect? If certain groups are underrepresented or misrepresented, the model will work less well for them. Document the gaps you find and what you did about them.
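A simple first check is to compare each group's share of the training data with its share of the population the system will serve. The sketch below does this with made-up numbers. The population shares, the group labels, and the 0.8 flagging ratio are illustrative assumptions. Choosing the right reference population is itself a judgement call.

```python
from collections import Counter


def representation_report(train_groups, population_shares, flag_ratio=0.8):
    """Compare each group's share of the training data with a reference share.

    flag_ratio is an arbitrary illustrative threshold: a group is flagged when
    its training share is below flag_ratio times its population share.
    Groups absent from population_shares are not reported.
    """
    counts = Counter(train_groups)
    total = sum(counts.values())
    report = {}
    for group, pop_share in population_shares.items():
        train_share = counts.get(group, 0) / total
        report[group] = {
            "train_share": train_share,
            "population_share": pop_share,
            "under_represented": train_share < flag_ratio * pop_share,
        }
    return report


# Made-up example: language of the records used to train a hypothetical triage model
train = ["English"] * 700 + ["Hindi"] * 200 + ["Tamil"] * 100
population = {"English": 0.3, "Hindi": 0.5, "Tamil": 0.2}  # hypothetical reference shares

for group, row in representation_report(train, population).items():
    print(group, row)
```

In this example, Hindi and Tamil records are both flagged. Each makes up well under 80% of its expected share of the training data.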

Test for fairness across groups — not just overall accuracy. A model that is 92% accurate overall might be only 78% accurate for one demographic group. That difference matters enormously. Test your model’s performance across gender, age, ethnicity, and any other characteristic relevant to your use case.
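This happens because overall accuracy is a weighted average of per-group accuracies, so a small group can perform badly without moving the headline figure much. The hypothetical numbers below are chosen to fit the example above. Suppose group B makes up 12.5% of the test set (p_B = 0.125) and group A the remaining 87.5%:

```latex
\mathrm{Acc}_{\text{overall}} = p_A\,\mathrm{Acc}_A + p_B\,\mathrm{Acc}_B
  = (0.875)(0.94) + (0.125)(0.78) = 0.8225 + 0.0975 = 0.92
```

Group B's accuracy is 16 percentage points below group A's, yet the overall figure still reads 92%.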

Document everything from the start. Write a “model card” — a standardised summary of your model’s purpose, performance, limitations, and intended use. Do this during development, not as an afterthought. Future users, auditors, and the people affected by your model all benefit from this transparency.
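A model card can start as a simple structured record that is kept and versioned alongside the code. The sketch below uses a Python dataclass with fields drawn from the description above: purpose, intended use, performance, and limitations. The class name `ModelCard`, its fields, and every example value are hypothetical and do not follow any official schema.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ModelCard:
    """Minimal, hypothetical model card structure (not an official schema)."""
    name: str
    version: str
    purpose: str
    intended_use: str
    out_of_scope_uses: str
    training_data: str
    performance: dict = field(default_factory=dict)        # report per group, not just overall
    known_limitations: list = field(default_factory=list)


card = ModelCard(
    name="loan-risk-classifier",
    version="0.3.0",
    purpose="Rank loan applications for review by a loan officer.",
    intended_use="Decision support only; a human makes the final decision.",
    out_of_scope_uses="Fully automated rejection; employment or insurance decisions.",
    training_data="Past applications; rural applicants under-represented (see fairness audit).",
    performance={"overall_accuracy": 0.92,
                 "accuracy_by_group": {"urban": 0.94, "rural": 0.78}},
    known_limitations=["Not validated for applicants without a credit history."],
)

card_json = json.dumps(asdict(card), indent=2)
print(card_json)
```

Serialising the card to JSON lets it be reviewed in the same way as code changes. It can also be updated whenever the model is retrained.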

Design for human oversight. Build your system so that a human can understand what it is doing, monitor its performance, and override its decisions when necessary. Do not treat human oversight as optional or as a feature to add later.
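One common pattern is sketched below under assumed rules. The model acts on its own only for confident, low-impact cases, and everything else goes to a person. Every decision is logged so it can be reviewed and overridden later. The 0.9 confidence threshold, the rule that every decline goes to a human, and all the names here are hypothetical design choices. They are not requirements from any particular framework.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    application_id: str
    model_outcome: str        # "approve" or "decline"
    confidence: float         # model's probability for its chosen outcome
    route: str = ""           # "auto" or "human_review"
    final_outcome: str = ""   # set automatically, or later by a reviewer


CONFIDENCE_THRESHOLD = 0.9  # hypothetical; in practice set from validation data


def route_decision(d: Decision) -> Decision:
    """Auto-apply only confident approvals; declines and uncertain cases go to a human."""
    if d.model_outcome == "approve" and d.confidence >= CONFIDENCE_THRESHOLD:
        d.route = "auto"
        d.final_outcome = "approve"
    else:
        d.route = "human_review"  # final_outcome stays empty until a person decides
    return d


def human_override(d: Decision, reviewer_outcome: str) -> Decision:
    """Record a reviewer's decision; it always takes precedence over the model."""
    d.final_outcome = reviewer_outcome
    return d


audit_log = [
    route_decision(Decision("A1", "approve", 0.97)),
    route_decision(Decision("A2", "decline", 0.99)),
    route_decision(Decision("A3", "approve", 0.62)),
]
human_override(audit_log[1], "approve")  # a reviewer disagrees with the model

for d in audit_log:
    print(d.application_id, d.model_outcome, d.confidence, d.route, d.final_outcome or "pending")
```

Sending every adverse outcome to a reviewer costs more staff time. In exchange, no one is refused solely on the model's say-so.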

Use explainability tools proportionate to risk. For high-stakes applications — medical, legal, financial — use tools like SHAP or LIME to understand how your model makes decisions. For lower-stakes applications, simpler forms of interpretability may be sufficient.

For Organisations

Set up an ethics review process. Before deploying any AI system that affects people’s lives, require a structured review — similar to how academic institutions review human subjects research. Ask: who could be harmed? How have we tested for that? What oversight is in place?

Monitor AI behaviour after deployment. Do not assume an AI that worked well in testing will behave the same way in real deployment on new data. Build monitoring systems to detect performance problems, unexpected errors, and potential harms.
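A simple post-deployment check compares the distribution of the model's scores (or inputs) in production with the distribution seen during validation. The sketch below computes the Population Stability Index (PSI), one widely used drift measure, over pre-binned proportions. The bins and the made-up proportions are assumptions. So are the 0.1 and 0.25 alert levels, which are a common rule of thumb rather than a standard.

```python
import math


def psi(expected, actual, eps=1e-6):
    """Population Stability Index between two lists of bin proportions.

    expected: share of scores per bin at validation time (sums to 1).
    actual:   share of scores per bin in production (same bins).
    eps avoids log(0) when a bin is empty.
    """
    total = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)
        total += (a - e) * math.log(a / e)
    return total


def drift_status(value):
    # Rule-of-thumb thresholds; tune them for your own setting.
    if value < 0.1:
        return "stable"
    if value < 0.25:
        return "moderate shift: investigate"
    return "major shift: review the model"


baseline = [0.10, 0.20, 0.40, 0.20, 0.10]   # score distribution at validation time
this_week = [0.05, 0.15, 0.35, 0.25, 0.20]  # made-up production distribution

value = psi(baseline, this_week)
print(round(value, 3), drift_status(value))
```

Drift is a warning sign rather than proof of harm. An alert like this should prompt a fresh per-group performance check once real outcomes are available.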

Talk to the people who will be affected. Consulting the communities most affected by an AI system — not just technical experts — produces better systems and builds trust. People most at risk of AI-driven harm often have the clearest view of where risks lie.

The Future of AI Ethics (2026–2030)

These are not predictions — they are directions based on where current research, policy, and technology trends are heading.

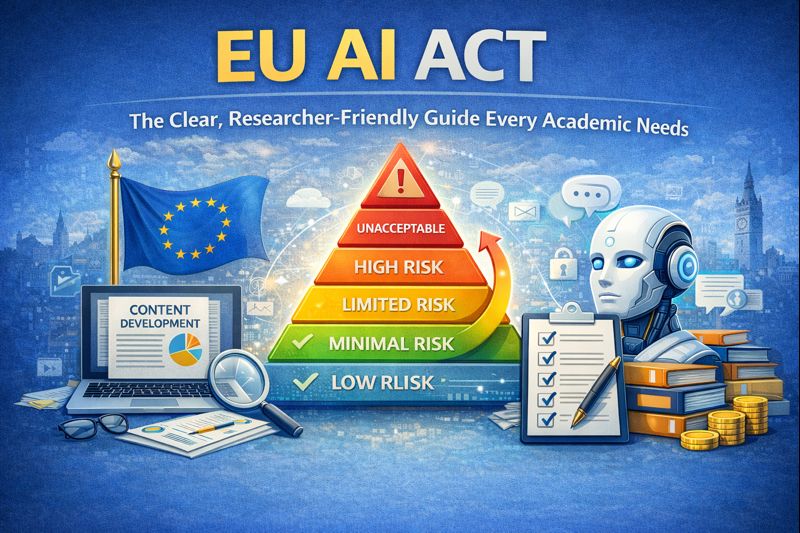

1. More Laws, More Countries

The EU AI Act entered into force in August 2024, with its main obligations scheduled to phase in between 2025 and 2027. Other countries are following: the United Kingdom, Canada, India, Brazil, and Japan are all developing AI governance frameworks. By 2030, most major economies are likely to have some form of AI regulation. Developers building for global markets will need to navigate multiple overlapping legal frameworks.

2. Ethics Built Into Technical Standards

AI ethics principles are moving from policy documents into engineering practice. International standards bodies — including ISO, IEEE, and European standards organisations — are publishing technical standards that tell developers specifically how to meet ethical requirements. Following these standards will increasingly be the practical way to demonstrate compliance.

3. Governing Foundation Models

Large AI models — the powerful systems that underlie ChatGPT, Gemini, and similar tools — are being used as foundations for thousands of other applications. If a foundation model has a bias or safety problem, it can propagate through all the applications built on it. Governments are working out how to regulate these foundation models specifically, which is a complex governance challenge.

4. AI in Democratic Life

AI is being used for political advertising, content curation, and information spreading at scale. How AI shapes what people read, believe, and vote for is becoming one of the most urgent ethical and political questions of our time. Ethics frameworks specifically for AI in democratic and public information contexts are developing rapidly.

5. Involving Affected Communities

There is growing recognition that AI ethics cannot be decided only by technologists and policymakers. The communities most affected by AI — particularly marginalised groups who face the highest risk of harm — need meaningful participation in governance decisions. This is both an ethical principle and a practical way to catch problems that technical experts miss.

6. Environmental Costs

Large AI models use significant amounts of energy and water for training and operation. The environmental impact of AI is being incorporated into ethics frameworks with increasing seriousness. Sustainable AI development — minimising environmental footprint while maximising benefit — is becoming a recognised responsibility.

Ethical AI Checklist

This is a starting point, not a comprehensive compliance framework. Adapt it to your specific context.

Before You Start:

- What is this AI going to do, and who will it affect?

- Is there any group who could be harmed more than others?

- Does my training data represent all the people this system will encounter?

- Have I identified what “fairness” means for my specific use case?

During Development:

- Have I tested the model’s performance across different demographic groups?

- Have I documented the training data, model architecture, and known limitations?

- Is there a human override mechanism built into the design?

- Have I checked data collection and use against applicable privacy law (GDPR, DPDPA)?

Before Deployment:

- Have I completed a Fundamental Rights Impact Assessment or equivalent?

- Is there a mechanism for people affected by AI decisions to challenge or appeal?

- Do users know they are interacting with AI?

- Is there an incident monitoring process in place?

After Deployment:

- Am I monitoring the system’s real-world performance regularly?

- Do I have a process for identifying and responding to problems?

- Have I scheduled a review of the system’s ethical profile in six months?

- Are the people most affected by this system aware it exists and able to raise concerns?

Frequently Asked Questions

Q1. What are the biggest ethical concerns in AI right now?

The five most significant concerns are: bias and discrimination (AI replicating historical unfairness), lack of transparency (systems that can’t explain their decisions), privacy violations (data used beyond what people consented to), accountability gaps (unclear responsibility when AI causes harm), and insufficient human control in high-stakes decisions.

Q2. How can AI bias actually be reduced in practice?

By using diverse and representative training data, testing model performance across demographic groups at multiple development stages, applying technical bias mitigation tools, conducting independent fairness audits before deployment, and continuing to monitor outcomes after release. Bias reduction requires ongoing attention — it is not a one-time fix.

Q3. What is the AI black box problem in simple terms?

When an AI makes a decision, it cannot always explain why in a way a human could understand. For decisions that significantly affect people’s lives (loans, medical diagnoses, job applications), this is a serious problem. Explainable AI (XAI) research is developing tools to make AI more interpretable, though these provide approximations rather than complete explanations.

Q4. Is AI regulated in India?

India has a growing AI policy landscape. The Digital Personal Data Protection Act 2023 covers data used in AI systems. The IndiaAI Mission includes responsible AI as a priority. NITI Aayog has published responsible AI principles. India participates in the Global Partnership on AI. A comprehensive AI-specific law similar to the EU AI Act has not yet been enacted, though policy development is actively ongoing.

Q5. How does AI ethics affect students and researchers?

Students and researchers who build AI systems, especially systems used on real people, need to understand AI ethics. They should use diverse data, check for bias, clearly explain limitations, include human oversight, and follow their institution’s ethics review requirements. If their systems are placed on the EU market or used in the EU, the EU AI Act may also apply. However, the Act excludes AI systems developed and put into service solely for scientific research and development.

Final Thoughts

AI is not going away. Neither is the responsibility that comes with it.

The question is not whether AI will shape healthcare, education, employment, and public life — it already does, at enormous scale. The question is whether it does so fairly, transparently, and in ways that respect the people it affects.

The good news is that the tools, frameworks, and knowledge needed to build ethical AI are more developed and more accessible than they have ever been. This is not a problem without solutions. It is a problem where the solutions require consistent, deliberate choices by the people building and deploying AI systems.

If you are a student curious about AI ethics, this guide is your starting point. If you are a researcher or developer building AI systems, the checklist above gives you a practical starting framework. If you are a business owner or policy analyst thinking about AI deployment, the principles and questions in this guide apply directly to your decisions.

The core question of AI ethics is not technical. It is human.

Before we build, we should ask: who could this harm, and what are we doing about it?

Before we deploy, we should be able to answer that question honestly.

“AI regulation is not a barrier — it’s a framework for responsible innovation.”


References:

- UNESCO Recommendation on AI Ethics: https://www.unesco.org/en/artificial-intelligence/recommendation-ethics

- EU High-Level Expert Group — Trustworthy AI Guidelines: https://digital-strategy.ec.europa.eu/en/library/ethics-guidelines-trustworthy-ai

- OECD AI Principles: https://oecd.ai/en/ai-principles

- NITI Aayog — Responsible AI for All: https://www.niti.gov.in/sites/default/files/2021-02/Responsible-AI-22022021.pdf

- IBM AI Fairness 360: https://aif360.mybluemix.net/

- Microsoft Fairlearn: https://fairlearn.org/

- India Digital Personal Data Protection Act 2023:

Disclaimer: This article is for educational and informational purposes only. It is based on publicly available research and policy documents. It does not constitute professional, legal, or technical advice. AI ethics policy and research evolve rapidly. Always consult official sources and qualified professionals for guidance on specific situations.