Last Updated: April 20, 2026

Module 7 Complete Guide – AI in Academic Research

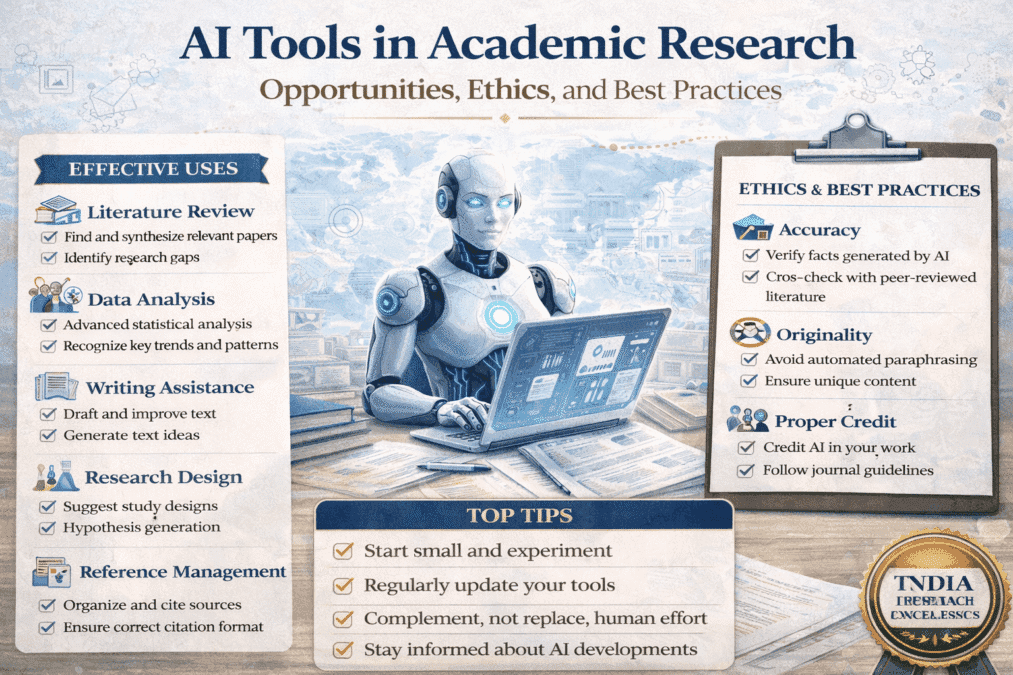

AI Tools in Academic Research

Academic Writing Mastery: The Complete 2026 Guide To Research Papers, Thesis & Dissertation Writing

Why Every Researcher Needs to Understand AI Tools Right Now

Here’s what nobody is telling PhD students about artificial intelligence: the question is no longer whether AI is changing academic research—it already has. The real question is whether you’re using it ethically, strategically, and in ways that actually build your skills rather than bypass them.

AI tools like ChatGPT, Claude, and research assistants have arrived faster than university policies, journal guidelines, or supervisor training. You’re navigating genuinely new terrain. Some students are using AI brilliantly to work smarter. Others are unknowingly crossing ethical lines. Most are somewhere in between—confused about what’s allowed, worried about doing the wrong thing, unsure whether to disclose what they’ve done.

This module gives you clear, practical answers. You’ll learn exactly what AI can legitimately help with (more than you might think), what it absolutely cannot and should not do (critical to understand), how institutional and journal policies work, how to disclose AI use properly, and how to build the AI literacy that’s becoming a basic research skill.

This comprehensive guide covers:

- The AI tool landscape available to researchers in 2024–2026

- What AI can legitimately help you with (and worked examples)

- What AI cannot and should not do—ever

- How to navigate institutional and journal policies

- Proper AI disclosure and documentation

- Practical step-by-step workflows for responsible AI use

- How to build genuine AI literacy

- Special considerations for law students and legal research

Whether you’re just starting your research or deep into your thesis, understanding AI tools is no longer optional. It’s part of being a researcher in 2026.

Understanding the AI Tool Landscape (2024–2026)

The AI ecosystem is evolving monthly. Here’s a practical overview of what’s available, what each tool does well, and where its limitations lie.

Large Language Models (LLMs)

These are the conversational AI tools most researchers encounter first. Each has distinct strengths and weaknesses.

| Tool | Best For | Key Limitations |
| --- | --- | --- |
| ChatGPT (OpenAI) – GPT-4/4.5 | Writing text, brainstorming, code help, translation. | Knowledge cutoff, limited internet access in the base version, occasional hallucinations. |
| Claude (Anthropic) – Opus, Sonnet, Haiku | Detailed analysis, long documents (up to 200k tokens), complex instructions, ethical reasoning. | Knowledge cutoff, occasional errors. |
| Google Gemini | Real-time web search, Google Scholar/Drive integration. | Sometimes less consistent in complex reasoning. |
| Microsoft Copilot | Integration with Word, Excel, PowerPoint. | May not handle complex research tasks as well. |

The critical limitation all LLMs share: they sometimes generate false information with complete confidence. They may cite papers that don’t exist, invent statistics, or get research findings wrong. You are responsible for verifying everything.

Research-Specific AI Tools

These tools are purpose-built for academic work and generally more reliable for specific research tasks:

- Elicit – AI research assistant for finding papers, extracting data, and synthesizing findings. Has validated methods for systematic reviews as of 2025. Free and paid versions. elicit.org

- Consensus – Answers research questions by synthesizing peer-reviewed papers with source links. consensus.app

- Connected Papers – Visual graphs showing how papers relate to each other. Excellent for understanding literature fields. connectedpapers.com

- Research Rabbit – Literature mapping and paper discovery. Free and intuitive. researchrabbit.ai

- Scite – Smart citations showing whether papers have been supported, contrasted, or mentioned by later work. Essential for credibility assessment. scite.ai

- Semantic Scholar – AI-powered academic search with paper recommendations and citation tracking. Free. semanticscholar.org

- Paperpal – AI writing assistant designed for academic researchers, popular in India and Asia. 2026 edition includes field-specific language suggestions. paperpal.com

- Thesify AI – Specialized pre-submission checks for PhD theses: structure, consistency, citation patterns. Particularly useful in final review stages. thesify.ai

AI Writing and Coding Assistants

- Grammarly – Grammar, style, tone checking, and plagiarism detection. Premium version most useful. grammarly.com

- GitHub Copilot – AI pair programmer that suggests code as you type. Extremely helpful for computational research. github.com/features/copilot

- ChatGPT Advanced Data Analysis – Upload datasets and perform statistical operations in natural language. chatgpt.com

- Julius AI – Specialized in data analysis and visualization; generates publication-ready graphs. julius.ai

What AI Can Properly Help With

AI tools have real value when used the right way. Here are the legitimate uses—each with a worked example showing exactly what appropriate use looks like.

Literature Search and Discovery

AI tools excel at finding connections between papers that aren’t obvious, suggesting search terms, and mapping research landscapes.

Example appropriate prompt:

“I’m researching climate change impacts on urban infrastructure in developing nations. I’ve found papers on climate adaptation and on infrastructure challenges, but I’m struggling to find work that combines both. Can you help me identify search terms and paper connections I might have missed?”

Why this is OK: You’re using AI as a research assistant to help you find things—not to replace your thinking. You still read papers yourself, judge their quality, and put findings together in your own way.

Understanding Complex Concepts

AI can explain difficult theories or methods in simpler terms, break down statistical concepts step-by-step, or translate disciplinary jargon into accessible language.

Example appropriate prompt:

“I’m reading about structural equation modeling for the first time. Can you explain what path coefficients mean and how they differ from regular regression coefficients? I need to understand this well enough to decide if SEM is appropriate for my research design.”

Why this is OK: You’re using AI like a tutor to help you understand better. The learning is still yours.

Brainstorming and Idea Generation

AI can generate potential research angles, suggest alternative theoretical frameworks, propose methodological approaches, and help you think through counterarguments.

Example appropriate prompt:

“I’m designing a mixed-methods study on teacher retention in rural schools. I plan to use surveys followed by interviews. What other methodological approaches might I consider? What are the strengths and weaknesses of my current design?”

Why this is OK: You’re using AI as a thinking partner. You evaluate its suggestions critically and make your own decisions.

Improving Writing Clarity

AI can point out unclear sentences, find grammar mistakes, suggest more precise words, and help reorganize paragraphs.

The right approach:

“Here’s a paragraph from my methodology section. It’s unclear and too wordy. Can you suggest ways to make it shorter and more direct while keeping it technically accurate?”

Why this is OK: You wrote the original content. AI is helping you express it better—similar to a writing center consultation. The ideas and substance are still yours.

Data Organization and Coding Assistance

AI can clean messy data files, write code for analysis, calculate basic statistics, create visualizations, and spot data entry errors.

Appropriate use:

“I have survey data with some inconsistent entries. Can you help me write Python code to make all the date formats consistent and check for duplicate responses?”

Why this is OK: You’re using AI as a coding helper for technical tasks. You still design the analysis, understand the results, and draw the conclusions.
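As a rough illustration of the kind of script such a prompt might produce, here is a minimal sketch using only the Python standard library. The column names (`respondent_id`, `date`), the list of date formats, and the sample rows are all hypothetical, and the day-first reading of `04/03/2025` is an assumption you would need to confirm against how your own survey tool exports dates.

```python
from datetime import datetime

# Hypothetical formats that might appear in a messy survey export.
# Assumption: slash- and dash-separated dates are day-first (DD/MM/YYYY).
KNOWN_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%d-%m-%Y", "%B %d, %Y")


def normalize_date(raw):
    """Return the date as YYYY-MM-DD, or None if no known format matches."""
    text = raw.strip()
    for fmt in KNOWN_FORMATS:
        try:
            return datetime.strptime(text, fmt).strftime("%Y-%m-%d")
        except ValueError:
            continue
    return None


def clean_responses(rows):
    """Normalize dates and set aside duplicate or unparseable rows for review."""
    cleaned, duplicates, unparsed = [], [], []
    seen = set()
    for row in rows:
        iso_date = normalize_date(row["date"])
        if iso_date is None:
            unparsed.append(row)  # never guess: a human decides what this means
            continue
        key = (row["respondent_id"], iso_date)
        if key in seen:
            duplicates.append(row)
            continue
        seen.add(key)
        cleaned.append({**row, "date": iso_date})
    return cleaned, duplicates, unparsed


# Made-up sample data for illustration only.
sample = [
    {"respondent_id": "R01", "date": "2025-03-04", "score": 4},
    {"respondent_id": "R02", "date": "04/03/2025", "score": 5},
    {"respondent_id": "R01", "date": "04-03-2025", "score": 4},
    {"respondent_id": "R03", "date": "March 5, 2025", "score": 3},
    {"respondent_id": "R04", "date": "sometime in March", "score": 2},
]

cleaned, duplicates, unparsed = clean_responses(sample)
print(len(cleaned), "clean,", len(duplicates), "duplicate,", len(unparsed), "unparsed")
```

The script sets duplicates and unparseable rows aside instead of silently dropping them, so you can inspect them and document every transformation yourself.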

Language Support for Non-Native English Speakers

AI can translate papers, help improve academic English, suggest technical terms, and identify phrases that might be culturally confusing.

Why this is OK: You’re getting language help—similar to working with an English editor. The ideas and analysis are yours.

What AI Cannot and Should Not Do

Understanding where AI falls short—and what crosses ethical lines—is just as important as knowing when to use it.

AI Cannot Replace Your Thinking

These decisions must always be yours:

- Deciding your research question or theoretical framework

- Designing your research methods

- Interpreting your findings or drawing conclusions

- Determining what your work means for your field

Misuse: “Here’s my dataset. Analyze it and tell me what I should conclude.”

Better approach: “I’ve analyzed my data and found X. I interpret this as suggesting Y. Are there alternative interpretations I should consider?”

AI Cannot Guarantee Facts Are True

This is perhaps the most dangerous misunderstanding about AI tools. All current LLMs sometimes fabricate information—and they sound 100% confident when they do it. They may:

- Cite papers that don’t exist

- Get research findings completely wrong

- Invent statistics that sound plausible

- Create facts that seem real but aren’t

You are responsible for accuracy. If you include AI’s mistakes in your thesis, you face the consequences—possibly rejection, failing your defense, or reputation damage.

How to protect yourself:

- Never use AI-created citations without verifying each one exists

- Check AI’s claims by reading the actual sources

- Use AI to help you find things—not as your source of truth

- Treat all AI outputs as starting points requiring verification
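One way to make these habits concrete is to keep a small verification log for every reference an AI tool suggests. The sketch below is only an illustration: the `CitationCheck` fields and the placeholder reference names are hypothetical, and the two flags record checks you perform yourself, not anything the code can confirm for you.

```python
from dataclasses import dataclass


@dataclass
class CitationCheck:
    """One AI-suggested reference and what you have personally confirmed."""
    reference: str
    source_located: bool = False  # you found it via your library, publisher, or DOI
    read_by_me: bool = False      # you read the source, not just an AI summary


def not_yet_citable(checks):
    """Return references that still fail at least one manual check."""
    return [c.reference for c in checks
            if not (c.source_located and c.read_by_me)]


# Placeholder entries for illustration only.
checks = [
    CitationCheck("AI-suggested paper A", source_located=True, read_by_me=True),
    CitationCheck("AI-suggested paper B", source_located=True),
    CitationCheck("AI-suggested paper C"),
]

for reference in not_yet_citable(checks):
    print("Do not cite yet:", reference)
```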

AI Cannot Do Original Research

Your thesis must represent original work from you. If AI writes major sections, the work isn’t yours. The same standard applies whether the writing is outsourced to another person or to an AI tool: the distinction between getting writing help and outsourcing intellectual work is real and significant.

The crucial distinction:

“Help me express this idea more clearly” = Appropriate

“Write my literature review about topic X” = Academic misconduct

AI Cannot Handle Field-Specific Nuance

AI may not understand methodological debates specific to your field, the unwritten rules and expectations of your discipline, or why certain theoretical choices matter. Always filter AI suggestions through your field knowledge and consult human experts for field-specific matters.

AI Cannot Make Ethical Decisions

Don’t rely on AI to prepare IRB applications without human review, decide whether research is ethical, evaluate consent processes, or assess risk to participants. Ethics requires a real person to think about the situation and make the call.

Navigating Institutional and Journal Policies

Policies vary widely and change frequently. Here’s how to navigate this landscape without accidentally violating rules you didn’t know existed.

Common Institutional Approaches

| Policy Type | What It Means for You |
| --- | --- |
| Complete ban | AI use in student work is prohibited entirely. Check your institution’s specific policy; such bans exist even if they are controversial. |
| Permitted with disclosure | Most common globally. AI use allowed if properly documented. You must state: ‘I used [Tool] to [Task].’ |
| Permitted for specific tasks | Grammar checking, coding, literature discovery = OK. Generating content, analyzing data without understanding = not OK. |
| No formal policy yet | Absence of policy doesn’t mean ‘anything goes.’ General academic integrity standards still apply. |

Indian Context: UGC Guidelines 2025

The University Grants Commission (UGC) released comprehensive guidelines in 2025 for AI use in higher education research. The Indian National Academy of Engineering (INAE) also published detailed ethics frameworks specifically for Indian researchers. These emphasize:

- Transparency in AI tool usage at all research stages

- Maintaining human oversight in all research decisions

- Proper attribution and disclosure of AI assistance

- Building AI literacy as a researcher skill

- Ethical boundaries calibrated to different research stages

UGC Guidelines: ugc.gov.in | INAE Framework: inae.in

Journal Policies

Always check the specific journal’s AI policy before submission. Key positions as of 2024–2026:

- Nature Portfolio: AI for improving readability OK; AI for generating scientific content not permitted. Requires disclosure. nature.com/nature/editorial-policies

- Science: AI for editing acceptable, not for creating content. Disclosure required.

- PLOS: Language editing and formatting permitted. AI-generated images or data prohibited. Transparency required.

When policies conflict: Follow the more restrictive one. Your institution’s rules govern your degree; the journal’s rules govern publication.

How to Check Your Institution’s Policy

Where to look: academic integrity office website, library research guides, graduate school handbook, department-specific guidelines, your supervisor’s expectations.

What to ask your supervisor directly:

- “What’s our department’s stance on AI use in thesis research?”

- “Are there specific AI tools you recommend or prohibit?”

- “How should I disclose AI use in my thesis?”

- “What tasks do you consider appropriate for AI assistance?”

Proper AI Disclosure and Documentation

Transparency isn’t just required by policy—it’s the mark of a researcher with integrity. Even when AI use is permitted, you must typically disclose it.

What to Disclose

Include in your disclosure: which AI tool(s) you used (name and version), what tasks you used them for, and how you verified AI outputs.

Example thesis acknowledgment:

“During the preparation of this thesis, I used Claude 3.5 Sonnet to improve the clarity and conciseness of my writing in several sections. I used ChatGPT-4 to help debug Python code for my statistical analysis. All AI-generated suggestions were reviewed and verified by me, and I take full responsibility for the content of this work.”

Example journal submission disclosure:

“The authors used Grammarly Premium for grammar and style checking throughout the manuscript. ChatGPT-4 was used to generate initial code for data visualization, which was then modified and validated by the authors. No AI tools were used to generate scientific content or conclusions.”
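If you log AI use as you go, drafting a statement like the ones above becomes mostly mechanical. The following is a minimal sketch, assuming a simple log with the three elements listed under What to Disclose (tool and version, task, verification). The `AIUse` structure, the sample entries, and the sentence template are hypothetical and should be adapted to your institution’s or journal’s required wording.

```python
from dataclasses import dataclass


@dataclass
class AIUse:
    """One logged use of an AI tool during the project."""
    tool: str
    version: str
    task: str          # phrased to follow "I used <tool> to ..."
    verification: str  # phrased as a full clause, e.g. "I reviewed ..."


def disclosure_statement(entries):
    """Build a draft disclosure paragraph from the usage log."""
    if not entries:
        return "No AI tools were used in the preparation of this work."
    sentences = [
        f"I used {e.tool} ({e.version}) to {e.task}; {e.verification}."
        for e in entries
    ]
    closing = "I take full responsibility for the content of this work."
    return " ".join(sentences + [closing])


# Illustrative log entries only.
log = [
    AIUse("Claude", "3.5 Sonnet",
          "improve the clarity of my writing in several sections",
          "I reviewed every suggested edit"),
    AIUse("ChatGPT", "GPT-4",
          "help debug Python code for my statistical analysis",
          "I re-ran and checked all outputs myself"),
]

print(disclosure_statement(log))
```

Treat the generated text as a first draft: you still decide where the disclosure goes and whether it accurately describes what you did.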

What NOT to Disclose

You don’t need to disclose: using Google or Google Scholar (standard research tools), basic spell-check built into word processors, citation management software (Zotero, Mendeley), or using AI to understand concepts during learning (similar to reading textbooks). The key rule: disclose AI when it directly contributes to your final work product.

Where to Disclose

- In your thesis: Acknowledgments for general AI assistance; Methods section if AI was used for analysis; footnotes if AI helped with specific sections

- In journal submissions: Check the specific journal’s requirements—some want it in Methods, others in Acknowledgments, some require a separate AI disclosure statement

- In presentations: Generally not necessary unless directly relevant to the work presented

Citing AI Tools

Citation formats for AI are still being standardized. When you include AI-generated text in your work, cite it:

APA 7th (adapted):

OpenAI. (2024). ChatGPT (GPT-4) [Large language model]. https://chat.openai.com/

Chicago 18th (adapted):

Text generated by ChatGPT, OpenAI, February 2026, https://chat.openai.com/.

Always follow your institution or journal’s specific requirements when available—these take precedence over general citation formats.

Practical Workflows: Integrating AI Responsibly

Here are concrete step-by-step workflows for common research tasks, showing exactly how to integrate AI responsibly.

Literature Review Workflow

| Stage | What You Do / What AI Helps With |
| --- | --- |
| Stage 1: Discovery | YOU search traditional databases. USE AI tools (Elicit, Research Rabbit, Connected Papers) to find additional papers and map connections. |
| Stage 2: Organization | Import papers into Zotero/Mendeley. USE AI summaries to quickly assess relevance, but YOU read and annotate every paper you’ll cite. |
| Stage 3: Synthesis | YOU identify themes and patterns. ASK AI: ‘I’ve identified these three themes. Are there other ways to organize these findings?’ YOU write the literature review. |
| Stage 4: Refinement | YOU revise based on supervisor feedback. USE AI writing assistants to improve clarity of specific sentences. YOU make all final decisions. |

Sample disclosure:

“I used Elicit and Research Rabbit to identify relevant literature beyond traditional database searches. All papers included in the literature review were read and analyzed by me.”

Data Analysis Workflow

| Stage | What You Do / What AI Helps With |
| --- | --- |
| Stage 1: Preparation | USE AI coding assistants to write data cleaning scripts. YOU verify cleaned data is correct. YOU document all transformations. |
| Stage 2: Analysis | YOU design the analysis strategy. USE AI to write code for statistical tests. YOU run analyses and examine outputs. YOU verify results make sense. |
| Stage 3: Interpretation | YOU interpret what findings mean. ASK AI: ‘I found correlation X. What alternative explanations might exist?’ YOU determine which interpretation is most defensible. |
| Stage 4: Presentation | USE AI to generate visualization code. YOU refine visualizations. YOU write results and discussion sections entirely. |

Sample disclosure:

“GitHub Copilot was used to assist with writing Python code for statistical analyses. All analyses were designed, executed, and interpreted by the researcher. All statistical outputs were verified manually.”

Writing and Revision Workflow

- Stage 1 – Drafting: YOU write the first draft of all sections. Content, arguments, and analysis are entirely yours.

- Stage 2 – Revision: YOU revise based on your own review and supervisor feedback. Use AI writing assistants to improve specific sentences or paragraphs.

- Stage 3 – Polishing: Use grammar checkers (Grammarly, etc.). Ask AI about particularly awkward sentences. YOU make all final decisions.

Sample disclosure: “Grammarly and ChatGPT were used to improve writing clarity and grammar. All content was written by the author.”

Ethical Frameworks for AI Decision-Making

When you’re uncertain whether an AI use is appropriate, apply these five tests. If any gives a concerning answer, reconsider.

The Five Tests for Ethical AI Use

| Test | Ask Yourself | If the Answer Is Concerning |
| --- | --- | --- |
| 1. The Transparency Test | Would I be comfortable disclosing exactly how I used AI for this task? | If no, the use is probably inappropriate. |
| 2. The Learning Test | Does using AI for this task prevent me from developing skills I need? | If it prevents learning, reconsider the use. |
| 3. The Attribution Test | If AI generated this content, whose work is it? | If it’s substantively AI’s work, you shouldn’t include it without full, explicit disclosure. |
| 4. The Replacement Test | Could I produce similar work without AI, just more slowly? | If no, you may be relying too heavily on AI. |
| 5. The Verification Test | Can I verify that AI output is correct and appropriate? | If no, don’t use it. You’re responsible for everything in your thesis. |

AI Detection Tools: What You Need to Know

Tools like Turnitin’s AI detector and GPTZero claim to detect AI-generated text. Understanding their current limitations is important for every researcher.

The Current State of AI Detection (2026)

- Turnitin 2026 benchmarks: Detects approximately 70–75% of fully AI-generated academic text, but still produces false positives in 8–12% of cases. Particularly struggles with AI-edited human writing.

- Grammarly’s 2025 findings: Detection tools struggle most with non-native English speakers, AI-assisted (not AI-written) text, and heavily edited documents blending human and AI contributions.

- Current consensus: Detection tools are improving but not reliable enough to definitively prove AI use. They raise suspicions; human assessment remains more reliable.
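To see why a flag alone cannot prove AI use, it helps to work through the arithmetic. The sketch below takes the midpoints of the ranges quoted above (72.5% detection, 10% false positives) and applies Bayes’ rule. It makes two assumptions of its own: that the false-positive figure is the share of human-written documents wrongly flagged, and that 10% of submitted documents are fully AI-generated. That 10% figure is invented for illustration, not a reported statistic.

```python
def flag_reliability(detection_rate, false_positive_rate, ai_share):
    """Share of flagged documents that are actually AI-generated (Bayes' rule)."""
    true_flags = detection_rate * ai_share
    false_flags = false_positive_rate * (1 - ai_share)
    return true_flags / (true_flags + false_flags)


# Midpoints of the quoted ranges; ai_share is a made-up assumption.
ppv = flag_reliability(detection_rate=0.725, false_positive_rate=0.10, ai_share=0.10)
print(f"{ppv:.0%} of flagged documents would be AI-generated")
```

Under these assumptions, fewer than half of the flagged documents would actually be AI-generated, which is why a flag should prompt a conversation and a look at drafts, not a verdict. Changing `ai_share` shows how strongly the result depends on a number nobody knows precisely.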

What This Means for You

- Don’t assume you can hide extensive AI use just because detection is imperfect: style shifts, your supervisor’s knowledge of your writing, or an inability to explain details in your defense may reveal it

- Keep documentation of your writing process (drafts, notes, supervisor emails) as evidence of your work

- If your human-written work is flagged incorrectly, defend it by showing drafts and explaining your process

- Focus on using AI ethically rather than trying to evade detection

Case Studies: AI Use in Research Scenarios

Three scenarios illustrate how these principles apply in practice, including what goes wrong and how to do it right.

Case Study 1: The Literature Review

Scenario: Maria is starting her dissertation on climate migration. She has 150 papers to synthesize.

Problematic approach: Maria inputs all 150 papers into AI and asks it to write her literature review. She makes minor edits and submits it. She doesn’t deeply understand the field; the AI-generated review may miss important nuances or misrepresent findings.

Better approach: Maria reads all papers herself and takes notes. She uses Elicit and Connected Papers to help organize papers and identify themes. She asks AI: “I see Paper A argues X and Paper B argues Y. Are these complementary or contradictory?” She writes the review herself, using AI writing assistants only for clarity in specific sentences.

Case Study 2: Statistical Analysis

Scenario: James is analyzing survey data for his Master’s thesis but finds SPSS confusing.

Problematic approach: James uploads his dataset to ChatGPT and says “analyze this and tell me what’s significant.” He reports AI outputs without understanding them. If questioned in his defense, he can’t explain his statistical choices.

Better approach: James learns the basics of his planned analysis through textbooks. He uses AI to help write R code for the analysis—but he runs the analysis himself, examines outputs, consults a statistics advisor for unexpected results, and writes the results section entirely.

Case Study 3: Writing Assistance for Non-Native Speakers

Scenario: Kenji is a non-native English speaker. His ideas are strong but writing is sometimes unclear.

Appropriate approach: Kenji writes each section himself, ensuring all ideas are his own. He then uses Paperpal and Grammarly to improve clarity and grammar. For unclear sentences, he inputs: “I’m trying to say [explanation]. How can I phrase this in academic English?” Before submission, he uses Thesify AI for pre-submission checks on formatting consistency and citation patterns.

Why appropriate: The ideas, analysis, structure, and conclusions are entirely Kenji’s. AI is helping with language presentation and quality checking—the same as working with an English editor and careful proofreader, both accepted practices.

Discipline-Specific AI Norms

AI use norms vary significantly across disciplines. Here’s what to consider in different fields.

| Field | AI Use Norms and Key Cautions |
| --- | --- |
| STEM | More accepting of AI for code generation, data analysis, literature search. Still prohibited: AI-generated scientific writing without disclosure, AI-designed experiments, AI interpretation of results. |
| Social Sciences | Mixed acceptance. Quantitative: similar to STEM. Qualitative: more skeptical, because AI may miss cultural nuances in coding and interpretive traditions require human understanding. |
| Humanities | Most careful. Writing is often the work itself. Deep interpretation, theory, and voice matter. Limited appropriate uses: translating sources, finding archival material. |
| Law | Accuracy is paramount: legal errors have real consequences. AI has fabricated cases in legal contexts. Appropriate: initial research questions, understanding concepts, citation formatting. High risk: case law research, statutory interpretation, doctrinal argument development. |

Key Takeaways from Module 7

You now understand the AI tool landscape available to researchers in 2024–2026 and can navigate it strategically. The core principles are straightforward, even if applying them takes judgment:

| What AI Can Help With (Use Freely with Disclosure) |
| --- |
| Literature discovery and mapping research fields |
| Understanding complex concepts (like a tutor) |
| Brainstorming approaches and methodological alternatives |
| Improving writing clarity and grammar |
| Writing and debugging code for data analysis |
| Language support for non-native English speakers |
| Pre-submission formatting and consistency checks (e.g., Thesify AI) |

| What AI Cannot Do (Keep These Tasks Yours) |
| --- |
| Make your research decisions, choose your theory, design your methods |
| Guarantee that any fact, citation, or claim is accurate |
| Write your thesis sections (this is your intellectual contribution) |
| Handle field-specific nuance your supervisor would catch |
| Make ethical decisions about your research |

Most importantly: AI is a tool—powerful but limited, useful but fallible. Your responsibility as a researcher remains unchanged: to produce original, rigorous, ethical scholarship that advances knowledge. AI can help you work more efficiently. It cannot replace your intellectual contribution.

The key to ethical AI use: maintain integrity, ensure transparency, verify everything, and never forget that your thesis must represent your thinking and your contribution to your field.

Legal Research and Writing: Complete Guide for Law Students and Legal Researchers

FAQs

Q: How can AI tools help in academic research?

AI tools can assist with specific research tasks: literature mapping (identifying key debates and search terms); drafting and editing prose for clarity; summarising long documents; generating initial outlines; identifying gaps in arguments; and automating reference formatting. AI accelerates tasks that would otherwise take more time but cannot substitute for the intellectual work of research — formulating original questions, designing methodology, analysing data, and constructing arguments. The line between acceleration and substitution is the foundational distinction in responsible AI use for research.

Q: Is it ethical to use AI in academic research and writing?

AI use in academic research is ethical when it accelerates your intellectual work without substituting for it. It is unethical when AI produces content you present as your own original thinking — arguments you have not made, analyses you have not performed, citations you have not verified. Most universities and journals now require disclosure of AI use. The test: can you explain, in your own words, every claim in your research? If AI produced a claim you cannot explain, it is not your intellectual work and should not appear in your submission.

Q: What AI tools do researchers use in 2026?

Commonly used AI research tools in 2026 include: ChatGPT, Claude, and Gemini for writing assistance and literature mapping; Elicit and Consensus for AI-assisted literature search; Research Rabbit and Connected Papers for citation network mapping; Zotero with AI plugins for reference management; Grammarly and similar tools for grammar and style; and discipline-specific tools like Westlaw AI for legal research. Database-integrated AI features (Scopus AI, Web of Science AI, SCC Online AI) are increasingly available. Tool availability and capability change rapidly — verify current features before relying on any specific tool.

Q: Do journals require disclosure of AI use in research papers?

Yes — most major publishers now require disclosure of AI use. Nature, Elsevier, Taylor & Francis, Springer, and Wiley all require that AI use in writing or data analysis be disclosed in the methods or acknowledgements section. AI cannot be listed as an author under any publisher’s policy. Indian UGC guidelines increasingly address AI in academic work. Check your target journal’s specific AI disclosure policy before submission — policies vary in detail but the requirement to disclose is now near-universal among reputable publishers.

Q: What are the risks of using AI in academic research?

The main risks are: hallucination (AI generates plausible but false information — particularly dangerous for citations and case law); plagiarism (AI-generated content that closely matches existing text); academic integrity violations (using AI to produce content presented as your own original work); over-reliance (using AI for tasks that build essential research skills); and detection (AI detection tools are imperfect but increasingly used by journals and institutions). The hallucination risk is most serious — never cite a source based on AI output alone without independent verification.

Selected References for Further Reading

AI Ethics and Policy

- UNESCO Guidance for Generative AI in Education and Research (2023) – UNESCO Publishing.

- EU AI Act Research Exemptions (2024) – European Parliament regulation on artificial intelligence.

- UGC Guidelines for AI Use in Higher Education Research (2025) – University Grants Commission, India.

- INAE AI Ethics for Indian Researchers (2025) – Indian National Academy of Engineering Guidelines and Best Practices.

AI in Academic Research

- Thorp, H. H. (2023). ChatGPT Is Fun, But Not an Author. Science, 379(6630), 313.

- Van Dis et al. (2023). ChatGPT: Five Priorities for Research. Nature, 614(7947), 224–226.

- Hutson, M. (2022). Could AI Help You to Write Your Next Paper? Nature, 611(7934), 192–193.

AI Research Tools Documentation

- Elicit: Systematic Reviews with AI – Methods and Validation (2025)

- Paperpal: AI Tools for Academic Writing – 2026 Edition

- Thesify AI: Pre-Submission Analysis for PhD Theses – Technical Documentation (2026)

- Semantic Scholar Academic Search

- Connected Papers – Visual Literature Maps

- Research Rabbit – Literature Discovery

- Scite – Smart Citations

Academic Integrity and AI

- Eaton, S. E. (2025). Academic Integrity in the Age of AI. University of Calgary Press.

- International Center for Academic Integrity (ICAI) – Fundamental Values of Academic Integrity, 5th ed. (2026)

AI Detection

- Turnitin AI Writing Detection: Benchmarks and Accuracy Metrics 2026

- Weber-Wulff et al. (2023). Testing of Detection Tools for AI-Generated Text. International Journal for Educational Integrity, 19(26).

AI Tool Documentation

- Anthropic Claude Model Card (2024)

- OpenAI GPT-4 Technical Report (2024)

- GitHub Copilot – AI Pair Programmer

Research Methods and AI

- Bail, C. A. (2024). Can Generative AI Improve Social Science? PNAS, 121(21).

- Kapoor & Narayanan (2023). Leakage and the Reproducibility Crisis in ML-Based Science. Patterns, 4(9).

Practical Guides

- Mollick, E. R. & Mollick, L. (2023). Using AI to Implement Effective Teaching Strategies in Classrooms. Wharton School.

- Chronicle of Higher Education – AI in Academia

- Inside Higher Ed – AI Tools Coverage

Author

Dr. Rekha Khandelwal, a legal scholar and academic writing expert, is the founder of AspirixWriters. She has extensive experience in guiding students and researchers in writing research papers, theses, and dissertations with clarity and originality. Her work focuses on ethical AI-assisted writing, structured research, and making academic writing simple and effective for learners worldwide.

- Module 1 Overview The Complete Guide to Research Paper and Thesis Structure

- Module 2 Overview The Academic Writing Process: Complete Guide from First Draft to Submission (2026)

- Module 3 Overview Research Methodologies: Complete Guide to Quantitative, Qualitative, Mixed Methods & Legal Research (2026)

- Module 4 Overview Data Analysis and Results Presentation: Complete Guide for Quantitative, Qualitative & Legal Research (2026)

- Module 5 Overview Organization and Academic Tone: Complete Guide to Professional Scholarly Writing (2026)

- Module 6 Overview Peer Review and Publication: Complete Guide from Submission to Acceptance (2026)

- Module 7 Overview AI Tools in Academic Research: Opportunities, Ethics, and Best Practices (2026)

- Module 8 Overview Grant Writing and Research Funding: Complete Guide to Finding Money for Your Research (2026)

- Module 9 Overview Academic Career Development: Complete Guide to Building Your Professional Life in Research (2026)

- Module 10 Overview Research Ethics and the IRB Process: Complete Guide to Doing Research Responsibly (2026)

Research Ethics for Legal Researchers: Privilege, Confidentiality, Vulnerable Participants, and the DPDPA 2023

Research Ethics for Legal Researchers Academic Writing Mastery: The Complete 2026 Guide To Research Papers,…

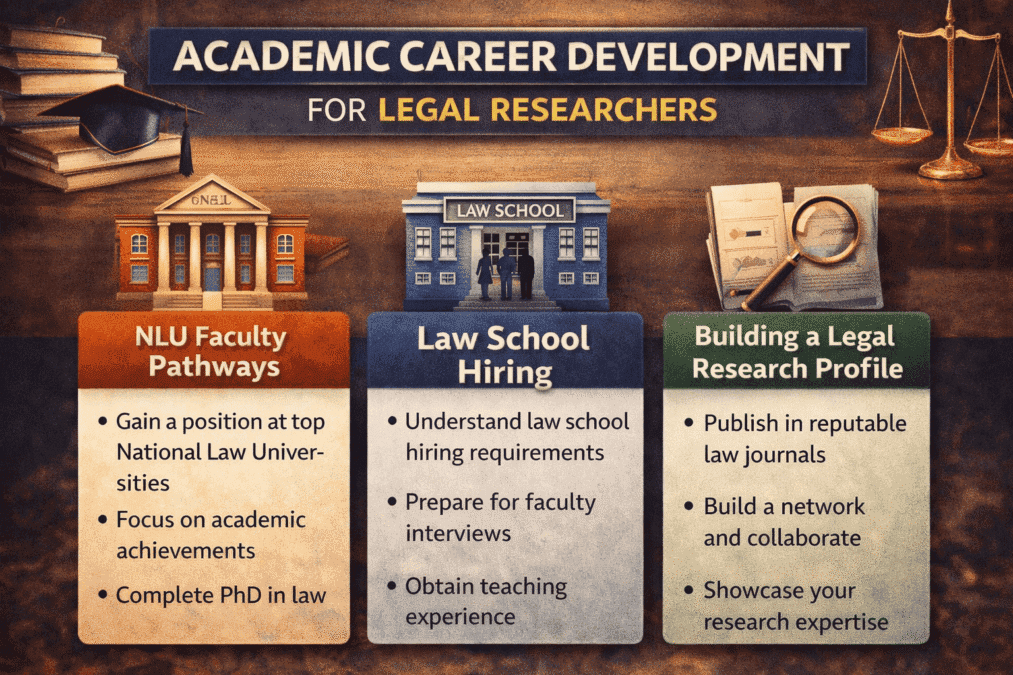

Academic Career Development for Legal Researchers

Academic Career Development for Legal Researchers: NLU Faculty Pathways, Law School Hiring, and Building a…

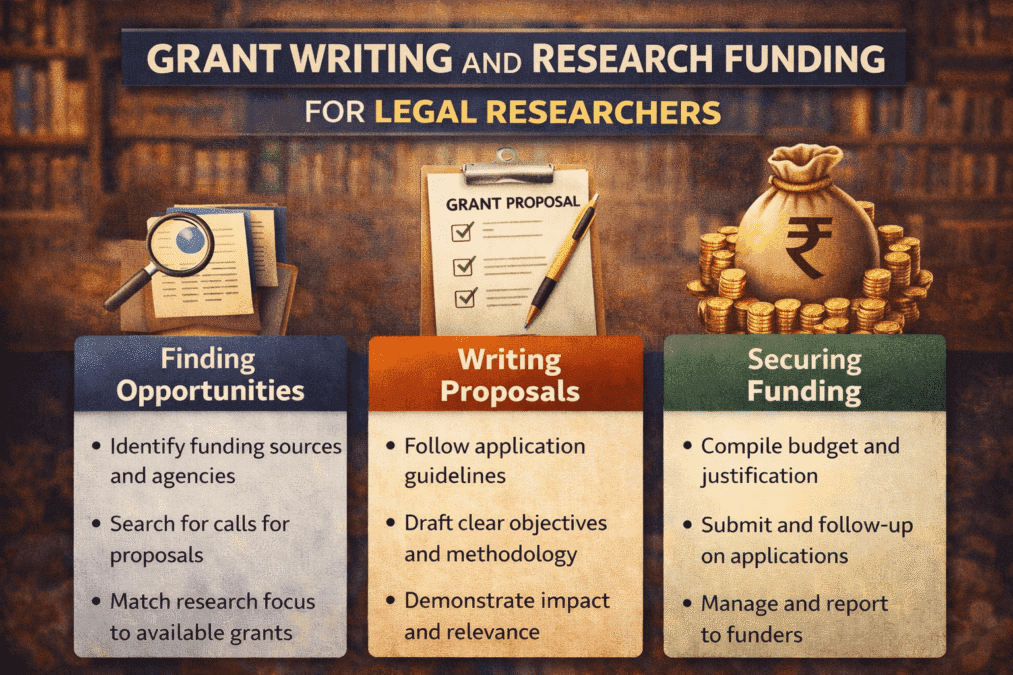

Grant Writing and Research Funding for Legal Researchers

Academic Career Development: Complete Guide To Building Your Professional Life In Research (2026) Back to…

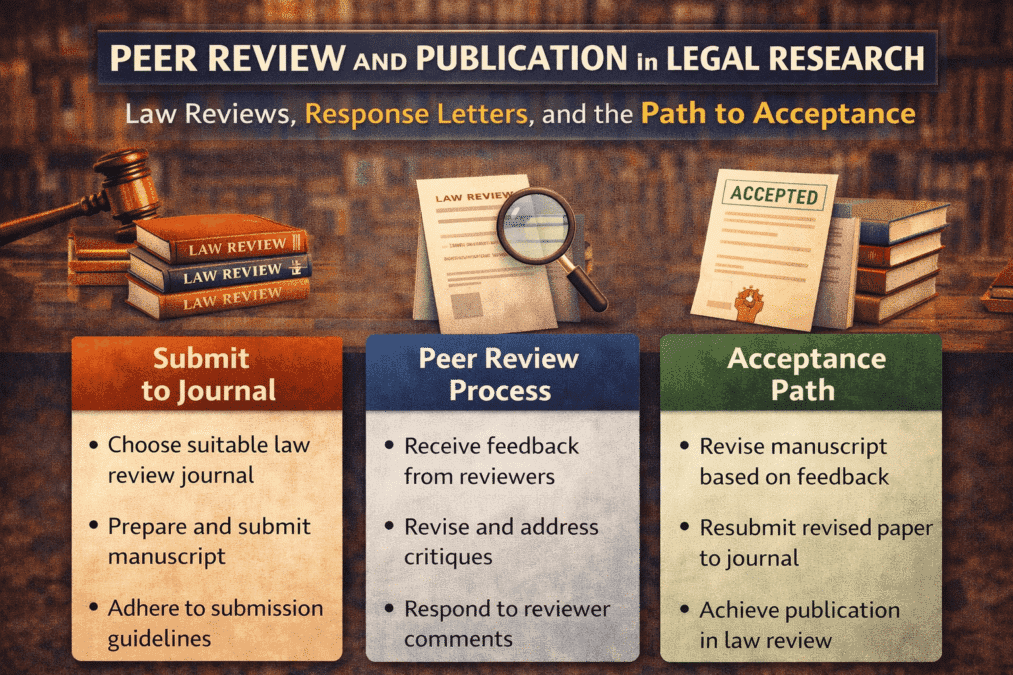

Peer Review and Publication in Legal Research

Peer Review and Publication in Legal Research: Law Reviews, Response Letters, and the Path to…

Academic Writing Mastery: The Complete 2026 Guide to Research Papers, Thesis & Dissertation Writing

Academic Writing Master From Concept to Submission Series Academic Writing Mastery Whether you are writing…

Research Integrity: Data Handling Authorship Ethics and the Indian Regulatory Framework

Cluster Post 5 | Module 10: Research Ethics and the IRB Process From Concept to…