Last Updated: March 31, 2026

Qualitative Data Collection and Analysis

Module 3: Research Methodologies

From Concept to Submission Series | 2026

Academic Writing Mastery: The Complete 2026 Guide To Research Papers, Thesis & Dissertation Writing


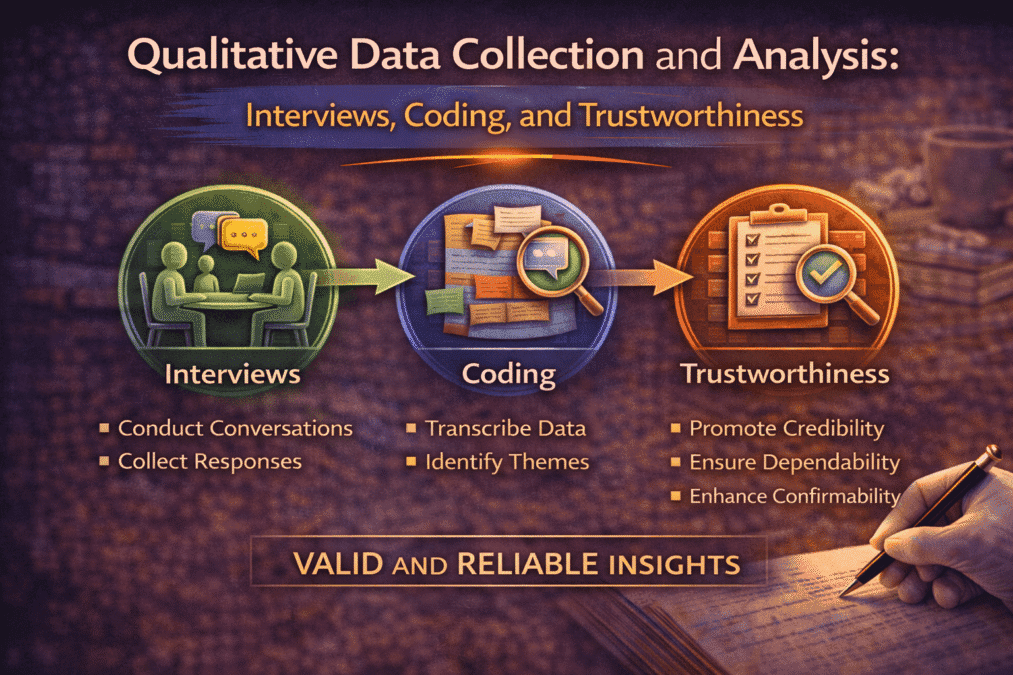

Qualitative Data Collection and Analysis: Interviews, Coding, and Trustworthiness

The module overview covered interview types, focus groups, observation, and thematic analysis. This post goes deeper: how to design and conduct interviews that generate rich data rather than thin responses, the step-by-step coding process with a worked example, the four trustworthiness criteria and how to demonstrate each one in your methodology chapter, and the specific mistakes that make qualitative analysis appear unreliable to reviewers.

Designing the Interview Guide

The interview guide is not a questionnaire. A questionnaire extracts predetermined answers to predetermined questions. An interview guide opens a space for participants to tell you what matters to them about the phenomenon you are studying — including things you did not know to ask about.

A well-designed interview guide for a semi-structured interview has three components: an opening that establishes rapport and explains the purpose, a set of main questions that cover the key areas of interest, and probes for each main question that invite elaboration without directing the response.

Main questions: the open-ended imperative

Every main question should be genuinely open-ended — designed so that the participant cannot answer it with yes, no, or a single word, and so that the researcher’s theoretical assumptions are not embedded in the question itself.

- Closed: “Did peer mentoring help you feel more confident?”

- Leading: “How did peer mentoring help you navigate the challenges of first year?”

- Open: “Can you tell me about your experience of peer mentoring in your first year?”

The closed question can be answered with a single word, and it narrows the topic to confidence before the participant has said anything. The leading question presupposes that there were challenges and that mentoring helped navigate them. The open question presupposes nothing about how the experience went: it invites the participant to describe whatever their experience was, including experiences where mentoring was not helpful or where challenges were not the dominant feature.

A good interview guide has six to ten main questions for a sixty-to-ninety-minute interview. More questions than this produce a rushed interview that skims across topics without depth. The depth comes from probes — follow-up questions that invite the participant to say more about something they have raised.
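As a rough aid when drafting, the two checks above (question count and closed wording) can be sketched in Python. The class name, function name, and list of yes/no stems are all illustrative assumptions, and the stem check is only a crude heuristic: it cannot detect leading questions, which still need a human read.

```python
from dataclasses import dataclass, field

# Hypothetical stems that usually signal a yes/no question.
CLOSED_STEMS = ("did ", "do ", "does ", "is ", "are ", "was ", "were ", "have ", "has ")


@dataclass
class MainQuestion:
    text: str
    probes: list[str] = field(default_factory=list)

    def looks_closed(self) -> bool:
        """Rough heuristic: the question opens with a yes/no stem."""
        return self.text.strip().lower().startswith(CLOSED_STEMS)


def review_guide(questions, low=6, high=10):
    """Return human-readable warnings; an empty list means nothing was flagged."""
    warnings = []
    if not low <= len(questions) <= high:
        warnings.append(f"{len(questions)} main questions; the text suggests {low} to {high}.")
    for question in questions:
        if question.looks_closed():
            warnings.append(f"Possibly closed: {question.text!r}")
    return warnings


guide = [
    MainQuestion("Did peer mentoring help you feel more confident?"),
    MainQuestion("Can you tell me about your experience of peer mentoring in your first year?"),
]
for warning in review_guide(guide):
    print(warning)
```

With this two-question draft, the sketch flags both the short guide and the closed first question, while the open question passes.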

Probes: the source of rich data

Probes are where qualitative interviews generate their most useful data. A probe is a short, neutral follow-up that signals to the participant that you are interested in more detail about what they just said, without steering the direction of the elaboration.

- Elaboration probe: “Can you say more about that?” / “What do you mean by that?”

- Example probe: “Can you give me an example of when that happened?”

- Clarification probe: “I want to make sure I understand — are you saying that…?”

- Contrast probe: “How did that compare to what you’d expected?” / “Was that different from earlier?”

- Silence: Pausing after a participant finishes speaking often prompts further elaboration without requiring any words from the interviewer.

Probes cannot be scripted in advance for each question — they respond to what the participant actually says. The interviewer must listen closely enough to identify what is worth probing. This is a skill that develops with practice, and piloting your interview guide with two or three participants before the main study is the most effective way to develop it.

Conducting Interviews: What the Module Does Not Tell You

Recording and transcription

Record every interview with the participant’s permission. Taking notes during an interview forces you to listen, write, evaluate, and plan your next question all at once, and you cannot do any of these well at the same time. Recording allows you to be fully present in the interview and to produce a complete, verbatim record for analysis.

Transcribe within 48 hours of the interview, while the conversation is fresh in memory. Full verbatim transcription (including pauses, hesitations, laughter, and overlapping speech) captures more information than edited transcription, but the appropriate level of detail depends on your analytical approach. Thematic analysis usually needs a transcript that preserves the participant’s wording in full but omits hesitations and filler words. Conversation analysis requires detailed notation of pauses, overlaps, and other features of delivery.

Managing your own role: reflexivity in practice

The module mentions reflexivity as a qualitative quality criterion. What it does not explain is that reflexivity is not just something you write about in your methodology — it is something you practise during data collection.

Keep a reflexive journal throughout your data collection period. After each interview, write for ten to fifteen minutes: What questions felt like they led the participant? What did you assume rather than ask? What surprised you, and why were you surprised? What did you notice about your own reactions to what participants said? These notes are part of your data and part of your audit trail. They also help you spot patterns in your own interviewing that you can correct in subsequent interviews.
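One way to make the habit stick is to generate the same journal stub after every interview. The sketch below is only an illustration: the function name and the plain-text layout are assumptions, while the four prompts are taken from the paragraph above.

```python
from datetime import date

# The four post-interview prompts from the text.
PROMPTS = (
    "What questions felt like they led the participant?",
    "What did you assume rather than ask?",
    "What surprised you, and why were you surprised?",
    "What did you notice about your own reactions to what participants said?",
)


def journal_stub(participant_id, interview_date):
    """Build a plain-text journal entry with one blank section per prompt."""
    lines = [f"Reflexive journal: {participant_id}, {interview_date.isoformat()}", ""]
    for prompt in PROMPTS:
        lines.extend([prompt, "", ""])
    return "\n".join(lines)


print(journal_stub("P3", date(2026, 1, 15)))
```

Saving each filled-in stub alongside the transcript keeps the journal dated and linked to a specific interview, which is what makes it usable as part of the audit trail.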

Thematic Analysis: The Step-by-Step Process

Braun and Clarke’s reflexive thematic analysis is among the most widely used qualitative analytical methods, and also one of the most widely misunderstood. “We used thematic analysis” in a methodology chapter tells an examiner almost nothing unless it is followed by a description of the specific steps taken. This section gives you those steps, with a worked example.

Phase 1: Familiarisation

Read your transcripts repeatedly before you begin coding — at minimum twice, once for general orientation and once more closely, taking informal notes. The goal is to move from seeing the text as words on a page to engaging with it as data: noticing things that recur, things that seem significant, things that surprise you.

Do not begin coding in this phase. Premature coding produces codes that are too broad, based on first impressions rather than thorough familiarity with the dataset. Familiarisation cannot be rushed, and it is the step most often skipped by researchers under time pressure — with visible consequences for the quality of the analysis that follows.

Phase 2: Initial coding

Code systematically across the entire dataset. An initial code is a short label that captures what is interesting about a specific segment of data — it stays close to the data language rather than abstracting immediately to conceptual categories.

Data extract (from interview with P3, Arts student): “My mentor told me which professor to go to for extension requests, because apparently you can’t just ask anyone — there’s a whole system and if you don’t know it you just… you just don’t get the extension. I had no idea. I would have just failed the assignment.”

Initial codes applied:

- Institutional knowledge gap

- Mentor as navigator

- Hidden rules of academic system

- Consequential ignorance

- Social capital transfer

Each code names something different about this segment. “Institutional knowledge gap” focuses on what the student lacked. “Mentor as navigator” focuses on the mentor’s role. “Hidden rules” focuses on the structure of the institution. Multiple codes per segment is normal and appropriate — each represents a different dimension of what is analytically interesting.
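If you code in a spreadsheet or script rather than dedicated software, the worked example can be held in a simple structure. This is a minimal sketch, not a prescribed tool: the class and function names are hypothetical, and the extract is abbreviated here for space.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class CodedSegment:
    participant: str
    extract: str
    codes: tuple[str, ...]  # one segment can carry several codes


segments = [
    CodedSegment(
        participant="P3",
        extract="My mentor told me which professor to go to for extension requests...",
        codes=(
            "Institutional knowledge gap",
            "Mentor as navigator",
            "Hidden rules of academic system",
            "Consequential ignorance",
            "Social capital transfer",
        ),
    ),
]


def code_frequencies(items):
    """Count how many segments each code has been applied to."""
    return Counter(code for seg in items for code in seg.codes)


def participants_per_code(items):
    """Map each code to the set of participants whose data carries it."""
    result = {}
    for seg in items:
        for code in seg.codes:
            result.setdefault(code, set()).add(seg.participant)
    return result


print(code_frequencies(segments).most_common())
print(participants_per_code(segments)["Mentor as navigator"])
```

Tracking participants per code, not just raw counts, matters later: a code applied twenty times by one talkative participant is different evidence from a code applied once each by twenty participants.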

Phase 3: Generating initial themes

Group codes that share a conceptual similarity into potential themes. This is where pattern recognition begins — you are looking for clusters of codes that together describe something important about the dataset. At this stage, themes are candidate patterns, not final conclusions.

Codes clustered into a candidate theme:

- Codes: Mentor as navigator / Institutional knowledge gap / Hidden rules / Social capital transfer / Knowing who to ask / System access through relationship

- Candidate theme: “Peer mentors as institutional navigators who transfer hidden cultural knowledge”
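The clustering itself is interpretive work, but keeping the code-to-theme mapping explicit makes it easy to see which codes have not yet found a home. A minimal sketch, assuming a plain dictionary from theme name to codes; the helper name is hypothetical.

```python
# Candidate theme and its codes, as listed in the worked example.
theme_name = "Peer mentors as institutional navigators who transfer hidden cultural knowledge"
candidate_themes = {
    theme_name: [
        "Mentor as navigator",
        "Institutional knowledge gap",
        "Hidden rules",
        "Social capital transfer",
        "Knowing who to ask",
        "System access through relationship",
    ],
}


def unassigned_codes(all_codes, themes):
    """Return codes not yet placed under any candidate theme, sorted by name."""
    assigned = {code for codes in themes.values() for code in codes}
    return sorted(set(all_codes) - assigned)


# Codes seen so far; "Consequential ignorance" comes from the Phase 2 example.
all_codes = candidate_themes[theme_name] + ["Consequential ignorance"]
print(unassigned_codes(all_codes, candidate_themes))  # ['Consequential ignorance']
```

An unassigned code is not an error. It may belong to a theme you have not yet developed, or it may turn out not to matter for your research question.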

Phase 4: Reviewing themes

Test each candidate theme against two criteria. First: does it hold together internally? Do all the coded segments grouped under this theme actually fit it, or are some segments there because you had nowhere else to put them? Second: does it work in relation to the full dataset? Does the theme capture something that is genuinely present and significant in the data, or does it reflect only a few isolated moments?

This is the phase where negative cases matter most. For each theme you are developing, ask: are there participants whose data contradicts or complicates this theme? If twelve participants describe mentors as navigators but three describe mentors primarily as emotional support rather than instrumental guides, the theme needs qualification — not abandonment, but accurate scoping.
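The scoping check can be made concrete by tallying which participants support a theme and which complicate it. The sketch below uses the twelve-versus-three example from the paragraph above; the participant IDs and function name are invented for illustration, and the number informs the scoping statement rather than replacing it.

```python
def theme_scope(supporting, complicating):
    """Summarise how many participants support or complicate a theme."""
    supporting, complicating = set(supporting), set(complicating)
    total = len(supporting | complicating)
    return {
        "supporting": len(supporting),
        "complicating": len(complicating),
        # Participants whose data both fits and complicates the theme.
        "both": sorted(supporting & complicating),
        "share_supporting": len(supporting) / total if total else 0.0,
    }


# Hypothetical IDs: twelve participants describe mentors as navigators,
# three describe them primarily as emotional support.
navigators = [f"P{i}" for i in range(1, 13)]
emotional_support = ["P13", "P14", "P15"]

scope = theme_scope(navigators, emotional_support)
print(scope["supporting"], scope["complicating"], scope["share_supporting"])
```

Here the theme holds for twelve of fifteen participants, so the results chapter should say so and describe what the remaining three reported instead.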

Phase 5: Defining and naming themes

Write a one-paragraph definition of each theme that specifies its scope and boundaries. What does this theme include? What does it exclude? How is it different from the adjacent themes? The definition forces you to be precise about what you are claiming, and it directly produces the theme descriptions you will use in your results chapter.

Phase 6: Producing the report

Each theme in your results chapter follows the structure described in Module 1: name, definition, a description of how it manifested across the dataset, and supporting quotes from multiple participants. By this point the analytical work is done; the results chapter presents its output.

Trustworthiness: The Four Criteria and How to Demonstrate Them

Qualitative research does not use reliability and validity in their quantitative forms. Instead, Lincoln and Guba’s four trustworthiness criteria — credibility, transferability, dependability, and confirmability — provide the framework for evaluating qualitative rigour. The module introduces these; this section explains specifically how to demonstrate each one.

| Criterion | How to demonstrate it in practice |
| --- | --- |
| Credibility (parallel to internal validity) | Member checking: share your theme descriptions with participants and ask whether they recognise their experience in them. Prolonged engagement: sustained time in the field before and during data collection. Triangulation: multiple data sources or methods that converge on the same patterns. Negative case analysis: actively searching for and addressing data that contradicts your themes. |
| Transferability (parallel to external validity) | Provide thick description of your setting, participants, and context, enough that readers can judge whether findings might transfer to their own contexts. You cannot claim generalisability; you can provide the information readers need to make their own transfer judgments. |
| Dependability (parallel to reliability) | Maintain an audit trail: document all decisions made during data collection and analysis, including what you changed and why. Your reflexive journal, coding memos, and theme development notes constitute this trail. |
| Confirmability (parallel to objectivity) | Show that findings emerge from the data rather than from your assumptions. The audit trail serves confirmability as well as dependability. Reflexive bracketing (acknowledging your prior assumptions and how you managed them) demonstrates that you distinguish your perspective from your participants’. |
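An audit trail does not need special software. The following is a minimal sketch, assuming an append-only JSON Lines file; the field names and function names are illustrative choices, not a standard.

```python
import json
from datetime import datetime, timezone
from pathlib import Path


def log_decision(path, phase, decision, rationale):
    """Append one timestamped analytic decision to the audit trail file."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "phase": phase,
        "decision": decision,
        "rationale": rationale,
    }
    with Path(path).open("a", encoding="utf-8") as fh:
        fh.write(json.dumps(entry) + "\n")
    return entry


def read_trail(path):
    """Load every logged decision, oldest first."""
    with Path(path).open(encoding="utf-8") as fh:
        return [json.loads(line) for line in fh if line.strip()]
```

A call such as `log_decision("audit.jsonl", "Phase 4", "Scoped the navigator theme", "Three participants described emotional support instead")` records what changed and why. Because entries are only ever appended, the file preserves the order in which decisions were made, which is the property an examiner looks for in an audit trail.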

One of the most commonly omitted trustworthiness measures in Indian qualitative theses is negative case analysis: actively looking for data that does not fit your themes. Including a sentence in your methodology chapter stating that you conducted negative case analysis, and then actually demonstrating it in your results (by noting where themes do not apply and why), signals qualitative rigour that examiners notice and value.

FAQs

Q: How do you conduct a semi-structured interview for research?

Develop an interview guide with six to ten open-ended main questions organised around your research themes. Treat it as a flexible framework, not a rigid script. Begin with easy rapport-building questions before deeper topics. Use probes to explore responses: ‘Can you tell me more about that?’ ‘What did you mean by…?’ ‘Can you give me an example?’ Record with participant consent. Transcribe verbatim. Write field notes immediately after each interview capturing your observations and preliminary thoughts. Pilot the guide with two or three participants before full data collection.

Q: What is the difference between inductive and deductive coding?

Inductive coding generates codes from the data: you read the data and develop codes without a predetermined framework. Deductive coding applies a pre-existing theoretical framework to the data: you code for categories you defined in advance. In practice, much qualitative research is abductive, beginning inductively and then connecting emerging codes to existing theory. Grounded theory is primarily inductive. Framework analysis is largely deductive. Thematic analysis can be either. State clearly which approach you used and why it fits your research question.

Q: How many interviews do you need for qualitative research?

Qualitative research does not have a fixed required sample size. Many studies use data saturation as the stopping criterion: the point at which additional interviews produce no new themes or codes. In practice, saturation is often reported at around 12–25 interviews for a fairly homogeneous participant group, and heterogeneous groups may require more. Some methodologies have their own norms: phenomenology typically uses 6–10; grounded theory requires enough to develop a full theoretical framework, often 20–30. Justify your sample size by explaining when and how you determined saturation.
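To explain “when and how” you determined saturation, it helps to track how many new codes each successive interview contributed. The sketch below assumes a stopping rule of three consecutive interviews with no new codes. That threshold is an illustrative assumption, not a fixed standard, and the function names and example data are hypothetical.

```python
def new_codes_per_interview(codes_by_interview):
    """For each interview, in order, count codes not seen in any earlier one."""
    seen = set()
    counts = []
    for codes in codes_by_interview:
        fresh = set(codes) - seen
        counts.append(len(fresh))
        seen |= fresh
    return counts


def saturation_point(counts, run_length=3):
    """Return the 1-based number of the last interview before a run of
    `run_length` consecutive interviews with no new codes, or None."""
    run = 0
    for i, n in enumerate(counts, start=1):
        run = run + 1 if n == 0 else 0
        if run == run_length:
            return i - run_length
    return None


# Hypothetical codes from five interviews, in the order they were conducted.
interviews = [["a", "b"], ["b", "c"], ["c"], ["a"], ["b"]]
counts = new_codes_per_interview(interviews)
print(counts)                    # [2, 1, 0, 0, 0]
print(saturation_point(counts))  # 2
```

Reporting the per-interview counts in your methodology chapter, together with the stopping rule you chose, gives examiners something concrete to evaluate.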

Q: What is member checking in qualitative research?

Member checking is a trustworthiness strategy in which you share preliminary findings or interpretations with research participants to assess whether they recognise and agree with your analysis. It serves as a check on the credibility of your interpretations — not to achieve consensus, but to identify significant disagreements that might indicate misinterpretation. Share a summary of themes, not verbatim transcripts. Document both agreements and disagreements, and explain how discrepancies were resolved. Member checking is a process, not a single event.

Q: What is reflexivity in qualitative research?

Reflexivity is the ongoing process of examining how your background, assumptions, and positionality influence your research design, data collection, analysis, and interpretation. A reflexivity statement does not simply acknowledge that bias exists — it specifically describes how your identity and prior experiences shaped your research decisions. Keep a reflexivity journal throughout the project. Reflexivity is not a limitation to minimise; it is a methodological tool that makes your analytical decisions transparent and auditable.

Author

Dr. Rekha Khandelwal, a legal scholar and academic writing expert, is the founder of AspirixWriters. She has extensive experience in guiding students and researchers in writing research papers, theses, and dissertations with clarity and originality. Her work focuses on ethical AI-assisted writing, structured research, and making academic writing simple and effective for learners worldwide.


References

- Braun, V., & Clarke, V. (2022). Thematic Analysis: A Practical Guide. Sage.

- Braun, V., & Clarke, V. (2006). Using thematic analysis in psychology. Qualitative Research in Psychology, 3(2), 77–101.

- Lincoln, Y. S., & Guba, E. G. (1985). Naturalistic Inquiry. Sage.

- Saldaña, J. (2021). The Coding Manual for Qualitative Researchers (4th ed.). Sage.

- Smith, J. A., Flowers, P., & Larkin, M. (2009). Interpretative Phenomenological Analysis: Theory, Method and Research. Sage.

- Flick, U. (2018). An Introduction to Qualitative Research (6th ed.). Sage.

Next— Mixed Methods Research: When and How to Combine Approaches

Next in Series

- Complete Guide: Data Analysis and Results Presentation: Complete Guide for Quantitative, Qualitative & Legal Research (2026) (Module 4)

- Complete Guide: Organization and Academic Tone: Complete Guide to Professional Scholarly Writing (2026) (Module 5)

- Complete Guide: Peer Review and Publication: Complete Guide from Submission to Acceptance (2026) (Module 6)

- Complete Guide: AI Tools in Academic Research: Opportunities, Ethics, and Best Practices (2026) (Module 7)

- Complete Guide: Grant Writing and Research Funding: Complete Guide to Finding Money for Your Research (2026) (Module 8)

- Complete Guide: Academic Career Development: Complete Guide to Building Your Professional Life in Research (2026) (Module 9)

- Complete Guide: Research Ethics and the IRB Process: Complete Guide to Doing Research Responsibly (2026) (Module 10)

The Complete Guide to Research Paper Structure: IMRAD Format, Thesis Organization & Academic Writing (2026)

From Concept to Submission: A Complete Guide to Research Paper and Thesis Writing Academic Writing…

The IMRAD Framework: Why It Exists, How It Really Works, and Where It Breaks Down

The IMRAD Framework Understanding the Structure of Research Papers and Theses – Module 1: From Concept…

How to Write a Research Introduction That Reviewers Cannot Ignore

How to Write a Research Introduction Module 1: Understanding the Structure of Research Papers and…

How to Write a Methods Section That Reviewers Will Trust

How to Write a Methods Section Module 1: Understanding the Structure of Research Papers and…

The Results Section: How to Present Findings Without Letting Interpretation Slip In

The Results Section Module 1: Understanding the Structure of Research Papers and Theses From Concept…

The Discussion Section: How to Turn Findings Into Knowledge

The Discussion Section Module 1: Understanding the Structure of Research Papers and Theses From Concept…

Complete Thesis Structure: A Chapter-by-Chapter Guide

Complete Thesis Structure Module 1: Understanding the Structure of Research Papers and Theses From Concept…

10 Structural Mistakes That Get Research Papers Rejected — And How to Fix Every One

10 Structural Mistakes That Get Research Papers Rejected Module 1: Understanding the Structure of Research…

How to Write a Journal Abstract That Gets Your Paper Read

How to Write a Journal Abstract Module 1: Understanding the Structure of Research Papers and…

Systematic Review and PRISMA: How to Conduct and Report a Review That Meets Publication Standards

Systematic Review and PRISMA Module 1: Understanding the Structure of Research Papers and Theses From…

Legal Research Methods: A Complete Guide to Doctrinal, Empirical and Comparative Legal Research

Legal Research Methods Module 1: Understanding the Structure of Research Papers and Theses From Concept…

The Academic Writing Process: Complete Guide from First Draft to Submission (2026)

Module 2, Complete Guide: The Academic Writing Process – from First Draft to Submission From…

How to Start Writing and Keep Going

How to Start Writing Module 2: The Academic Writing Process From Concept to Submission Series …

How to Write Clear Engaging Academic Prose

How to Write Clear Engaging Academic Prose – Module 2: The Academic Writing Process From…

The Revision Process: How to Turn a Draft Into a Submission

The Revision Process Module 2: The Academic Writing Process From Concept to Submission Series | …

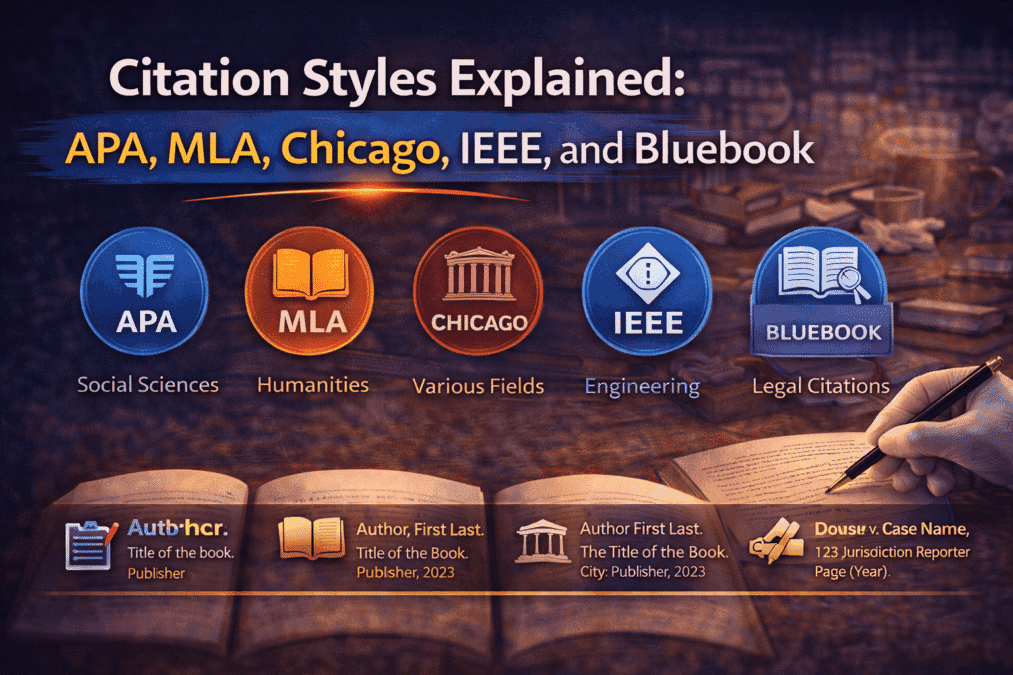

Citation Styles Explained: APA, MLA, Chicago, IEEE, and Bluebook

Citation Styles Explained Module 2: The Academic Writing Process From Concept to Submission Series | …

Preparing a Submission Ready Document: The Complete Pre-Submission Checklist

Preparing a Submission Ready Document Module 2: The Academic Writing Process From Concept to Submission…

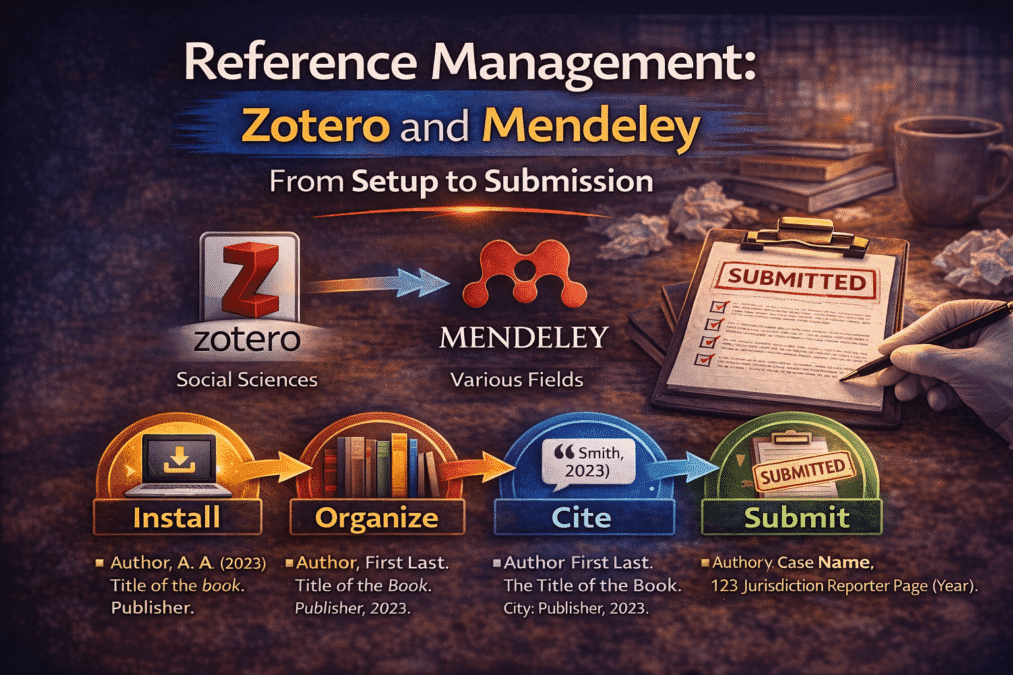

Reference Management: Zotero and Mendeley from Setup to Submission

Reference Management Module 2: The Academic Writing Process From Concept to Submission Series | 2026 Academic…

Legal Writing Process and Citation: A Complete Guide for Law Students and Legal Researchers

Legal Writing Process and Citation Module 2: The Academic Writing Process From Concept to Submission…

Research Methodologies: Complete Guide to Quantitative, Qualitative & Mixed Methods (2026)

(Module 3) – Complete Guide Research Methodologies From Concept to Submission: A Complete Guide to…

Research Paradigms: Why Your Philosophical Stance Shapes Everything

Research Paradigms Module 3: Research Methodologies From Concept to Submission Series | 2026 Academic Writing Mastery:…

Quantitative Research Design: From Hypothesis to Valid Results

Quantitative Research Design Module 3: Research Methodologies From Concept to Submission Series | 2026 Academic…

Qualitative Research Design: Choosing the Right Approach

Qualitative Research Design Module 3: Research Methodologies From Concept to Submission Series | 2026 Academic…

Qualitative Data Collection and Analysis: Interviews, Coding, and Trustworthiness

Qualitative Data Collection and Analysis Module 3: Research Methodologies From Concept to Submission Series | …

Mixed Methods Research: When and How to Combine Approaches

Mixed Methods Research Module 3: Research Methodologies From Concept to Submission Series | 2026 Academic…

Sampling: Choosing Who to Study and How Many

Sampling Module 3: Research Methodologies From Concept to Submission Series | 2026 Academic Writing Mastery:…

Research Ethics in Practice: What Ethics Forms Don’t Tell You

Research Ethics in Practice Module 3: Research Methodologies From Concept to Submission Series | 2026…

Data Analysis and Results Presentation: Complete Guide for Quantitative & Qualitative Research (2026)

(Module 4) Data Analysis and Results Presentation: From Concept to Submission: A Complete Guide to…

Preparing Your Data: The Work That Determines Analysis Quality

Cluster Post 1 | Module 4: Data Analysis and Presenting Results From Concept to Submission…

Descriptive Statistics: What to Report and How to Read Them

Cluster Post 2 | Module 4: Data Analysis and Presenting Results From Concept to Submission…