Cluster Post 2 | Module 7: AI Tools in Academic Research — Opportunities, Ethics, and Best Practices

From Concept to Submission Series | 2026

Academic Writing Mastery: The Complete 2026 Guide To Research Papers, Thesis & Dissertation Writing

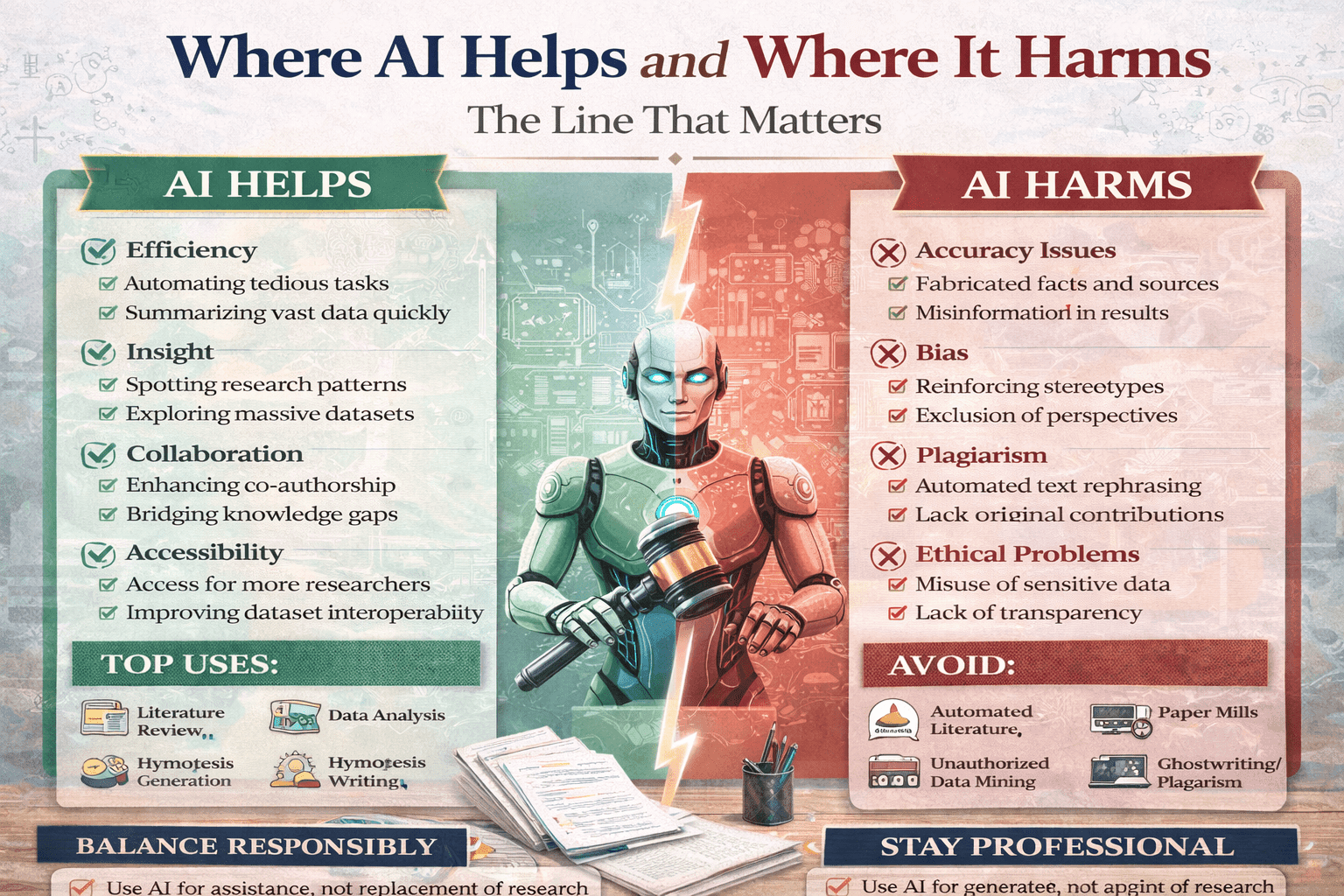

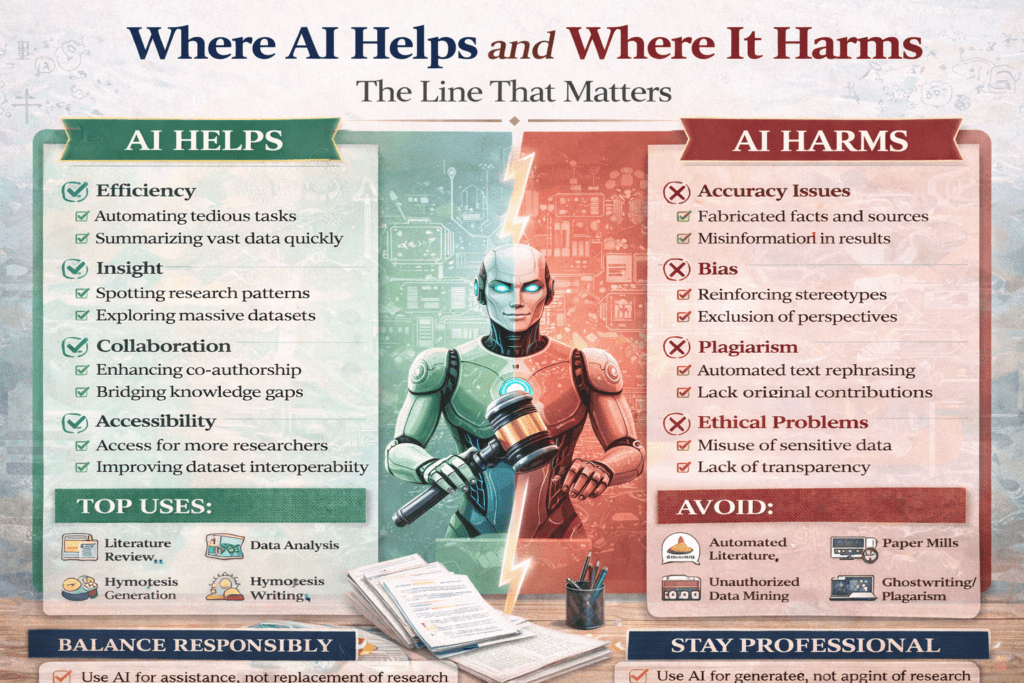

Where AI Helps and Where It Harms

The module overview gives lists of allowed and prohibited AI uses. This post goes deeper: the conceptual framework that explains why the line falls where it does, the genuinely ambiguous grey-zone cases the module does not resolve, and five practical tests you can apply to any borderline use in real time.

The Principle Behind the Rules

The module’s allowed/not-allowed lists are accurate but do not explain the underlying principle. Understanding the principle matters because no list can anticipate every situation, and you will face cases the module does not cover.

The principle is this: AI use is appropriate when it accelerates your ability to do your intellectual work; it is inappropriate when it substitutes for the intellectual work itself. This distinction — acceleration vs. substitution — is the line. Everything else follows from it.

Your thesis makes a claim about the world. That claim must be yours: grounded in your reading of the literature, your analysis of the data, your reasoning about what the evidence means. The intellectual acts that produce that claim — reading, analysing, interpreting, arguing — are the work. Anything that makes those acts faster, easier, or better expressed is appropriate assistance. Anything that performs those acts in your place is substitution.

This principle explains the apparent inconsistency in the lists: using AI to improve the clarity of a sentence you wrote is acceleration; using AI to write the sentence is substitution. Using AI to help you understand a statistical method you are then applying is acceleration; asking AI to perform and interpret the analysis for you is substitution.

The Gradient: From Clearly Acceptable to Clearly Not

In practice, AI use is not binary — it falls on a gradient from clearly acceptable to clearly not. Understanding where different uses fall helps with borderline cases.

| Use | Assessment and reasoning |
| --- | --- |
| Using Grammarly to check grammar and spelling | Clearly acceptable. Equivalent to spell-check. No intellectual work substituted. |
| Asking AI to improve the clarity of a paragraph you wrote | Clearly acceptable. The ideas, argument, and structure are yours. AI is improving expression only. |
| Asking AI to explain a concept you do not understand | Clearly acceptable. This is learning. The understanding you develop is yours. |
| Using Elicit to find papers on your topic | Clearly acceptable. Equivalent to an advanced database search. You still read and evaluate the papers. |
| Asking AI to summarise a paper you have not read, and using that summary in your literature review | Not acceptable. You are citing work you have not read, based on an AI summary that may be wrong. This is an integrity failure independent of AI: you should never cite work you have not read. |
| Asking AI to suggest themes in your qualitative data | Grey zone (see below). Depends on how it is used. |
| Asking AI to write your methodology section | Not acceptable. The methodology section documents your intellectual decisions. AI cannot have made those decisions. |
| Using AI to generate code for your statistical analysis | Acceptable with conditions. You must design the analysis, understand the code, run it yourself, and interpret the results. |
| Asking AI to interpret your statistical results | Not acceptable. Interpretation is the intellectual core of analysis. It must be yours. |
| Asking AI to write your literature review | Not acceptable. Constitutes outsourcing the central intellectual work of a chapter. |
| Asking AI to help you think through counterarguments to your thesis | Acceptable. This is a thinking partnership: the same intellectual work you would do in a seminar discussion. |
| Asking AI what your research question should be | Not acceptable. The research question is the foundational intellectual act of the thesis. |

The Grey Zone: Cases the Module Does Not Resolve

Qualitative coding assistance

The module says qualitative coding requires your deep engagement with the data. This is true, but AI assistance with qualitative data falls on a gradient. Using AI to organise codes you have already identified (for example, grouping twenty manually developed codes into potential themes) is closer to acceleration. Asking AI to identify themes in transcripts you have not coded yourself is substitution. The question to ask: did I engage with the data before I used AI? If yes, AI assistance in organising that engagement is generally acceptable. If no, AI is doing the analytical work.

Translating non-English sources

Using AI to translate a paper from French or German so you can read and engage with it is clearly acceptable; it is the same kind of use as Google Translate, just better. The grey zone begins when you ask AI to summarise a non-English paper you cannot read yourself and then cite it as if you had engaged with the original. That is more problematic, because you are relying on a summary you cannot check. If you have used AI translation, consider noting it ('translated with AI assistance') where relevant, and verify key passages with a human translator if the paper is central to your argument.

Structuring an outline

The module permits brainstorming. Does that include asking AI to suggest the structure of your thesis chapter? This is genuinely ambiguous. If the structure reflects the logical requirements of your argument — and you understand why each section follows from the previous — using AI to generate an initial outline that you then evaluate, restructure, and own is closer to acceleration. If you take the AI’s outline as the chapter’s skeleton without interrogating whether it reflects the logic of your specific argument, that is more problematic. The test: can you explain, in your own words, why the chapter has the structure it does? If yes, the structure is yours regardless of how you arrived at it.

Improving writing for non-native speakers

This is the most frequently misunderstood grey zone. A non-native speaker who writes in clear but non-idiomatic English and uses AI to improve the phrasing is using AI to accelerate expression, not substitute thinking. This is legitimate. But the gradient matters: asking AI to rewrite a whole section ‘in better academic English’ — where AI may also subtly change the argument, add hedges, remove precision — is more problematic. The principle: the ideas and argument must be preserved exactly; only the expression should change. Review every AI suggestion to ensure the meaning is unchanged.

Five Practical Tests for Borderline Cases

Test 1: The explanation test

Can you explain, in your own words and without referring to the AI output, the substance of what the AI produced? Suppose AI helped you revise a paragraph about structural equation modelling in your methods section: can you explain SEM, why you chose it, how it works, and what the output means? If yes, the AI accelerated your work. If you cannot explain it without the AI's output, the AI has substituted for understanding you should have.

Test 2: The prior work test

Did you do substantial intellectual work before asking AI for help? Good AI use typically begins with your own engagement: you read the papers, you developed the codes, you wrote the draft. AI helps at the revision, organisation, or expression stage. When AI is the first thing you reach for — before reading, before thinking, before writing — that is a signal that substitution may be occurring.

Test 3: The verification test

Can you verify every factual claim in the AI output against an independent source? If AI claims a paper says X, have you read that paper and confirmed it says X? If AI produces a statistical output, have you run the analysis yourself and confirmed it? AI outputs that cannot be independently verified should not appear in your thesis. If you cannot verify it, you cannot use it.

Test 4: The disclosure test

Would you be comfortable disclosing this use to your supervisor and thesis examiners? If the answer is no — if you would not want to tell your supervisor that you used AI this way — that is a reliable signal that the use crosses into substitution or integrity violation. Appropriate AI use is always fully disclosable.

Test 5: The removal test

If you removed all AI assistance from your process, would you still have produced substantially the same research? Slower, yes. Less polished, perhaps. But the same questions, the same data, the same analysis, the same conclusions? If removing AI would fundamentally change what you produced — not just when you produced it, but what — that is substitution. If you would have produced the same research just more slowly, AI has accelerated without substituting.
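The five tests can also be kept as a small self-check you run against any borderline use. The sketch below is only one way to record them; the class name `AIUse`, the field names, and the wording of the verdicts are illustrative assumptions, not part of the module or of any institutional policy. It treats a use as consistent with acceleration only when all five tests pass, and otherwise lists the tests that failed.

```python
from dataclasses import dataclass

# Hypothetical field names paired with the test each one stands for (Tests 1-5 above).
TESTS = (
    ("can_explain", "explanation"),
    ("did_prior_work", "prior work"),
    ("can_verify", "verification"),
    ("would_disclose", "disclosure"),
    ("same_without_ai", "removal"),
)


@dataclass(frozen=True)
class AIUse:
    """Your own honest answers to the five tests for one use of AI."""
    description: str
    can_explain: bool
    did_prior_work: bool
    can_verify: bool
    would_disclose: bool
    same_without_ai: bool


def failed_tests(use: AIUse) -> list[str]:
    """Return the names of the tests this use does not pass, in test order."""
    return [label for field, label in TESTS if not getattr(use, field)]


def assess(use: AIUse) -> str:
    """Summarise the result: all five passing suggests acceleration."""
    failed = failed_tests(use)
    if not failed:
        return "consistent with acceleration"
    return "possible substitution; failed: " + ", ".join(failed)


# Two illustrative cases drawn from the gradient table.
polish = AIUse("AI tightened a paragraph I drafted", True, True, True, True, True)
summary = AIUse("AI summarised a paper I have not read", False, False, False, False, False)

print(assess(polish))
print(assess(summary))
```

The checklist does not make the judgement for you: each answer is still your own honest assessment, and a single failed test is a prompt to stop and reconsider rather than a verdict.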


FAQs

Q: What tasks should researchers never use AI for?

Researchers should never use AI for: generating research findings or conclusions (AI cannot conduct research); producing citations without independent verification (AI can fabricate citations); writing methodology sections that describe methods the researcher did not actually use; conducting or interpreting statistical analysis in the researcher's place (as distinct from generating code for an analysis you design, run, and interpret yourself); producing qualitative coding or analysis of data the researcher has not engaged with; drafting ethical declarations or IRB applications based on research not yet designed; and writing thesis or dissertation chapters that are passed off as the researcher's own intellectual work. These are the core intellectual activities that constitute research. Handing them to AI is academic fraud, not efficiency.

Q: What is AI hallucination and why is it dangerous in research?

AI hallucination is when an AI model generates confident, plausible-sounding information that is factually incorrect — including fabricated citations with realistic-looking author names, journal titles, volume numbers, and page numbers that do not exist. In research, hallucinated citations are particularly dangerous because they are difficult to detect without checking each source individually, they undermine the integrity of your work if published, and in legal research, citing a non-existent case can have professional consequences. Never cite any source based solely on AI output — verify every citation against a primary database before inclusion.
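One way to enforce the verify-before-citing rule is to keep a simple log of every candidate source and refuse to cite anything that has not been both found in a primary database and read. The sketch below assumes you update the log by hand after checking each source yourself; the `Citation` class, its field names, and the sample keys are hypothetical placeholders, and the code does not query any database.

```python
from dataclasses import dataclass


@dataclass
class Citation:
    """One candidate source; the flags are set manually after you check it."""
    key: str
    suggested_by_ai: bool
    found_in_database: bool = False  # located in a primary database or catalogue
    read_by_author: bool = False     # you have read the source itself


def blocked_citations(citations: list[Citation]) -> list[str]:
    """Return keys of sources that should not yet appear in the thesis."""
    return [
        c.key
        for c in citations
        if not (c.found_in_database and c.read_by_author)
    ]


# Placeholder entries for illustration only; these are not real references.
log = [
    Citation("source_a", suggested_by_ai=True),
    Citation("source_b", suggested_by_ai=True, found_in_database=True),
    Citation("source_c", suggested_by_ai=False, found_in_database=True, read_by_author=True),
]

print(blocked_citations(log))
```

Note that the rule applies to every source, not only those an AI suggested: `suggested_by_ai` is recorded so you know where extra caution is needed, but it does not relax the check for anything else.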

Q: Does using AI in research count as plagiarism?

Using AI to generate text presented as your own writing may constitute plagiarism or academic fraud depending on your institution's policy, even if the AI output is not copied from a specific source. Many university academic integrity policies now treat submitting AI-generated work without disclosure as a form of plagiarism or misconduct, so check the wording of your own institution's policy. Beyond policy compliance, there is a deeper issue: if AI wrote the argument, the analysis, or the synthesis, the intellectual contribution is not yours. Academic work is assessed as the product of your thinking, and AI-generated content reflects patterns in the model's training data, not your research.

Q: Can AI detect plagiarism in research papers?

AI-based plagiarism detection tools (Turnitin AI, iThenticate, Copyleaks) can identify text that closely matches existing published sources and flag potential AI-generated content. They are imperfect: they produce false positives (flagging legitimate paraphrasing) and false negatives (missing cleverly paraphrased plagiarism). AI content detection specifically is unreliable — it identifies probabilistic patterns associated with AI writing, not definitive evidence. The solution is not to evade detection but to conduct genuine research: your own reading, your own analysis, your own writing, with AI used only for appropriate assistance.

Q: How does AI affect research integrity in Indian universities?

UGC has issued guidelines addressing AI use in higher education, and most NLUs and Indian universities are developing AI academic integrity policies in 2025–2026. At present, Shodhganga anti-plagiarism checks catch text that matches published sources but do not reliably detect AI-generated content. PhD theses and research papers submitted to journals face increasing scrutiny for AI-generated sections, and the reputational risk of having AI-generated content identified in submitted work is significant. The safest approach is to use AI only for tasks where disclosure is straightforward (writing assistance, grammar checking, literature mapping) and to disclose that use accordingly.


Next: Cluster Post 3 — Prompt Engineering for Researchers: Getting Useful Output

- Module 1 Overview: The Complete Guide to Research Paper and Thesis Structure

- Module 2 Overview: The Academic Writing Process: Complete Guide from First Draft to Submission (2026)

- Module 3 Overview: Research Methodologies: Complete Guide to Quantitative, Qualitative, Mixed Methods & Legal Research (2026)

- Module 4 Overview: Data Analysis and Results Presentation: Complete Guide for Quantitative, Qualitative & Legal Research (2026)

- Module 5 Overview: Organization and Academic Tone: Complete Guide to Professional Scholarly Writing (2026)

- Module 6 Overview: Peer Review and Publication: Complete Guide from Submission to Acceptance (2026)

- Module 7 Overview: AI Tools in Academic Research: Opportunities, Ethics, and Best Practices (2026)

Next in Series

- Module 8 Overview: Grant Writing and Research Funding: Complete Guide to Finding Money for Your Research (2026)

- Module 9 Overview: Academic Career Development: Complete Guide to Building Your Professional Life in Research (2026)

- Module 10 Overview: Research Ethics and the IRB Process: Complete Guide to Doing Research Responsibly (2026)

Writing the Thesis Abstract and Introduction

Cluster Post 2 | Module 5: Thesis Writing and Submission From Concept to Submission Series …

Writing the Results Section: Separating Findings from Interpretation

Cluster Post 6 | Module 4: Data Analysis and Presenting Results From Concept to Submission…

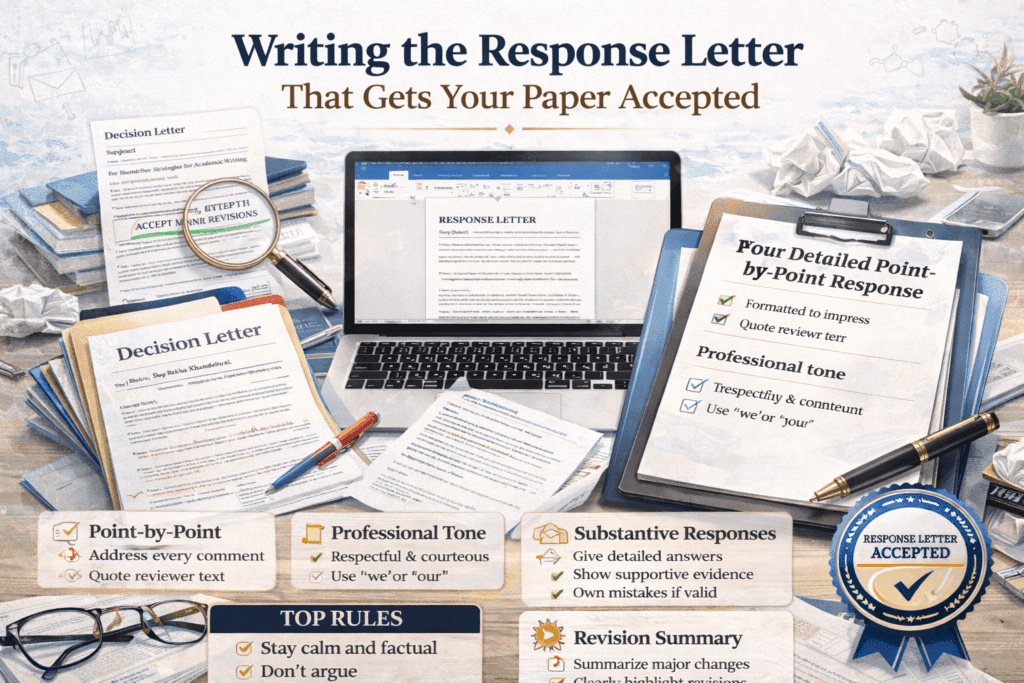

Writing the Response Letter That Gets Your Paper Accepted

Cluster Post 2 | Module 6: Peer Review, Responding to Feedback, and Publication Strategies From…

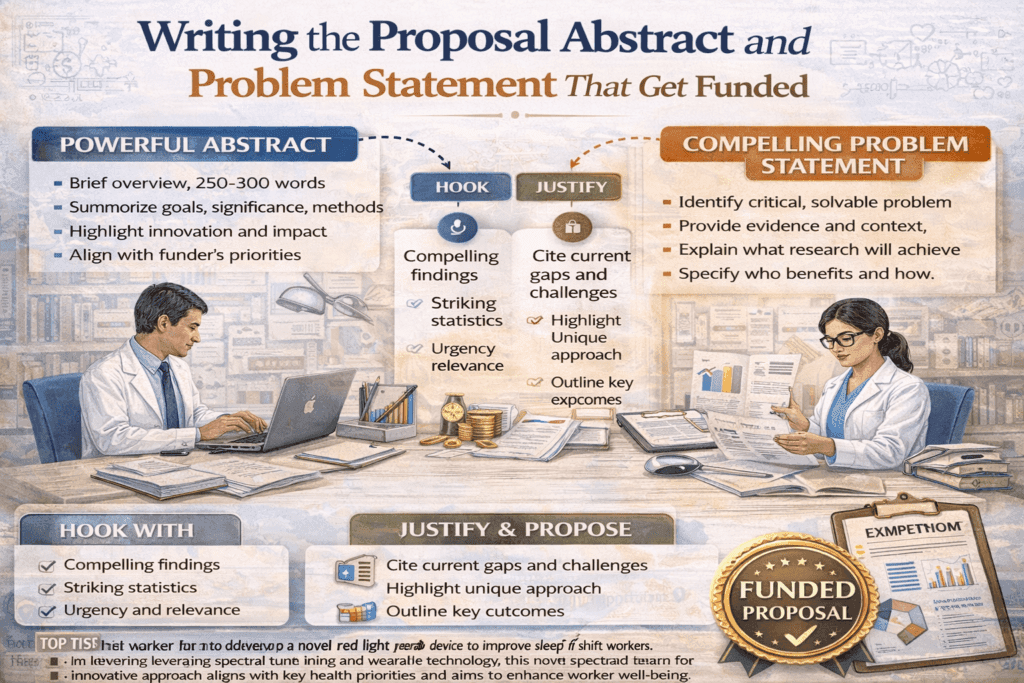

Writing the Proposal Abstract and Problem Statement That Get Funded

Cluster Post 2 | Module 8: Grant Writing and Research Funding From Concept to Submission…

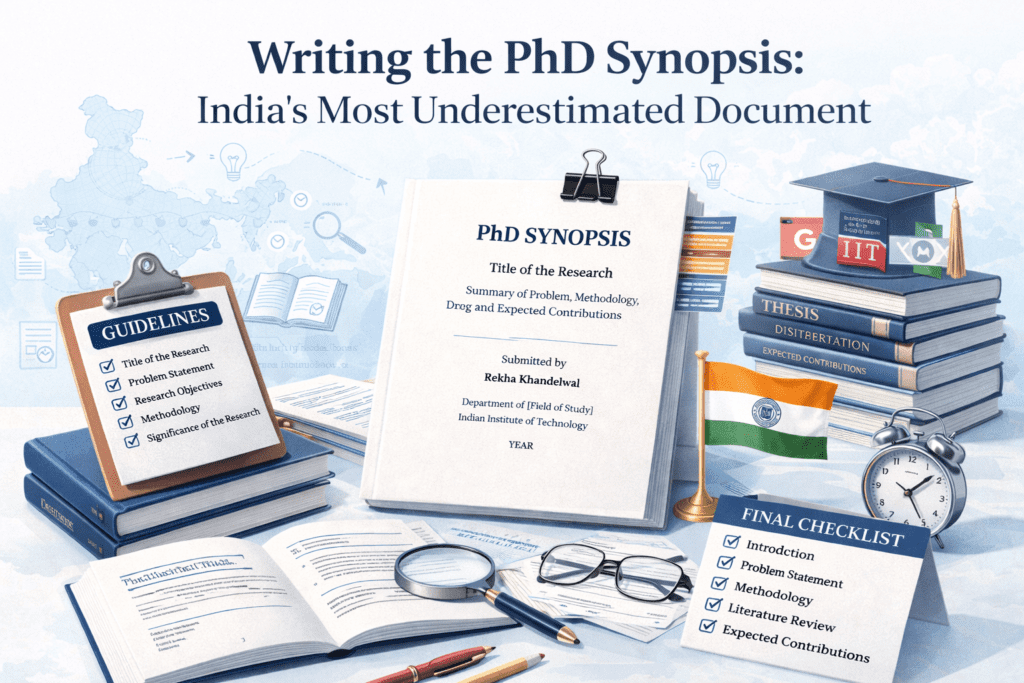

Writing the PhD Synopsis: India’s Most Underestimated Document

Cluster Post 6 | Module 5: Thesis Writing and Submission From Concept to Submission Series …

Where AI Helps and Where It Harms: The Line That Matters

Cluster Post 2 | Module 7: AI Tools in Academic Research — Opportunities, Ethics, and…